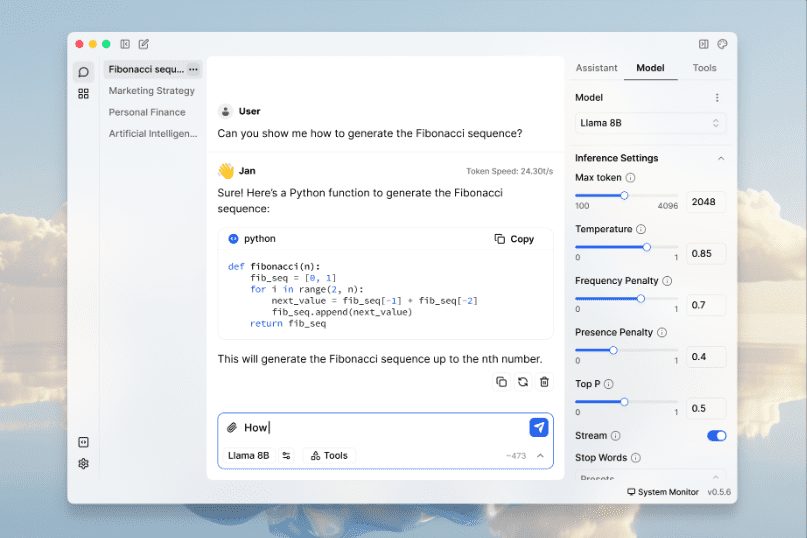

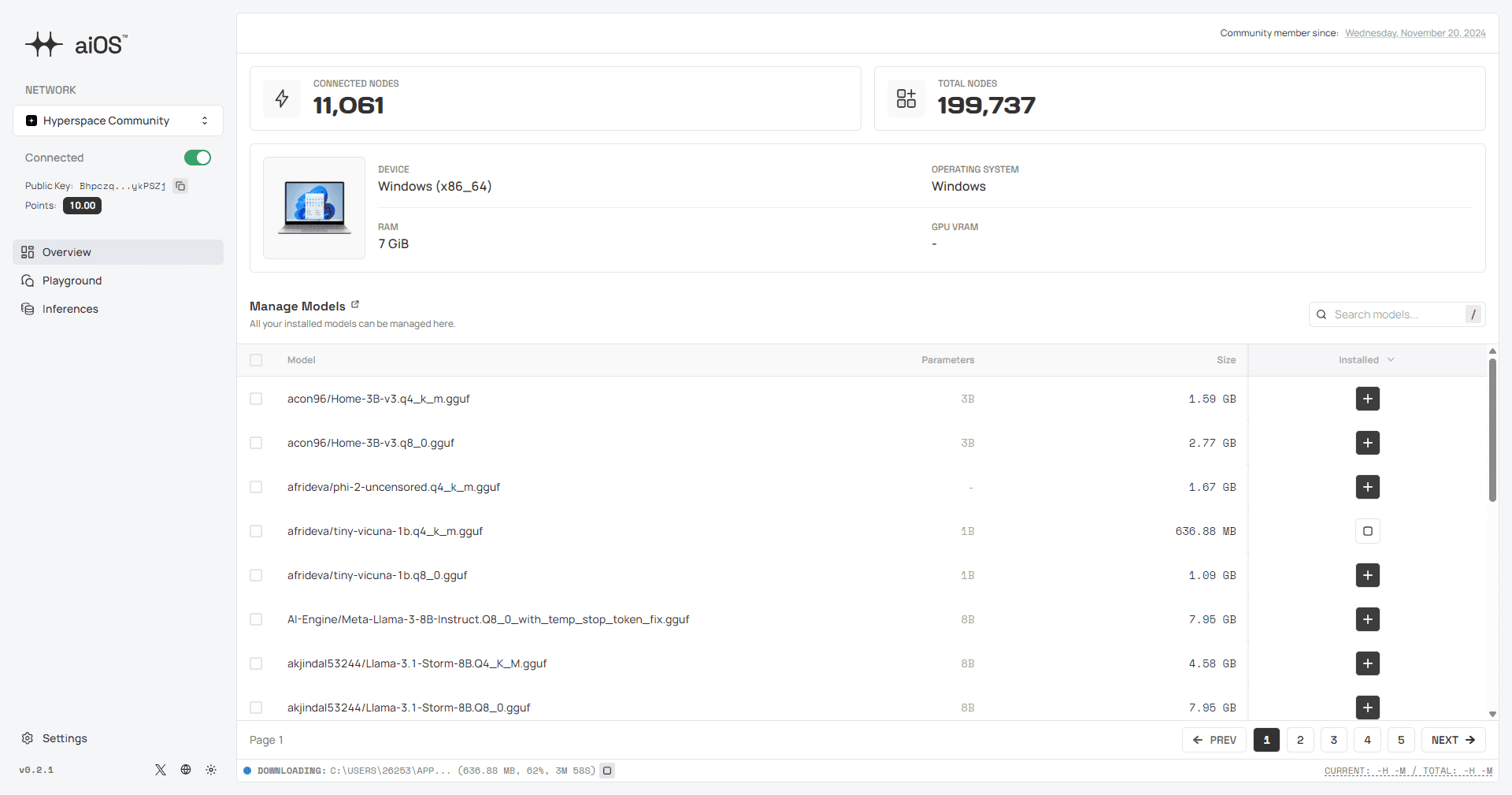

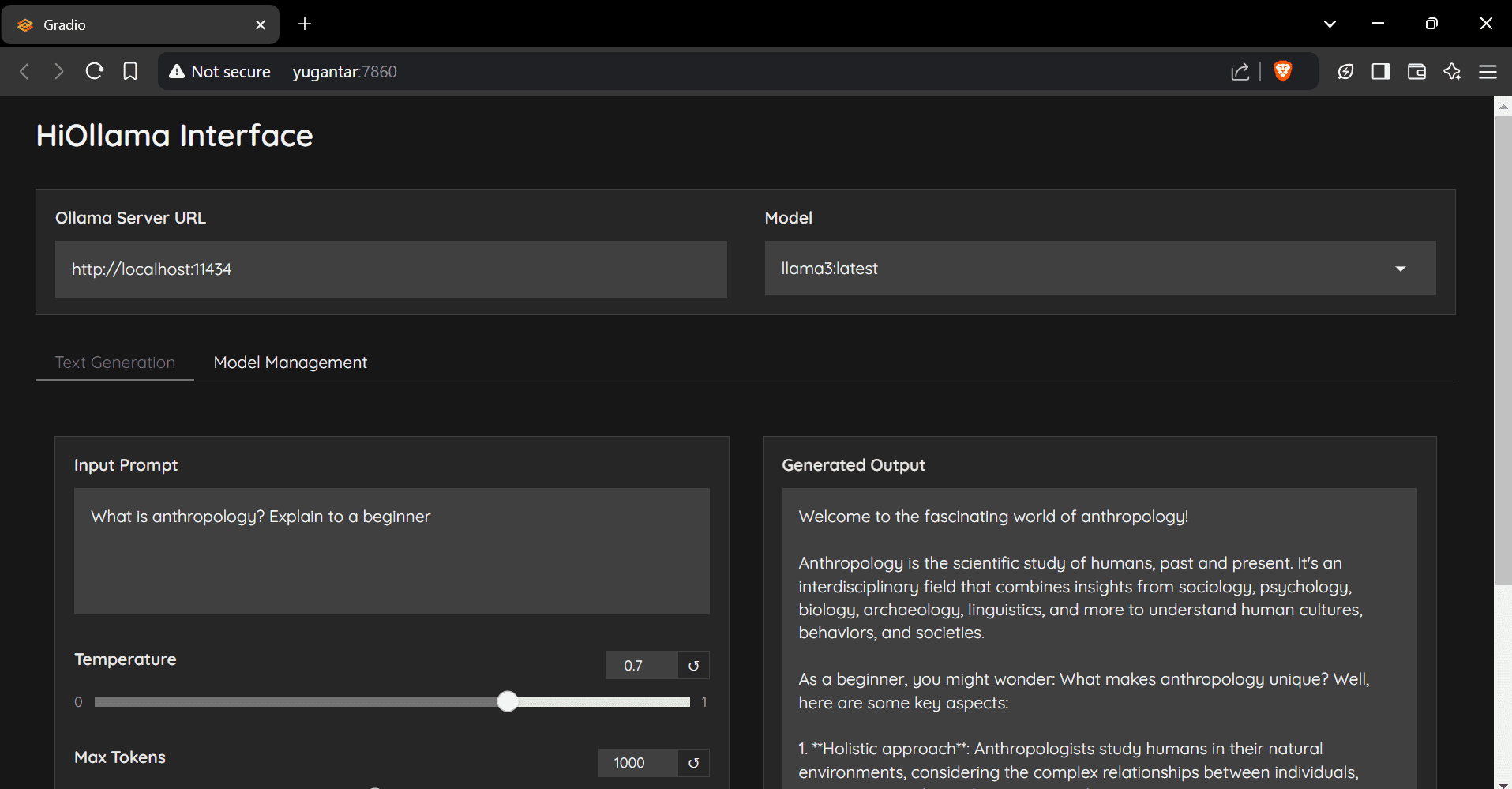

llama.cpp: an efficient inference tool that supports multiple hardware platforms and makes LLM inference easy to implement

General Introduction

llama.cpp is a library implemented in pure C/C++ and designed to simplify the inference process for Large Language Models (LLMs). It supports a wide range of hardware platforms, including Apple Silicon, NVIDIA GPUs, and AMD GPUs, and provides a variety of quant...