Kimi-Dev - Moonshot AI's Open Source Code Model

What's Kimi-Dev?

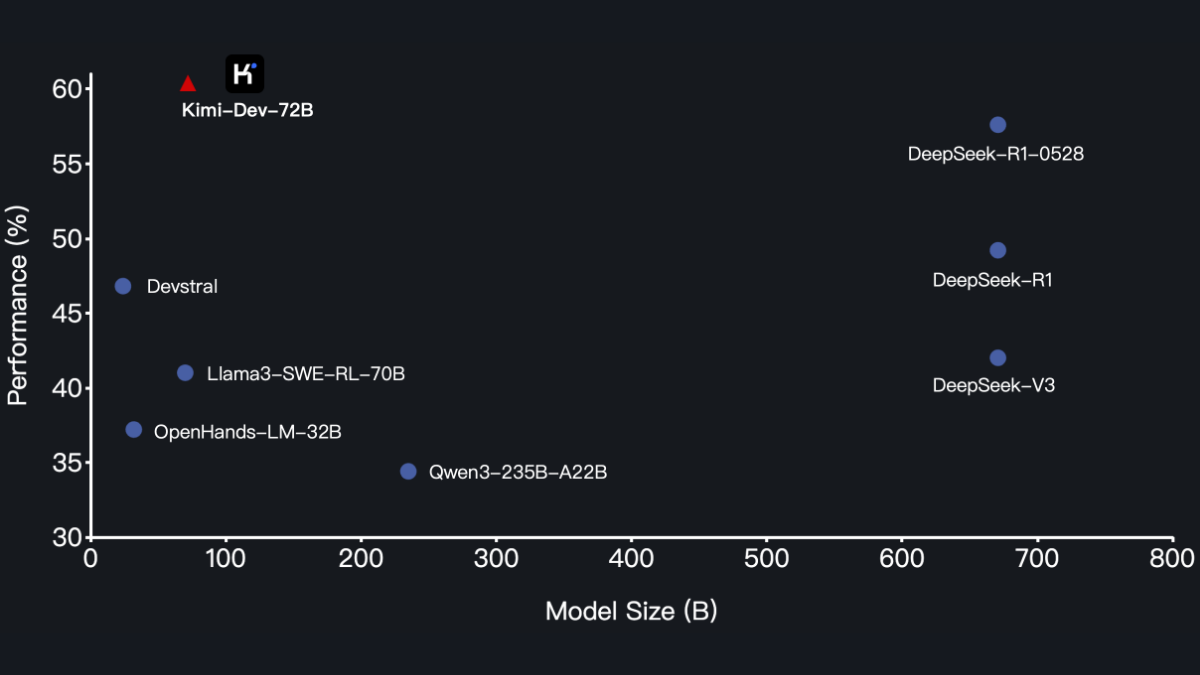

Kimi-Dev is an open source code model from Moonshot AI, designed for software engineering tasks, with 72B parameters. It has two main capabilities: BugFixer, which automatically locates and fixes code errors, and TestWriter, which generates unit tests for existing code to help safeguard code quality. Trained with reinforcement learning and a self-play mechanism, Kimi-Dev scores 60.4% on the SWE-bench Verified dataset, surpassing other open source models to become the current open source SOTA. Kimi-Dev is used in programming education and open source project maintenance, helping beginners learn programming quickly and helping open source projects improve quality and stability.

Kimi-Dev's main functions

- Code fixing (BugFixer): Automatically detects bugs and errors in code, generates fixes, and quickly resolves issues that come up during development.

- Test code generation (TestWriter): Automatically generates unit test code for existing code to check that it behaves correctly and stays stable.

- Development process automation: Uses reinforcement learning and a self-play mechanism to coordinate the code fixing and test writing capabilities and improve overall development efficiency.

- Development tools integration: Future plans include seamless integration with mainstream IDEs, version control systems, and CI/CD pipelines, so that the model fits directly into the development workflow.
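To make the BugFixer and TestWriter roles concrete, here is a minimal hand-written sketch. It is not model output: the functions `add_buggy`, `add_fixed`, `make_test_case`, and `passes` are hypothetical names chosen for illustration. It shows the kind of defect a fixer targets and how a generated unit test can tell the broken version from the repaired one.

```python
import unittest


def add_buggy(a, b):
    # The kind of defect a BugFixer-style model is asked to repair
    return a - b


def add_fixed(a, b):
    # The repaired version
    return a + b


def make_test_case(func):
    """Build a small test case, similar in spirit to what a TestWriter produces."""

    class AddTest(unittest.TestCase):
        def test_positive_numbers(self):
            self.assertEqual(func(2, 3), 5)

        def test_zeros(self):
            self.assertEqual(func(0, 0), 0)

    return AddTest


def passes(func):
    """Run the test case against func and report whether every test passed."""
    suite = unittest.defaultTestLoader.loadTestsFromTestCase(make_test_case(func))
    result = unittest.TestResult()
    suite.run(result)
    return result.wasSuccessful()


print("buggy passes:", passes(add_buggy))  # False: 2 - 3 != 5
print("fixed passes:", passes(add_fixed))  # True
```

The two capabilities complement each other: a test that fails before a fix and passes after it is evidence that the fix addresses the reported problem.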

Kimi-Dev's performance

- On the SWE-bench Verified dataset:

  - Compared with open source models: Kimi-Dev scores 60.4%, outperforming all other open source models and becoming the SOTA (state of the art) among current open source models.

  - Compared with closed-source models: Kimi-Dev comes close to, and in some respects surpasses, some closed-source models, which makes it strongly competitive.
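To put the percentage in concrete terms, the short calculation below converts the reported score into a task count. It assumes the published size of the SWE-bench Verified subset, 500 tasks, a figure that does not appear in the text above.

```python
# Assumption: SWE-bench Verified contains 500 tasks (not stated in this article)
SWE_BENCH_VERIFIED_TASKS = 500
RESOLVE_RATE = 0.604  # the 60.4% score reported above

resolved = round(SWE_BENCH_VERIFIED_TASKS * RESOLVE_RATE)
points_per_task = 100 / SWE_BENCH_VERIFIED_TASKS

print(f"{resolved} of {SWE_BENCH_VERIFIED_TASKS} tasks resolved")
print(f"each task is worth {points_per_task} percentage points")
```

Under that assumption, 60.4% corresponds to 302 resolved tasks, and each additional task moves the score by 0.2 percentage points.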

Kimi-Dev's project links

- Project website: https://moonshotai.github.io/Kimi-Dev/

- GitHub repository: https://github.com/MoonshotAI/Kimi-Dev

- Hugging Face model page: https://huggingface.co/moonshotai/Kimi-Dev-72B

How to use Kimi-Dev

- Download the model weights and code:

  - Download the model's code and associated scripts from the GitHub repository.

  - Download the model weights from the Hugging Face model page.
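The two download steps above can be scripted. The sketch below assumes `git` is available on the PATH and that the `huggingface_hub` package is installed. The helper names and default directory names are hypothetical. Nothing is downloaded until `download_all()` is called.

```python
import subprocess

GITHUB_REPO = "https://github.com/MoonshotAI/Kimi-Dev"
HF_REPO_ID = "moonshotai/Kimi-Dev-72B"


def clone_command(dest="Kimi-Dev"):
    """Build the git command that fetches the code and scripts."""
    return ["git", "clone", GITHUB_REPO, dest]


def download_all(code_dir="Kimi-Dev", weights_dir="Kimi-Dev-72B"):
    """Clone the code, then download the weights; returns the weights path."""
    # Imported lazily so the helpers above work without huggingface_hub installed
    from huggingface_hub import snapshot_download

    subprocess.run(clone_command(code_dir), check=True)
    return snapshot_download(repo_id=HF_REPO_ID, local_dir=weights_dir)


# Show the clone command without running it
print(" ".join(clone_command()))
```

The weights of a 72B-parameter model are large, so check the available disk space and bandwidth before calling `download_all()`.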

- Configure the environment: Make sure Python is installed on your system (version 3.8 or higher is recommended). Optionally, set up a virtual environment:

      python -m venv kimi-dev-env
      source kimi-dev-env/bin/activate   # Linux/Mac
      kimi-dev-env\Scripts\activate      # Windows

- Install dependencies: The Kimi-Dev code repository provides a requirements.txt file. Install the dependencies in your local environment with the following command:

      pip install -r requirements.txt

- Install a deep learning framework: Install the framework the model requires. The examples in this article pass return_tensors="pt", which produces PyTorch tensors.
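Before going further, the two environment conditions mentioned in the setup steps (Python 3.8 or higher, and optionally an active virtual environment) can be checked from Python itself. This is a small sketch that uses only the standard library. The function names are hypothetical.

```python
import sys

MIN_PYTHON = (3, 8)  # recommended minimum from the setup steps


def python_ok(version=sys.version_info, minimum=MIN_PYTHON):
    """Return True if the (major, minor) version meets the minimum."""
    return tuple(version[:2]) >= minimum


def in_virtualenv():
    """Return True when running inside a venv-style virtual environment."""
    return sys.prefix != sys.base_prefix


print("Python version OK:", python_ok())
print("Inside a virtual environment:", in_virtualenv())
```

Running the check before `pip install` makes it less likely that the dependencies end up in the system-wide Python by accident.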

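- Load the model: Load the model weights with the Hugging Face transformers library. Kimi-Dev-72B is a decoder-only (causal) language model, so it is loaded with AutoModelForCausalLM rather than a sequence-to-sequence class. Example:

      from transformers import AutoModelForCausalLM, AutoTokenizer

      model_name = "moonshotai/Kimi-Dev-72B"
      tokenizer = AutoTokenizer.from_pretrained(model_name)
      # torch_dtype="auto" keeps the checkpoint's dtype; device_map="auto" (requires accelerate) spreads the weights across available devices
      model = AutoModelForCausalLM.from_pretrained(model_name, torch_dtype="auto", device_map="auto")

- Use the model's functions: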

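  - Code fixing (BugFixer): Pass the problematic code snippet to the model, and the model generates the repaired code. Example code:

        buggy_code = "def add(a, b): return a - b"  # buggy code: subtracts instead of adding
        # The instruction wording is an illustrative assumption, not an official prompt format
        prompt = "Fix the bug in the following code:\n" + buggy_code
        inputs = tokenizer(prompt, return_tensors="pt").to(model.device)
        outputs = model.generate(**inputs, max_new_tokens=256)
        # A causal LM echoes the prompt, so decode only the newly generated tokens
        new_tokens = outputs[0][inputs["input_ids"].shape[1]:]
        fixed_code = tokenizer.decode(new_tokens, skip_special_tokens=True)
        print("Fixed Code:", fixed_code)

  - Test code generation (TestWriter): Pass the code of the function that needs tests to the model, and the model generates the corresponding unit test code.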

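        code_to_test = "def add(a, b): return a + b"
        # The instruction wording is an illustrative assumption, not an official prompt format
        prompt = "Write unit tests for the following code:\n" + code_to_test
        inputs = tokenizer(prompt, return_tensors="pt").to(model.device)
        outputs = model.generate(**inputs, max_new_tokens=256)
        # Decode only the newly generated tokens, not the echoed prompt
        new_tokens = outputs[0][inputs["input_ids"].shape[1]:]
        test_code = tokenizer.decode(new_tokens, skip_special_tokens=True)
        print("Test Code:", test_code)

Kimi-Dev's core strengths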

- Strong performance: With 72B parameters, Kimi-Dev scores 60.4% on the SWE-bench Verified dataset, outperforming other open source models as the current open source SOTA.

- Efficient code fixing: Trained with reinforcement learning and a self-play mechanism, Kimi-Dev can automatically locate and fix code errors, significantly improving repair efficiency.

- Test code generation: Generates high-quality unit test code for existing code, improving test coverage and reducing the effort developers spend writing tests.

- Open source and flexible: Released as open source under the MIT License, so users are free to use, modify, and distribute it, which suits a variety of development needs.

- Development tools integration: Future plans include seamless integration with mainstream IDEs, version control systems, and CI/CD pipelines to improve development efficiency.
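The 72B parameter count listed above also has a practical consequence: the memory needed just to hold the weights. The estimate below is a rough sketch that treats the count as exactly 72 billion and uses standard bytes-per-parameter figures for common numeric formats. It ignores activations, the KV cache, and framework overhead, so real requirements are higher.

```python
PARAMS = 72e9  # assumption: "72B" taken as exactly 72 billion parameters

# Storage cost per parameter for common numeric formats
BYTES_PER_PARAM = {"float32": 4, "bfloat16": 2, "int8": 1, "int4": 0.5}


def weight_memory_gb(params, bytes_per_param):
    """Approximate memory for the weights alone, in gigabytes (1 GB = 1e9 bytes)."""
    return params * bytes_per_param / 1e9


for dtype, size in BYTES_PER_PARAM.items():
    print(f"{dtype:>8}: {weight_memory_gb(PARAMS, size):.0f} GB")
```

At 16-bit precision the weights alone come to roughly 144 GB, which is why a model of this size is usually sharded across several accelerators or quantized.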

Who Kimi-Dev is for

- Software development engineers: Need to quickly fix code bugs, generate test code, and improve development efficiency.

- Programming beginners: Use generated sample code and test code to support learning and master programming skills more quickly.

- Open source project maintainers: Quickly fix bugs, optimize code, and improve project quality and stability.

- Enterprise development teams: Use it in enterprise-level development projects to reduce development costs and improve overall development efficiency.

- Technical researchers: Carry out research and extensions based on its open source code and models to explore new technical directions.

- Educators: Use it in programming instruction to help students better understand and practice code development and testing.

© Copyright notice

This article is copyrighted by AI Sharing Circle. Please do not reproduce it without permission.
