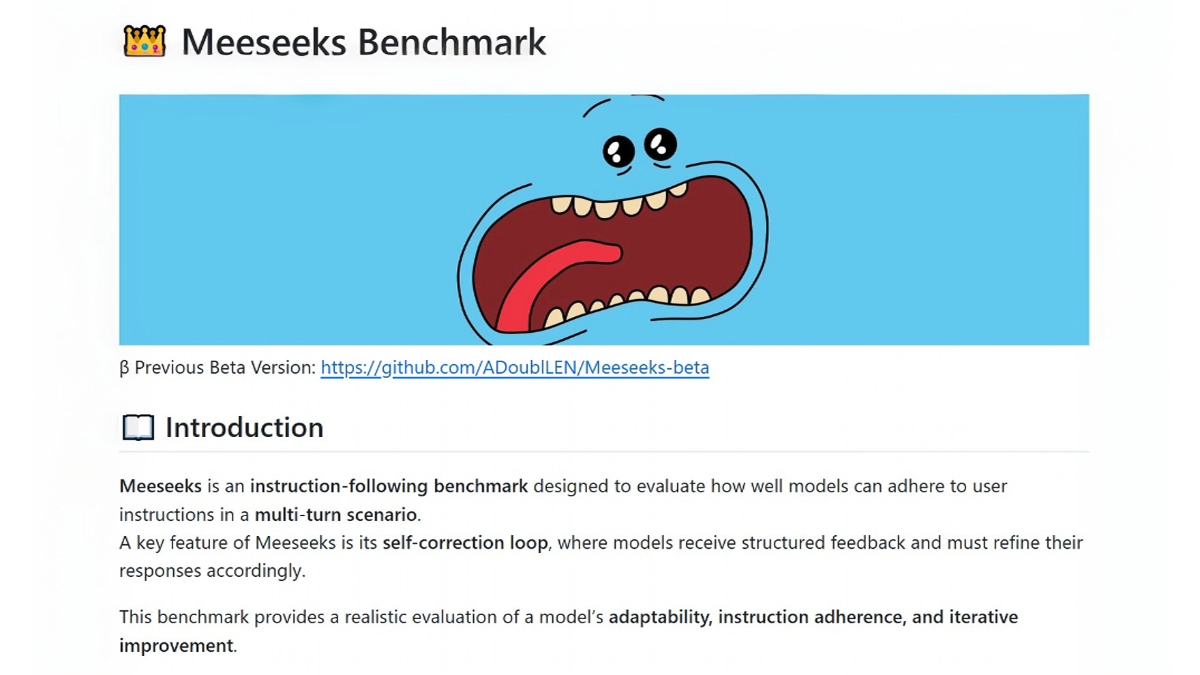

Meeseeks - an open-source evaluation set for assessing how well models follow instructions

What is Meeseeks?

Meeseeks is an open-source evaluation set for large models, released by the Meituan M17 team to assess how well a model follows instructions. It uses a three-tier evaluation framework that checks, from the macro level down to the micro level, whether the model's answer strictly follows the user's instructions; it does not assess whether the answer is factually correct. Meeseeks also introduces a multi-round error correction mode, in which the model receives feedback on its answer and can then revise it, so that its self-correction ability can be measured. Its test data is deliberately challenging, which widens the performance gap between models and gives model developers a concrete direction for optimization.

Features of Meeseeks

- Instruction-following assessment: Meeseeks uses a three-tier evaluation framework to measure how well a model follows user instructions, from the overall task intent down to fine-grained rules, checking that the generated answers strictly comply with the instructions.

- Multi-round error correction mode: If the model does not fully satisfy the instructions, Meeseeks automatically generates feedback that points out the problems and asks the model to correct them, which measures its ability to self-correct.

- Objective evaluation criteria: Every evaluation item is an objectively decidable criterion, which keeps the results consistent and accurate.

- Challenging data design: The test cases are demanding enough to widen the gap between different models, giving developers a clear direction for optimization.
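To make "objectively determinable criteria" concrete, here is a minimal sketch of rule-based checks grouped into tiers and scored per tier. It is not the Meeseeks implementation or data format: the `Rule` type, the tier names `"macro"` and `"micro"`, the helper checks, and the example rules are all hypothetical, chosen only to illustrate that each criterion is a yes/no predicate anyone can re-run and get the same answer.

```python
from __future__ import annotations

import re
from dataclasses import dataclass
from typing import Callable


@dataclass
class Rule:
    """One objectively decidable requirement (hypothetical structure)."""
    tier: str                    # illustrative tier label, e.g. "macro" or "micro"
    description: str
    check: Callable[[str], bool]


def max_words(n: int) -> Callable[[str], bool]:
    """Pass if the response has at most n whitespace-separated words."""
    return lambda text: len(text.split()) <= n


def must_include(keyword: str) -> Callable[[str], bool]:
    """Pass if the response contains the keyword (case-sensitive)."""
    return lambda text: keyword in text


def no_digits() -> Callable[[str], bool]:
    """Pass if the response contains no digits."""
    return lambda text: re.search(r"\d", text) is None


def evaluate(response: str, rules: list[Rule]) -> dict[str, float]:
    """Return the fraction of rules passed within each tier."""
    results: dict[str, list[bool]] = {}
    for rule in rules:
        results.setdefault(rule.tier, []).append(rule.check(response))
    return {tier: sum(flags) / len(flags) for tier, flags in results.items()}


# Hypothetical example rules for a single prompt.
RULES = [
    Rule("macro", "mentions the product name", must_include("Meeseeks")),
    Rule("micro", "at most 12 words", max_words(12)),
    Rule("micro", "contains no digits", no_digits()),
]

if __name__ == "__main__":
    print(evaluate("Meeseeks checks whether a model follows instructions.", RULES))
    print(evaluate("Meeseeks has 3 tiers.", RULES))
```

Because every check is a deterministic predicate, two evaluators running the same rules on the same answer always agree, which is the consistency property the criteria above aim for.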

Meeseeks' core strengths

- Innovative multi-round feedback mechanism: Meeseeks' multi-round error correction mode evaluates both the model's initial answer and its ability to correct itself after repeated feedback, providing a basis for iterative model optimization.

- Objective and extensible evaluation criteria: The criteria are objective and clearly defined, and they are easy to extend and customize to suit different scenarios and evaluation needs.

- Built on real business data: Because the set is constructed from real business data, its results are closely tied to practical applications and give a reliable indication of how a model will perform in real-world scenarios.

- High difficulty and strong differentiation: The complex, challenging test data effectively separates models by their instruction-following ability, supporting both model selection and model optimization.
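The multi-round error correction idea can be sketched as a simple loop: generate an answer, run the objective checks, and if any fail, send feedback listing the unmet requirements and ask for a revision. This is an illustrative sketch only. The `model` callable, the feedback wording, the default `max_rounds=3`, and the stub model are assumptions, not Meeseeks' actual prompts, round count, or API.

```python
from typing import Callable, List, Tuple

# A rule pairs a human-readable description with an objective predicate.
Rule = Tuple[str, Callable[[str], bool]]


def failed_rules(response: str, rules: List[Rule]) -> List[str]:
    """Descriptions of every rule the response violates."""
    return [desc for desc, check in rules if not check(response)]


def multi_round_eval(
    model: Callable[[List[str]], str],
    instruction: str,
    rules: List[Rule],
    max_rounds: int = 3,  # assumed value, for illustration only
) -> List[bool]:
    """Run up to max_rounds attempts, feeding back violated rules after each.

    Returns one pass/fail flag per round actually run; evaluation stops
    at the first round in which every rule passes.
    """
    conversation = [instruction]
    history: List[bool] = []
    for _ in range(max_rounds):
        response = model(conversation)
        failures = failed_rules(response, rules)
        history.append(not failures)
        if not failures:
            break
        # Hypothetical feedback format: name the unmet requirements and ask for a fix.
        feedback = "Your answer did not satisfy: " + "; ".join(failures) + ". Please revise it."
        conversation += [response, feedback]
    return history


def stub_model(conversation: List[str]) -> str:
    """Hypothetical model: too long on the first try, compliant after feedback."""
    if len(conversation) == 1:
        return "A long answer with far too many words in it"
    return "Short answer"


RULES: List[Rule] = [("at most 3 words", lambda t: len(t.split()) <= 3)]

if __name__ == "__main__":
    print(multi_round_eval(stub_model, "Answer in at most 3 words.", RULES))
```

Recording a pass/fail flag per round, rather than only the final result, lets an evaluator report both first-attempt accuracy and how quickly a model recovers once it is told what it got wrong.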

What is Meeseeks' official website?

- GitHub repository: https://github.com/ADoublLEN/Meeseeks

- Hugging Face dataset: https://huggingface.co/datasets/meituan/Meeseeks

Who Meeseeks is for

- Artificial intelligence researchers: Meeseeks offers a standardized benchmark that helps researchers assess and compare the instruction-following abilities of different large models and informs model development and optimization.

- Model developers: The multi-round error correction mode and the fine-grained evaluation framework help developers pinpoint a model's weaknesses and optimize it in a targeted way.

- Corporate technical teams: Enterprise teams that use large models to generate content or deliver services can check whether a model meets their business requirements and choose a suitable model for deployment.

- Educators: Educators can assess whether model-generated teaching material meets instructional requirements, supporting the application of educational technology.

- Content creators: Creators who use large models to produce content (e.g., copy, reviews, stories) can assess how well a model follows their requirements, improving the efficiency and quality of their work.

© Copyright notes

Article copyright belongs to AI Sharing Circle. Please do not reproduce without permission.
