What is the MCP Open Protocol?

MCP (Model Context Protocol) is an open standard we developed at Anthropic to address a core challenge of Large Language Model (LLM) applications - connecting them to your data.

There is no need to build a custom integration for each data source. MCP provides one protocol to connect them all:

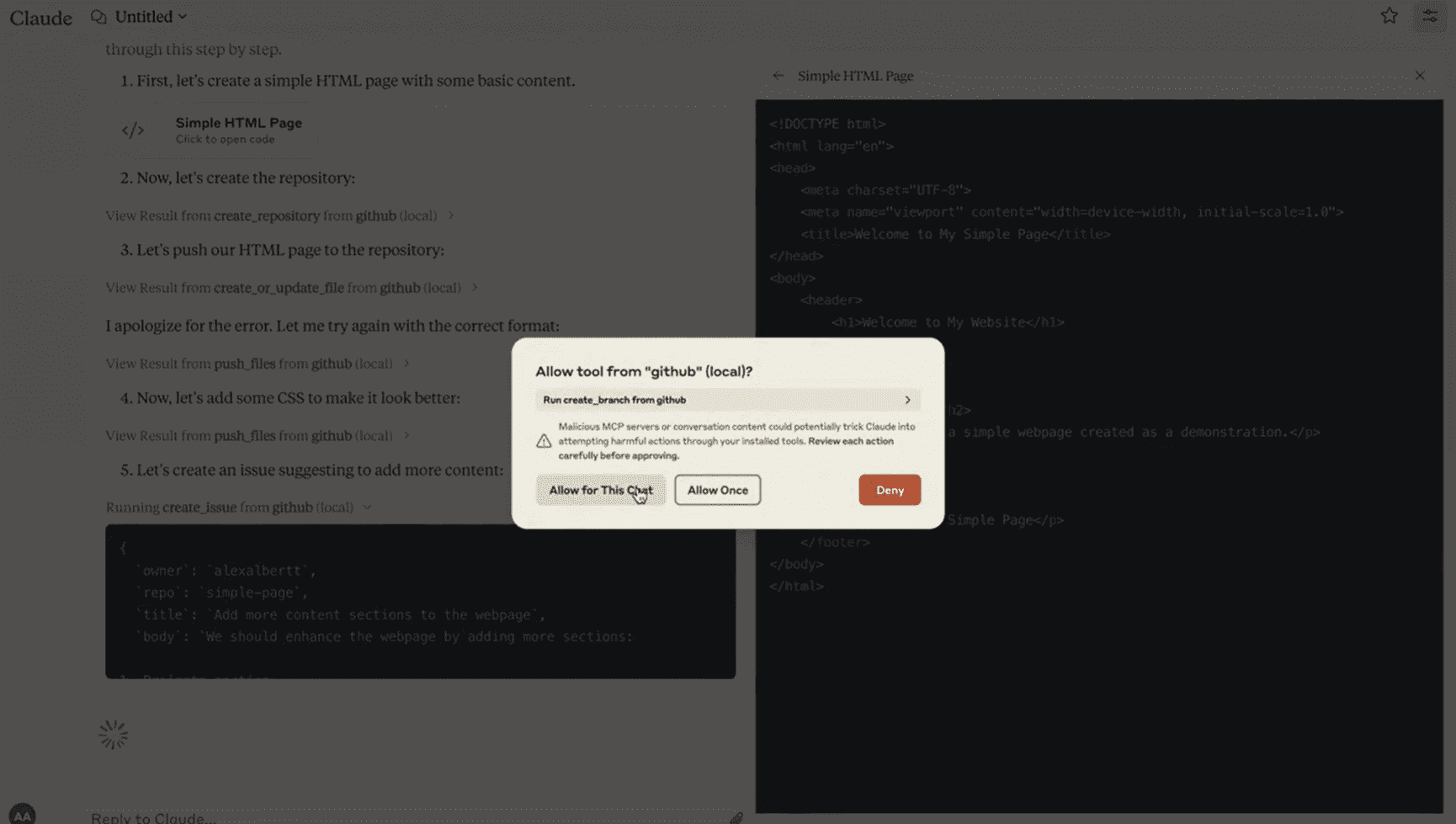

Here is a quick demo using the Claude desktop application, in which we have configured MCP:

Watch as Claude connects directly to GitHub, creates a new repo, and opens a PR through a simple MCP integration.

Once MCP was set up in the Claude desktop application, building this integration took less than an hour.

Getting LLMs to interact with external systems is often not easy.

Currently, every developer needs to write custom code to connect their LLM application to a data source. This is a tedious and repetitive task.

MCP solves this problem through a standardized protocol for sharing resources, tools, and prompts.

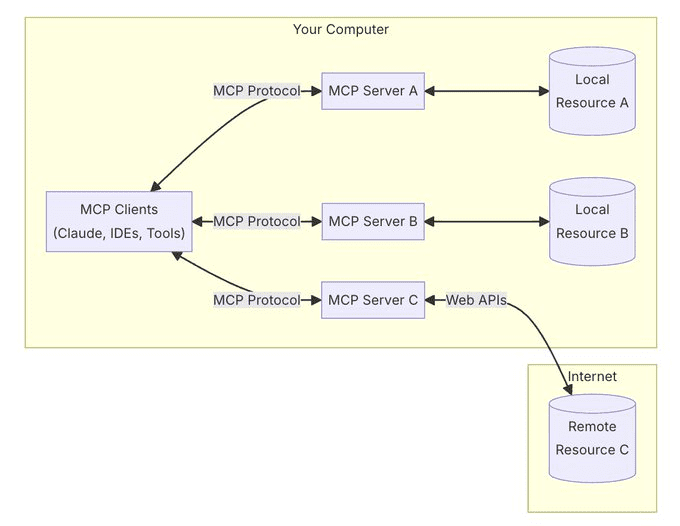

At its core, MCP follows a client-server architecture in which multiple servers can connect to any compatible client.

Clients are applications such as the Claude desktop application, IDEs, or AI tools. Servers are lightweight adapters that expose data sources.

One of the powerful things about MCP is that it can handle both local resources (your databases, files, services) and remote resources (APIs like Slack or GitHub) through the same protocol.
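To make the architecture concrete, here is a minimal sketch in plain Python. It does not use any MCP SDK: `ToyServer`, `ToyClient`, and the example URIs are hypothetical names invented for illustration. The point it shows is that the client talks to every server through the same two calls, whether the server wraps something local or something remote.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class ToyServer:
    """Hypothetical stand-in for a server: a named adapter over one data source."""
    name: str
    # Maps a resource URI to a function that returns its text content.
    resources: Dict[str, Callable[[], str]] = field(default_factory=dict)

    def list_resources(self) -> List[str]:
        return sorted(self.resources)

    def read_resource(self, uri: str) -> str:
        return self.resources[uri]()


class ToyClient:
    """Hypothetical stand-in for a client such as a desktop app or an IDE."""

    def __init__(self) -> None:
        self.servers: Dict[str, ToyServer] = {}

    def connect(self, server: ToyServer) -> None:
        self.servers[server.name] = server

    def list_all_resources(self) -> List[str]:
        # The same call is used for every connected server.
        return [uri for s in self.servers.values() for uri in s.list_resources()]

    def read(self, uri: str) -> str:
        for server in self.servers.values():
            if uri in server.resources:
                return server.read_resource(uri)
        raise KeyError(uri)


# One server wraps "local" notes; the other simulates a remote issue tracker.
local = ToyServer("notes", {"file:///notes/todo.txt": lambda: "buy milk"})
remote = ToyServer("issues", {"issues://demo/1": lambda: "Fix login bug"})

client = ToyClient()
client.connect(local)
client.connect(remote)
print(client.list_all_resources())
print(client.read("issues://demo/1"))
```

Adding a third data source in this sketch means writing one more `ToyServer`; the client code does not change, which is the property the protocol is designed to provide.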

MCP servers do more than share data. In addition to resources (files, documents, data), they can also expose:

- Tools (API integrations, actions)

- Prompts (templated interactions)
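The three kinds of capability can be sketched as a small request dispatcher. MCP messages are JSON-RPC 2.0, and the method names below (`resources/read`, `tools/call`, `prompts/get`) are modeled on the specification, but the result payloads are deliberately simplified and the registries (`RESOURCES`, `TOOLS`, `PROMPTS`) and the `handle` and `call` helpers are hypothetical. Treat this as an illustration of the idea, not as a conforming server.

```python
import json
from typing import Any, Callable, Dict

# Toy registries for the three kinds of things a server can expose.
RESOURCES: Dict[str, str] = {"memo://welcome": "Hello from the toy server"}
TOOLS: Dict[str, Callable[..., Any]] = {"add": lambda a, b: a + b}
PROMPTS: Dict[str, str] = {"summarize": "Summarize the following text:\n{text}"}


def _error(req_id: Any, code: int, message: str) -> str:
    return json.dumps({"jsonrpc": "2.0", "id": req_id,
                       "error": {"code": code, "message": message}})


def handle(raw: str) -> str:
    """Dispatch one JSON-RPC 2.0 style request and return the JSON response."""
    req = json.loads(raw)
    req_id, method, params = req.get("id"), req["method"], req.get("params", {})
    args = params.get("arguments", {})
    try:
        if method == "resources/read":
            result = {"text": RESOURCES[params["uri"]]}
        elif method == "tools/call":
            result = {"value": TOOLS[params["name"]](**args)}
        elif method == "prompts/get":
            result = {"text": PROMPTS[params["name"]].format(**args)}
        else:
            return _error(req_id, -32601, "Method not found")
    except KeyError as exc:
        # Unknown URI, tool, prompt, or template argument.
        return _error(req_id, -32602, f"Invalid params: {exc}")
    return json.dumps({"jsonrpc": "2.0", "id": req_id, "result": result})


def call(method: str, **params: Any) -> Dict[str, Any]:
    """Play the client role: build a request, send it, decode the response."""
    request = {"jsonrpc": "2.0", "id": 1, "method": method, "params": params}
    return json.loads(handle(json.dumps(request)))


print(call("resources/read", uri="memo://welcome"))
print(call("tools/call", name="add", arguments={"a": 2, "b": 3}))
print(call("prompts/get", name="summarize", arguments={"text": "MCP"}))
```

A resource is read, a tool is invoked with arguments, and a prompt is a template the client fills in; all three travel over the same message format.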

Security is integrated into the protocol - servers control their own resources, there is no need to share API keys with LLM providers, and system boundaries are clear.
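One way to picture this boundary is a server that holds its own credential and decides which resources it exposes. The sketch below is an assumption-laden illustration: `ToyIssueServer`, the `TOY_API_KEY` variable, and the example URI are all hypothetical, and the upstream API call is simulated. What it demonstrates is that the key stays inside the server process and never appears in what is returned to the client or the model.

```python
import os
from typing import Dict

# Assumption: the hypothetical server reads its credential from its own environment.
os.environ.setdefault("TOY_API_KEY", "secret-token-123")


class ToyIssueServer:
    """Hypothetical server that keeps its API key private."""

    def __init__(self) -> None:
        self._api_key = os.environ["TOY_API_KEY"]
        # Only these URIs are exposed; everything else is refused.
        self._exposed = {"issues://demo/1": "Fix login bug"}

    def _fetch(self, uri: str) -> str:
        # A real adapter would send self._api_key to the upstream API here.
        # This sketch returns canned data instead of making a network call.
        return self._exposed[uri]

    def read_resource(self, uri: str) -> Dict[str, str]:
        if uri not in self._exposed:
            raise PermissionError(f"resource not exposed: {uri}")
        # The response carries content only, never the credential.
        return {"uri": uri, "text": self._fetch(uri)}


server = ToyIssueServer()
response = server.read_resource("issues://demo/1")
print(response)
```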

Currently, MCP only supports local deployment - the server must be running on your machine.

However, we are developing support for remote servers and enterprise-grade authentication so that teams can securely share their context sources across the organization.

We're building a world where AI can connect to any data source through a single elegant protocol - MCP is the universal translator.

Integrate MCP into your client once and connect to any data source at any time.

Get started with MCP in less than 5 minutes - we've built servers for GitHub, Slack, SQL databases, local files, search engines, and more.

Install the Claude desktop application and follow our step-by-step guide to connect your first server:

https://modelcontextprotocol.io/quickstart
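For orientation, connecting a server in the desktop application comes down to adding an entry to its configuration file. The sketch below builds such an entry in Python and prints it as JSON. The `mcpServers` key with `command` and `args` reflects the configuration format as commonly documented, but the `server_entry` helper is hypothetical and the server package name and directory path are placeholders, so treat the guide linked above as the authoritative source for exact names and file locations.

```python
import json
from typing import Any, Dict


def server_entry(command: str, *args: str) -> Dict[str, Any]:
    """Hypothetical helper: describe how the app should launch one local server."""
    return {"command": command, "args": list(args)}


config = {
    "mcpServers": {
        # Placeholder entry: a filesystem server limited to one directory.
        "filesystem": server_entry(
            "npx", "-y", "@modelcontextprotocol/server-filesystem",
            "/path/to/allowed/dir",
        ),
    }
}

print(json.dumps(config, indent=2))
```

Each key under `mcpServers` names one server, and the app starts it locally with the given command, which matches the current local-only deployment model.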

Just as LSP did for IDEs, we are building MCP into an open standard for LLM integration.

Build your own server, contribute to the protocol, and together shape the future of AI integration:

https://github.com/modelcontextprotocol

© Copyright notes

Copyright of this article belongs to AI Sharing Circle. Please do not reproduce it without permission.
