Chatterbox-Turbo - An Open-Source Text-to-Speech Model from Resemble AI

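Chatterbox-Turbo is an open-source text-to-speech (TTS) model from Resemble AI, designed for efficient, low-latency speech synthesis. Built on a streamlined 350M-parameter architecture, it generates audio with single-step inference at very low latency, at 150...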

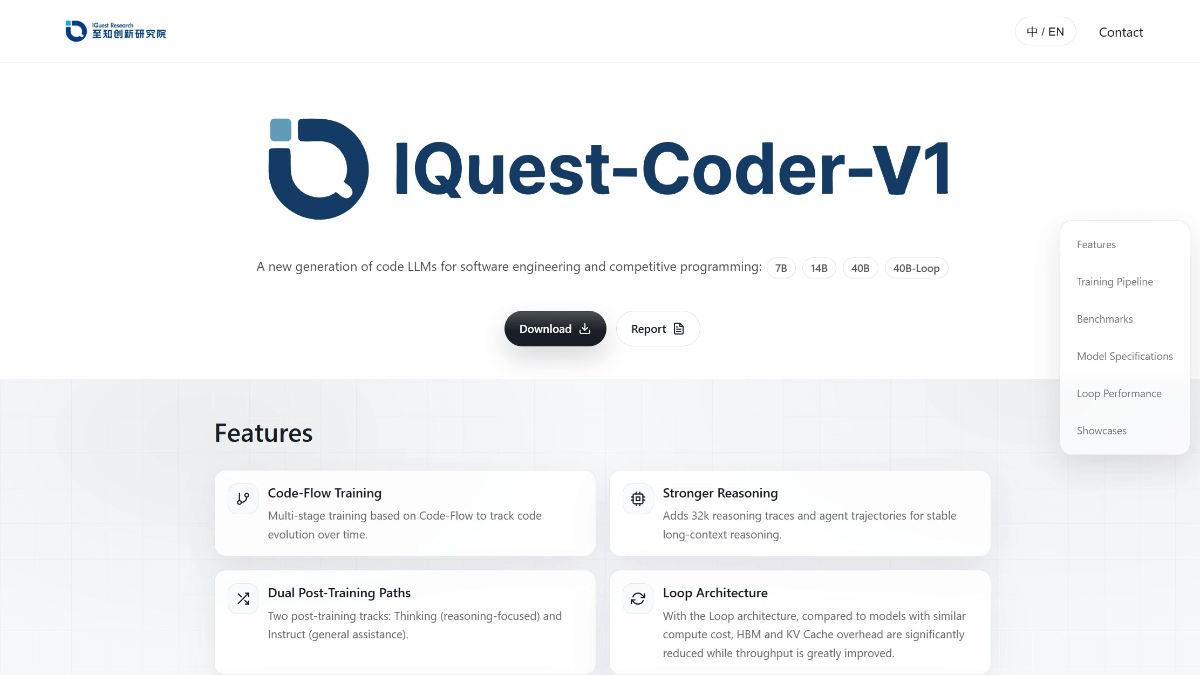

IQuest-Coder-V1 - An Open-Source Series of Code Large Language Models from Zhizhi Innovation Research Institute (至知创新研究院)

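IQuest-Coder-V1 is an open-source series of code large language models developed by Zhizhi Innovation Research Institute (至知创新研究院), a research institute under Ubiquant (九坤投资). It focuses on code intelligence and offers automatic programming, bug fixing, and code explanation. The models adopt a novel Code-Flow training paradigm, from codebase evolution...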

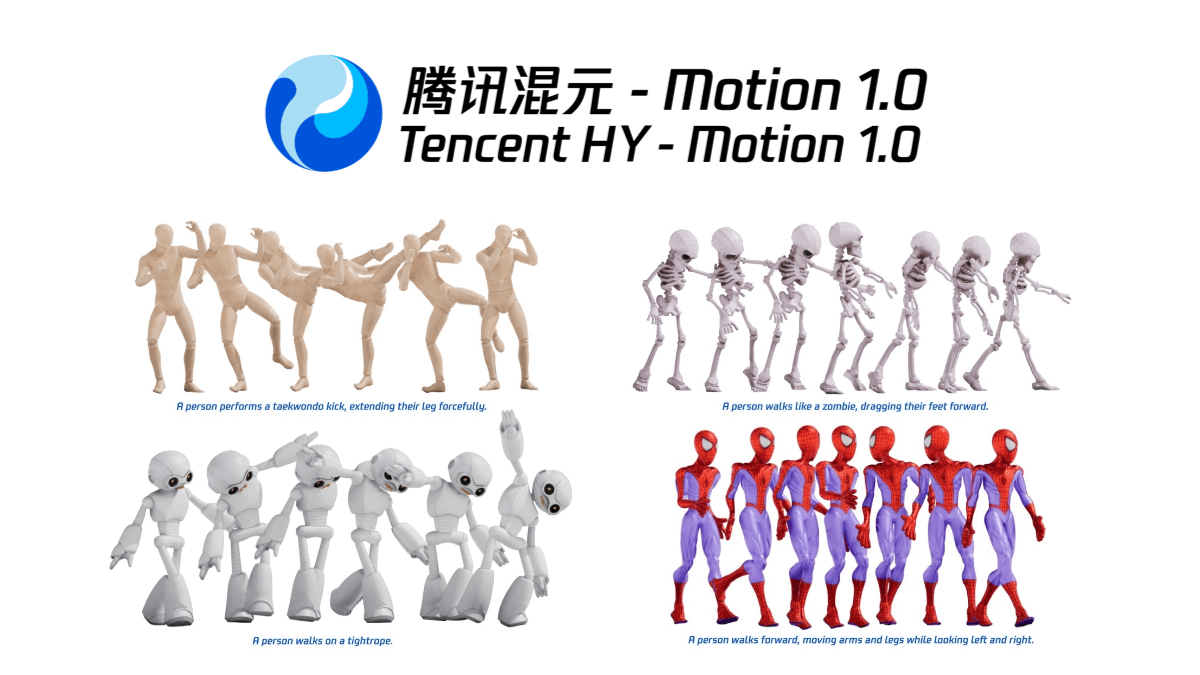

HY-Motion 1.0 - An Open-Source Text-to-3D-Motion Generation Model from the Tencent Hunyuan Team

Hunyuan Motion 1.0 (HY-Motion 1.0) is an open-source text-to-3D-motion generation model from the Tencent Hunyuan team. It uses a Diffusion Transformer architecture with 1 billion parameters and generates high-quality 3D character animation directly from natural-language descriptions.

Yume 1.5 - An Interactive World Generation Model Open-Sourced by Shanghai AI Lab and Fudan University

Yume 1.5 is an open-source interactive world generation model jointly developed by Shanghai Artificial Intelligence Laboratory, Fudan University, and Shanghai Innovation Research Institute. It is capable of real-time interactive rendering (12 FPS on a single GPU). It adopts joint temporal-spatial-channel modeling (TSCM), even if the context length increases...

AutoMV - A Free, Open-Source Music Video Generation System from M-A-P, BUPT, Nanjing University, and Others

AutoMV is an open-source music video generation system developed by the M-A-P team in collaboration with several universities. It automatically generates coherent music videos from complete songs without any training. It adopts a multi-agent collaboration model, with modules for music analysis, scriptwriting, directing, and quality control, and can accurately analyze the lyrics, beats, and...

Tencent-HY-MT1.5 - An Open-Source Translation Model Series from Tencent Hunyuan

Tencent-HY-MT1.5 is version 1.5 of Tencent Hunyuan's open-source translation model. It comes in two sizes, 1.8B and 7B, and supports translation among 33 languages plus 5 Chinese ethnic minority languages and dialects. The 1.8B model is specially optimized for mobile phones and other consumer-grade devices, requiring only 1 GB of memory to run on-device...

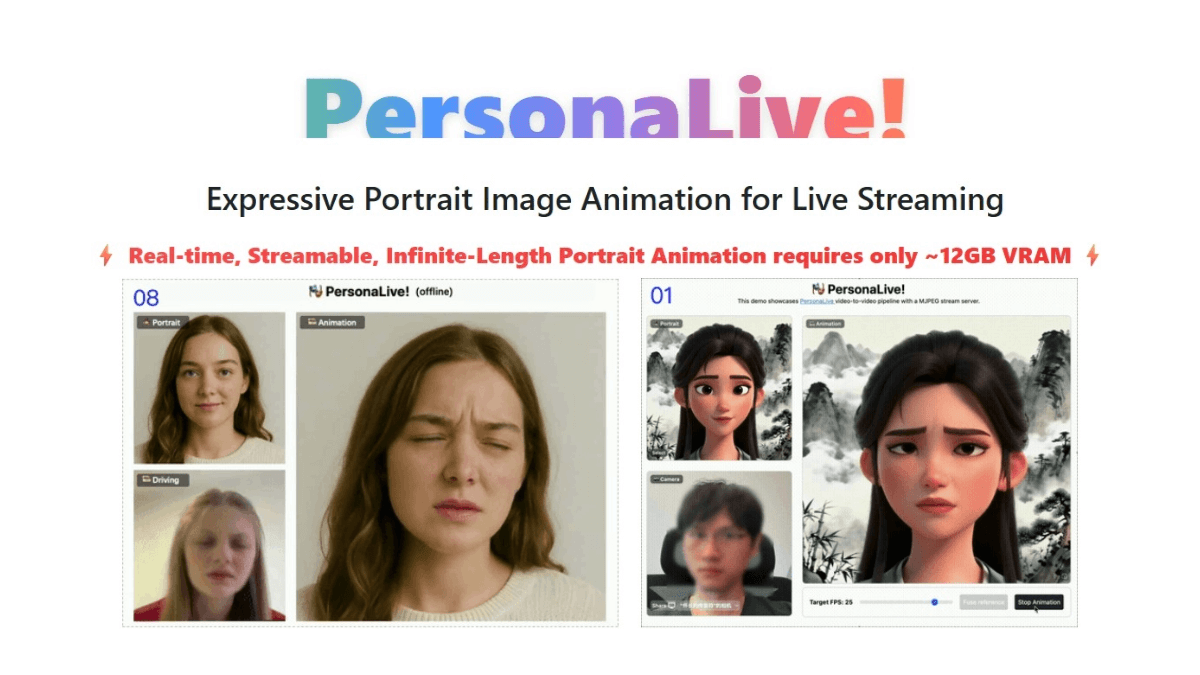

PersonaLive - An Open-Source Real-Time AI Portrait Animation Framework for Live Streaming from the University of Macau and Others

PersonaLive is an open-source real-time AI portrait animation framework for live streaming, jointly developed by the University of Macau, dzine.ai, and the GVC Lab at the University of the Greater Bay Area. It drives digital humans at low latency and high frame rates on ordinary consumer-grade graphics cards (12 GB of VRAM), and supports real-time through the camera...

Computer Use Preview - Google's Open-Source AI Browser Automation Tool

Computer Use Preview is Google's open-source AI browser automation tool based on the Gemini model. It carries out web page interactions from natural-language commands. It uses a "screenshot → analysis → execution" visual recognition process and supports Playwrigh...

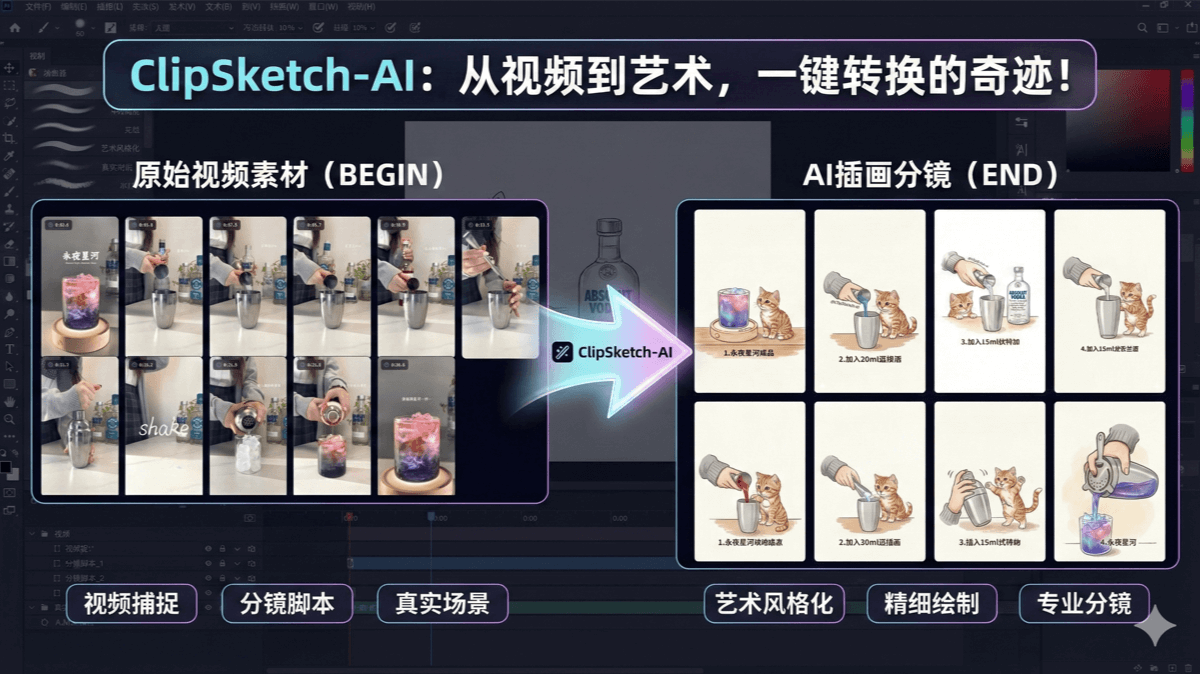

ClipSketch AI - An Open-Source AI Tool for Turning Videos into Hand-Drawn Storyboards, Supporting Bilibili and Xiaohongshu

ClipSketch AI is an open-source tool that turns videos into hand-drawn storyboards, designed for short-video creators. It converts videos from Bilibili, Xiaohongshu, and other platforms into hand-drawn-style storyboards with one click. It supports marking key frames, automatically generates storyboard panels and social media copy, and can incorporate user-defined characters.

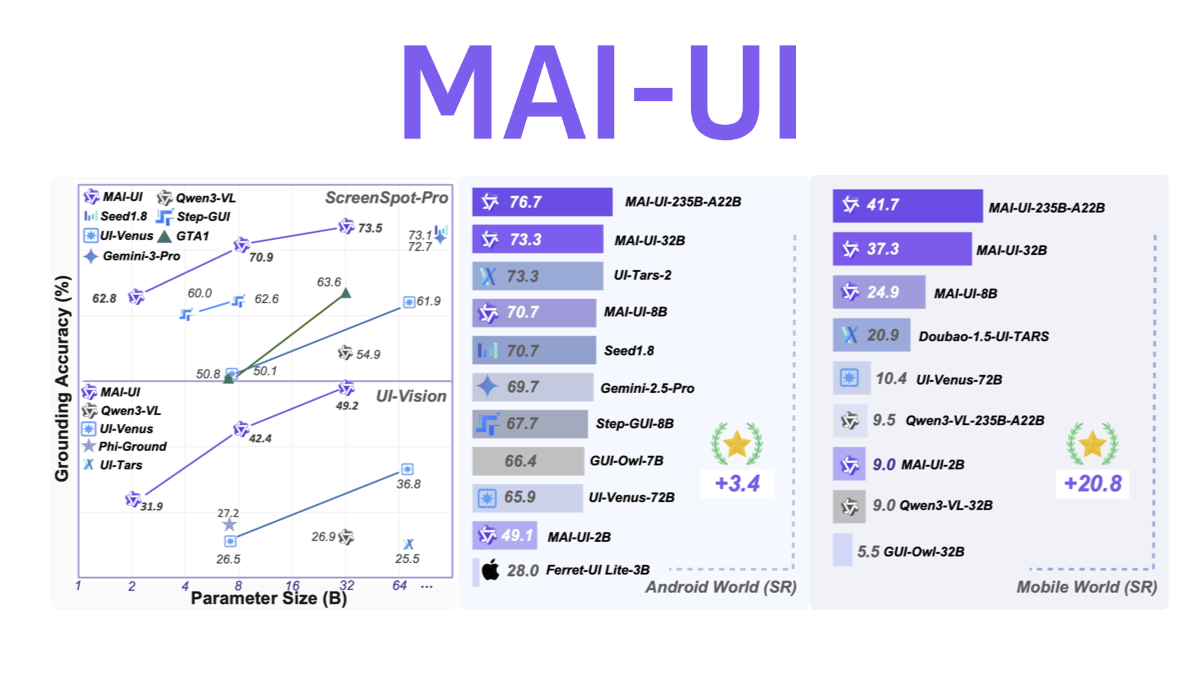

MAI-UI - An Open-Source General-Purpose GUI Agent Foundation Model from Alibaba Tongyi Lab

MAI-UI is an open-source general-purpose GUI agent foundation model from Alibaba Tongyi Lab, with four major capabilities: cross-application operation, fuzzy semantic understanding, proactive user interaction, and multi-step process coordination. It adopts a device-cloud collaborative architecture: a lightweight model resides on the device to handle everyday tasks, while complex tasks can call the cloud big...