cognee: an open-source framework for building RAG on knowledge graphs, with a study of its core prompts

Overview

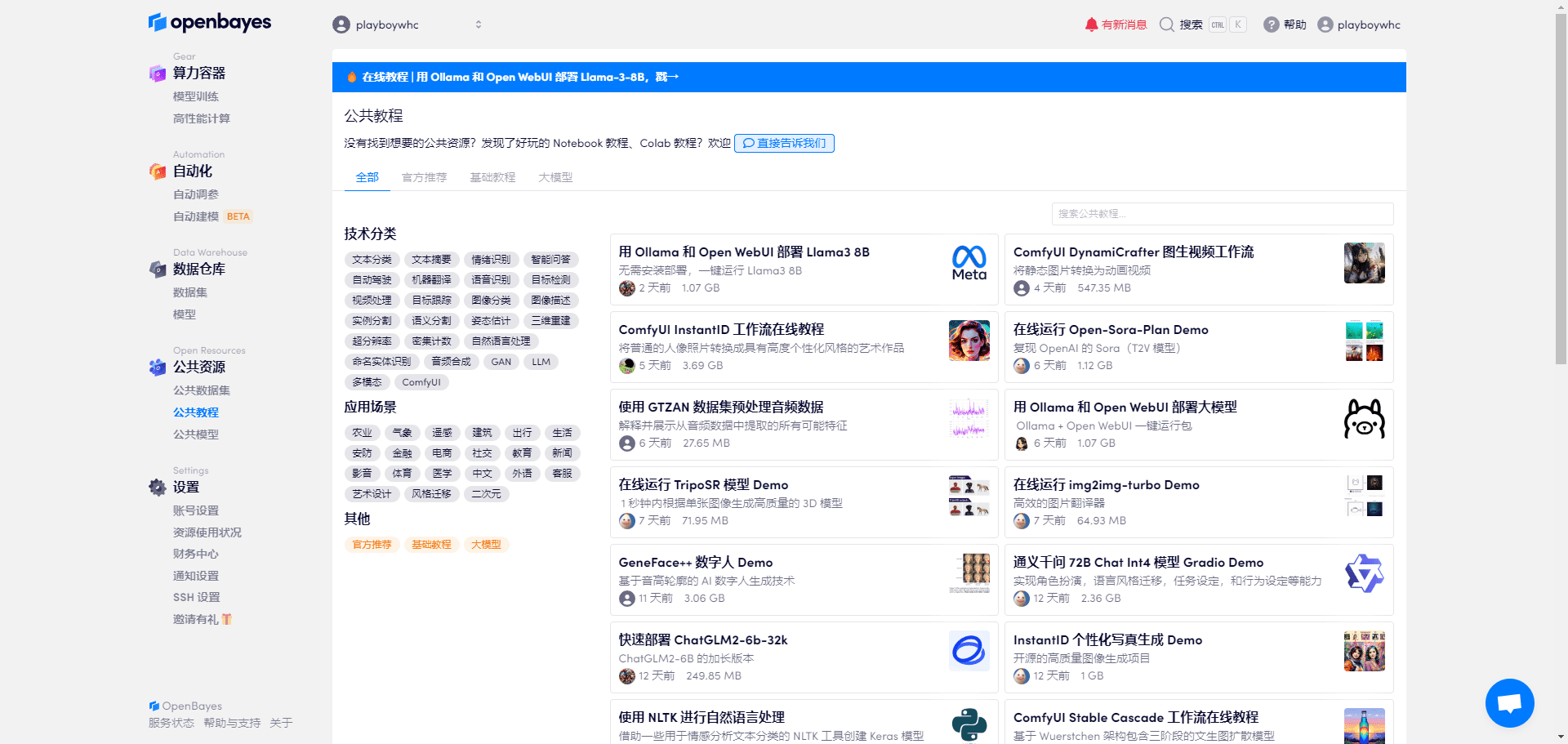

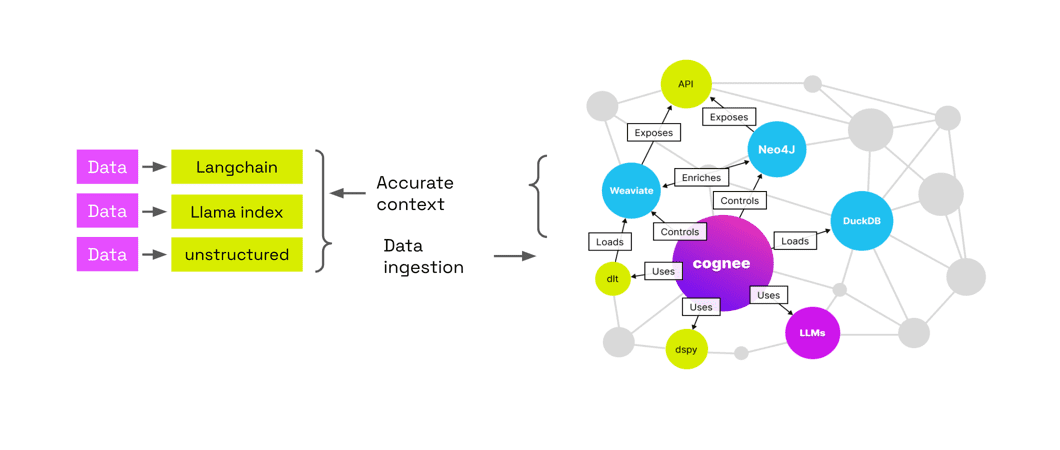

Cognee is a reliable data-layer solution designed for AI applications and AI agents. It loads and builds context for LLMs (large language models) so that AI solutions can be accurate and interpretable, using knowledge graphs and vector stores. The framework emphasizes cost savings, interpretability, and user-directed control, which makes it suitable for research and educational use. The official website offers introductory tutorials, conceptual overviews, learning materials, and related research information.

cognee's biggest strength is that you hand it data and it processes that data automatically: it builds a knowledge graph and reconnects the graphs of related topics. This helps you discover connections in the data and gives RAG a high degree of interpretability when working with LLMs.

1. Add data. cognee identifies and processes it automatically with an LLM, extracts it into a knowledge graph, and stores the data in a vector database.
2. The advantages are cost savings, interpretability (the data can be visualized as a graph), and controllability (it integrates into your code).
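The flow described above (add data, extract it into a knowledge graph, then follow the connections) can be illustrated with a toy sketch. It does not use cognee's API: the hardcoded `TRIPLES` list is a hypothetical stand-in for what an LLM extraction step might produce, and `build_graph` and `explain_path` are illustrative names. The point is only that a graph lets a retrieval step return the chain of facts behind an answer.

```python
from collections import defaultdict, deque

# Hypothetical output of an extraction step: (subject, relation, object) triples.
TRIPLES = [
    ("Ada Lovelace", "worked_with", "Charles Babbage"),
    ("Charles Babbage", "designed", "Analytical Engine"),
]


def build_graph(triples):
    """Index triples as an adjacency list: node -> [(relation, neighbor)]."""
    graph = defaultdict(list)
    for subject, relation, obj in triples:
        graph[subject].append((relation, obj))
    return graph


def explain_path(graph, start, goal):
    """Breadth-first search that returns the chain of triples from start to goal."""
    queue = deque([(start, [])])
    visited = {start}
    while queue:
        node, path = queue.popleft()
        if node == goal:
            return path
        for relation, neighbor in graph.get(node, []):
            if neighbor not in visited:
                visited.add(neighbor)
                queue.append((neighbor, path + [(node, relation, neighbor)]))
    return None  # no connection found


graph = build_graph(TRIPLES)
path = explain_path(graph, "Ada Lovelace", "Analytical Engine")
for subject, relation, obj in path:
    print(f"{subject} --{relation}--> {obj}")
```

Each printed line is one edge of the graph, so the answer comes with its own explanation. A real system would also store embeddings for fuzzy lookup of the starting node.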

Feature list

- ECL pipeline: Supports the extraction, cognition, and loading of data, as well as the interconnection and retrieval of historical data.

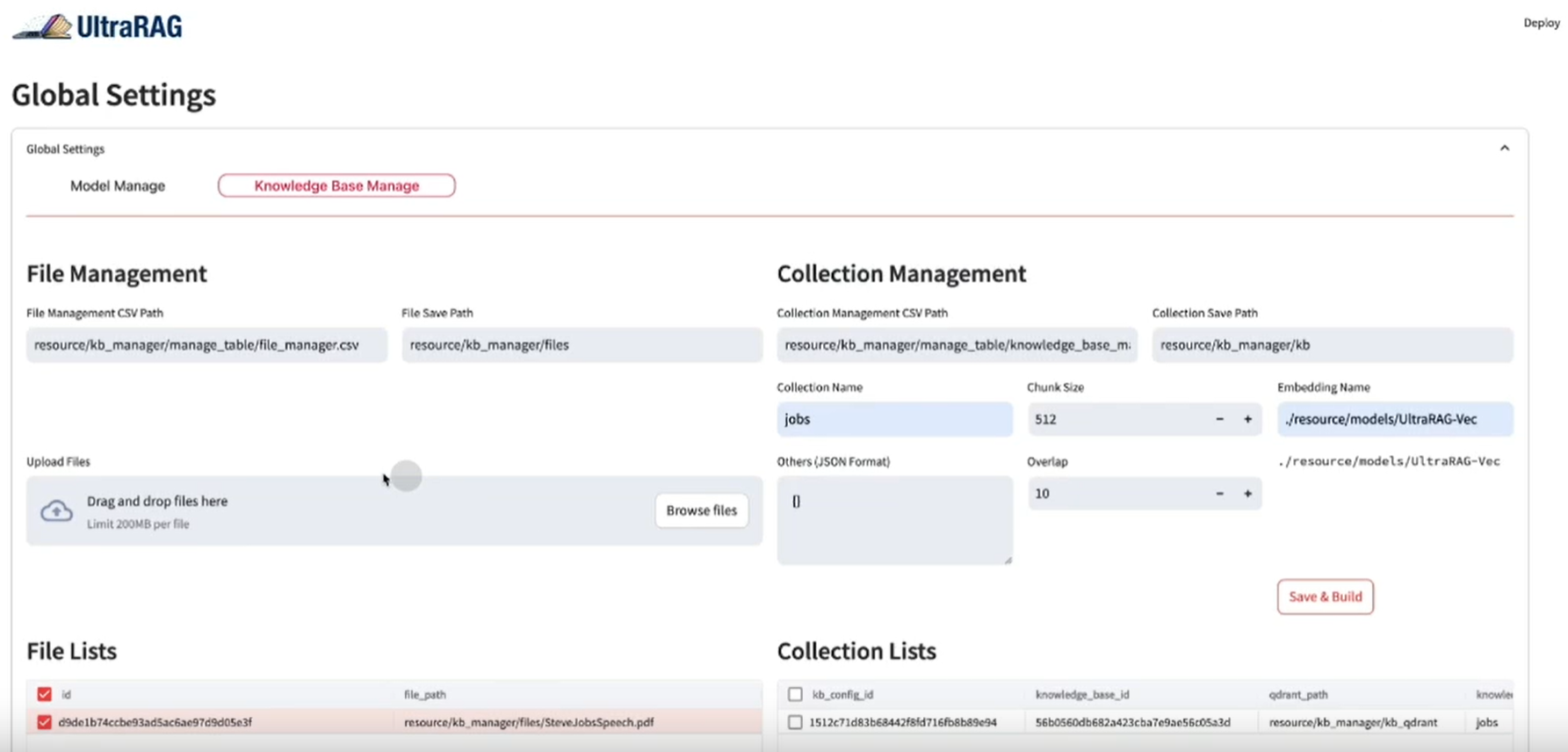

- Multi-database support: Supports PostgreSQL, Weaviate, Qdrant, Neo4j, Milvus, and other databases.

- Hallucination reduction: Reduces hallucinations in AI applications through optimized pipeline design.

- Developer-friendly: Provides detailed documentation and examples to lower the barrier to entry for developers.

- Scalability: Modular design for easy extension and customization.
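To make the ECL idea and the modular design concrete, here is a minimal sketch of a three-stage pipeline with a swappable storage backend. It is not cognee's implementation: `GraphStore`, `InMemoryStore`, and the three stage functions are hypothetical names, and the `cognify` stage uses a trivial three-word rule where a real pipeline would call an LLM.

```python
from dataclasses import dataclass, field
from typing import Protocol

Triple = tuple[str, str, str]


class GraphStore(Protocol):
    """Anything with a save() method can act as the load target."""

    def save(self, edges: list[Triple]) -> None: ...


@dataclass
class InMemoryStore:
    """Hypothetical backend; a database adapter would expose the same method."""

    edges: list[Triple] = field(default_factory=list)

    def save(self, edges: list[Triple]) -> None:
        self.edges.extend(edges)


def extract(raw_documents: list[str]) -> list[str]:
    """Extract: split raw documents into non-empty sentences."""
    sentences = []
    for doc in raw_documents:
        sentences.extend(s.strip() for s in doc.split(".") if s.strip())
    return sentences


def cognify(sentences: list[str]) -> list[Triple]:
    """Cognify: turn 'Subject verb Object' sentences into triples (LLM placeholder)."""
    edges = []
    for sentence in sentences:
        words = sentence.split()
        if len(words) == 3:
            subject, verb, obj = words
            edges.append((subject, verb.lower(), obj))
    return edges


def load(edges: list[Triple], store: GraphStore) -> None:
    """Load: persist the triples in whichever store was supplied."""
    store.save(edges)


def run_pipeline(raw_documents: list[str], store: GraphStore) -> None:
    load(cognify(extract(raw_documents)), store)


store = InMemoryStore()
run_pipeline(["Alice knows Bob. Bob mentors Carol. This sentence is ignored."], store)
print(store.edges)
```

Because `run_pipeline` depends only on the `save` method, switching the target database means passing a different store object and leaving the stages untouched.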

Usage guide

Installation

- Install with pip:

    pip install cognee

  Or install support for a specific database:

    pip install 'cognee[<database>]'

  For example, to install PostgreSQL and Neo4j support:

    pip install 'cognee[postgres, neo4j]'

- Install with Poetry:

    poetry add cognee

  Or install support for a specific database:

    poetry add cognee -E <database>

  For example, to install PostgreSQL and Neo4j support:

    poetry add cognee -E postgres -E neo4j
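After either installation route, a quick check from Python can confirm that the package is visible in the active environment. The sketch below uses only the standard library; `installed_version` is a hypothetical helper name.

```python
from importlib.metadata import PackageNotFoundError, version


def installed_version(package: str):
    """Return the installed version string of a package, or None if it is absent."""
    try:
        return version(package)
    except PackageNotFoundError:
        return None


cognee_version = installed_version("cognee")
print(cognee_version or "cognee is not installed in this environment")
```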

Usage

- Set the API key:

    import os
    os.environ["LLM_API_KEY"] = "YOUR_OPENAI_API_KEY"

  Or:

    import cognee
    cognee.config.set_llm_api_key("YOUR_OPENAI_API_KEY")

- Create a .env file: Create a .env file and set the API key in it:

    LLM_API_KEY=YOUR_OPENAI_API_KEY

- Use a different LLM provider: See the documentation for how to configure other LLM providers.

- Visualize results: If you are using Network (presumably the NetworkX graph backend), create a Graphistry account and configure it:

    cognee.config.set_graphistry_config({
        "username": "YOUR_USERNAME",
        "password": "YOUR_PASSWORD"
    })
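The environment-variable and .env options above can be combined in application code. The following sketch shows one possible lookup order (process environment first, then the .env file) using only the standard library. It is an assumption about how you might wire this up, not a description of how cognee itself resolves the key; `parse_env_file` and `resolve_llm_api_key` are hypothetical helpers.

```python
import os
from pathlib import Path


def parse_env_file(path) -> dict:
    """Parse simple KEY=VALUE lines, skipping blanks and # comments."""
    values = {}
    file = Path(path)
    if not file.is_file():
        return values
    for line in file.read_text(encoding="utf-8").splitlines():
        line = line.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, _, value = line.partition("=")
        # Drop optional surrounding quotes around the value
        values[key.strip()] = value.strip().strip("'\"")
    return values


def resolve_llm_api_key(env_path=".env", environ=None) -> str:
    """Prefer the process environment, then fall back to the .env file."""
    environ = os.environ if environ is None else environ
    key = environ.get("LLM_API_KEY") or parse_env_file(env_path).get("LLM_API_KEY")
    if not key:
        raise RuntimeError(f"LLM_API_KEY is not set in the environment or in {env_path}")
    return key
```

Failing early with a clear error is usually easier to debug than a rejected request later in the pipeline.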

Core functions

- Data extraction: Extract data with cognee's ECL pipeline, which supports a variety of data sources and formats.

- Data cognition: Process and analyze data with cognee's cognitive module to reduce hallucinations.

- Data loading: Load the processed data into a target database or store, with support for a wide range of databases and vector stores.

Featured functions and how to use them

- Interconnecting and retrieving historical data: Easily interconnect and retrieve past conversations, documents, and audio transcripts using cognee's modular design.

- Reducing developer workload: Detailed documentation and examples lower the barrier to entry for developers and reduce development time and cost.

Visit the official website for more information about the cognee framework.

Read the conceptual overview to learn the theoretical foundations.

See the tutorials and learning materials to get started.

Core prompts

classify_content: classifies content

You are a classification engine and should classify content. Make sure to use one of the existing classification options and not invent your own.

The possible classifications are:

{

"Natural Language Text": {

"type": "TEXT",

"subclass": [

"Articles, essays, and reports",

"Books and manuscripts",

"News stories and blog posts",

"Research papers and academic publications",

"Social media posts and comments",

"Website content and product descriptions",

"Personal narratives and stories"

]

},

"Structured Documents": {

"type": "TEXT",

"subclass": [

"Spreadsheets and tables",

"Forms and surveys",

"Databases and CSV files"

]

},

"Code and Scripts": {

"type": "TEXT",

"subclass": [

"Source code in various programming languages",

"Shell commands and scripts",

"Markup languages (HTML, XML)",

"Stylesheets (CSS) and configuration files (YAML, JSON, INI)"

]

},

"Conversational Data": {

"type": "TEXT",

"subclass": [

"Chat transcripts and messaging history",

"Customer service logs and interactions",

"Conversational AI training data"

]

},

"Educational Content": {

"type": "TEXT",

"subclass": [

"Textbook content and lecture notes",

"Exam questions and academic exercises",

"E-learning course materials"

]

},

"Creative Writing": {

"type": "TEXT",

"subclass": [

"Poetry and prose",

"Scripts for plays, movies, and television",

"Song lyrics"

]

},

"Technical Documentation": {

"type": "TEXT",

"subclass": [

"Manuals and user guides",

"Technical specifications and API documentation",

"Helpdesk articles and FAQs"

]

},

"Legal and Regulatory Documents": {

"type": "TEXT",

"subclass": [

"Contracts and agreements",

"Laws, regulations, and legal case documents",

"Policy documents and compliance materials"

]

},

"Medical and Scientific Texts": {

"type": "TEXT",

"subclass": [

"Clinical trial reports",

"Patient records and case notes",

"Scientific journal articles"

]

},

"Financial and Business Documents": {

"type": "TEXT",

"subclass": [

"Financial reports and statements",

"Business plans and proposals",

"Market research and analysis reports"

]

},

"Advertising and Marketing Materials": {

"type": "TEXT",

"subclass": [

"Ad copies and marketing slogans",

"Product catalogs and brochures",

"Press releases and promotional content"

]

},

"Emails and Correspondence": {

"type": "TEXT",

"subclass": [

"Professional and formal correspondence",

"Personal emails and letters"

]

},

"Metadata and Annotations": {

"type": "TEXT",

"subclass": [

"Image and video captions",

"Annotations and metadata for various media"

]

},

"Language Learning Materials": {

"type": "TEXT",

"subclass": [

"Vocabulary lists and grammar rules",

"Language exercises and quizzes"

]

},

"Audio Content": {

"type": "AUDIO",

"subclass": [

"Music tracks and albums",

"Podcasts and radio broadcasts",

"Audiobooks and audio guides",

"Recorded interviews and speeches",

"Sound effects and ambient sounds"

]

},

"Image Content": {

"type": "IMAGE",

"subclass": [

"Photographs and digital images",

"Illustrations, diagrams, and charts",

"Infographics and visual data representations",

"Artwork and paintings",

"Screenshots and graphical user interfaces"

]

},

"Video Content": {

"type": "VIDEO",

"subclass": [

"Movies and short films",

"Documentaries and educational videos",

"Video tutorials and how-to guides",

"Animated features and cartoons",

"Live event recordings and sports broadcasts"

]

},

"Multimedia Content": {

"type": "MULTIMEDIA",

"subclass": [

"Interactive web content and games",

"Virtual reality (VR) and augmented reality (AR) experiences",

"Mixed media presentations and slide decks",

"E-learning modules with integrated multimedia",

"Digital exhibitions and virtual tours"

]

},

"3D Models and CAD Content": {

"type": "3D_MODEL",

"subclass": [

"Architectural renderings and building plans",

"Product design models and prototypes",

"3D animations and character models",

"Scientific simulations and visualizations",

"Virtual objects for AR/VR environments"

]

},

"Procedural Content": {

"type": "PROCEDURAL",

"subclass": [

"Tutorials and step-by-step guides",

"Workflow and process descriptions",

"Simulation and training exercises",

"Recipes and crafting instructions"

]

}

}
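Because the prompt tells the model to pick from a fixed list, it is worth checking the reply before trusting it. The sketch below validates a reply against a two-entry excerpt of the taxonomy above. The reply shape (`label` and `subclass` keys in a JSON object) is an assumption made for illustration, and `validate_classification` is a hypothetical helper, not part of cognee.

```python
import json

# Two-entry excerpt of the taxonomy shown above; a real check would load all of it.
TAXONOMY = {
    "Code and Scripts": {
        "type": "TEXT",
        "subclass": [
            "Source code in various programming languages",
            "Shell commands and scripts",
            "Markup languages (HTML, XML)",
            "Stylesheets (CSS) and configuration files (YAML, JSON, INI)",
        ],
    },
    "Audio Content": {
        "type": "AUDIO",
        "subclass": [
            "Music tracks and albums",
            "Podcasts and radio broadcasts",
            "Audiobooks and audio guides",
            "Recorded interviews and speeches",
            "Sound effects and ambient sounds",
        ],
    },
}


def validate_classification(raw_response: str, taxonomy=TAXONOMY):
    """Return (label, type, subclass) or raise ValueError for invented options."""
    result = json.loads(raw_response)
    label = result.get("label")
    if label not in taxonomy:
        raise ValueError(f"Unknown classification: {label!r}")
    subclass = result.get("subclass")
    if subclass not in taxonomy[label]["subclass"]:
        raise ValueError(f"Unknown subclass for {label!r}: {subclass!r}")
    return label, taxonomy[label]["type"], subclass


reply = '{"label": "Code and Scripts", "subclass": "Shell commands and scripts"}'
print(validate_classification(reply))
```

A reply that names a category outside the list raises `ValueError`, which a caller could use to retry the request.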

generate_cog_layers: generates cognitive layers

You are tasked with analyzing `{{ data_type }}` files, especially in a multilayer network context for tasks such as analysis, categorization, and feature extraction. Various layers can be incorporated to capture the depth and breadth of information contained within the {{ data_type }}.

These layers can help in understanding the content, context, and characteristics of the `{{ data_type }}`.

Your objective is to extract meaningful layers of information that will contribute to constructing a detailed multilayer network or knowledge graph.

Approach this task by considering the unique characteristics and inherent properties of the data at hand.

VERY IMPORTANT: The context you are working in is `{{ category_name }}` and the specific domain you are extracting data on is `{{ category_name }}`.

Guidelines for Layer Extraction:

Take into account: The content type, in this case, is: `{{ category_name }}`, should play a major role in how you decompose into layers.

Based on your analysis, define and describe the layers you've identified, explaining their relevance and contribution to understanding the dataset. Your independent identification of layers will enable a nuanced and multifaceted representation of the data, enhancing applications in knowledge discovery, content analysis, and information retrieval.
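The `{{ data_type }}` and `{{ category_name }}` markers in this prompt are template variables that are filled in before the prompt is sent. The dependency-free sketch below mimics only that basic substitution with a regular expression; it is a stand-in for illustration, not Jinja2, and `fill_placeholders` is a hypothetical name. The template string is a shortened paraphrase of the prompt above.

```python
import re

# Matches {{ name }} with optional whitespace inside the braces
PLACEHOLDER = re.compile(r"\{\{\s*(\w+)\s*\}\}")


def fill_placeholders(template: str, context: dict) -> str:
    """Replace each {{ name }} with context[name]; fail loudly on missing names."""

    def substitute(match):
        name = match.group(1)
        if name not in context:
            raise KeyError(f"Missing template variable: {name}")
        return str(context[name])

    return PLACEHOLDER.sub(substitute, template)


template = (
    "You are tasked with analyzing `{{ data_type }}` files. "
    "The content type is `{{ category_name }}`."
)
prompt = fill_placeholders(
    template,
    {"data_type": "TEXT", "category_name": "Technical Documentation"},
)
print(prompt)
```

Unlike Jinja2's default behavior, which renders an undefined variable as an empty string, this sketch raises on a missing name so that an incomplete prompt is never sent by accident.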

generate_graph_prompt: generates the graph-extraction prompt

You are a top-tier algorithm

designed for extracting information in structured formats to build a knowledge graph.

- **Nodes** represent entities and concepts. They're akin to Wikipedia nodes.

- **Edges** represent relationships between concepts. They're akin to Wikipedia links.

- The aim is to achieve simplicity and clarity in the

knowledge graph, making it accessible for a vast audience.

YOU ARE ONLY EXTRACTING DATA FOR COGNITIVE LAYER `{{ layer }}`

## 1. Labeling Nodes

- **Consistency**: Ensure you use basic or elementary types for node labels.

- For example, when you identify an entity representing a person,

always label it as **"Person"**.

Avoid using more specific terms like "mathematician" or "scientist".

- Include event, entity, time, or action nodes to the category.

- Classify the memory type as episodic or semantic.

- **Node IDs**: Never utilize integers as node IDs.

Node IDs should be names or human-readable identifiers found in the text.

## 2. Handling Numerical Data and Dates

- Numerical data, like age or other related information,

should be incorporated as attributes or properties of the respective nodes.

- **No Separate Nodes for Dates/Numbers**:

Do not create separate nodes for dates or numerical values.

Always attach them as attributes or properties of nodes.

- **Property Format**: Properties must be in a key-value format.

- **Quotation Marks**: Never use escaped single or double quotes within property values.

- **Naming Convention**: Use snake_case for relationship names, e.g., `acted_in`.

## 3. Coreference Resolution

- **Maintain Entity Consistency**:

When extracting entities, it's vital to ensure consistency.

If an entity, such as "John Doe", is mentioned multiple times

in the text but is referred to by different names or pronouns (e.g., "Joe", "he"),

always use the most complete identifier for that entity throughout the knowledge graph.

In this example, use "John Doe" as the entity ID.

Remember, the knowledge graph should be coherent and easily understandable,

so maintaining consistency in entity references is crucial.

## 4. Strict Compliance

Adhere to the rules strictly. Non-compliance will result in termination.
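Several of the rules in this prompt are mechanical enough to check in code once the model returns a graph. The sketch below tests three of them: node IDs must not be integers, relationship names must be snake_case, and edges must point at known nodes. The `Node` and `Edge` shapes and the `validate_graph` function are assumptions made for illustration and do not reflect cognee's actual data model.

```python
import re
from dataclasses import dataclass, field

SNAKE_CASE = re.compile(r"^[a-z]+(_[a-z0-9]+)*$")


@dataclass
class Node:
    id: str
    label: str
    # Dates and numbers live here as key-value properties, not as separate nodes
    properties: dict = field(default_factory=dict)


@dataclass
class Edge:
    source: str
    target: str
    relation: str


def validate_graph(nodes, edges):
    """Return a list of rule violations; an empty list means the graph passed."""
    problems = []
    ids = set()
    for node in nodes:
        if str(node.id).strip().isdigit():
            problems.append(f"node id {node.id!r} is an integer")
        ids.add(node.id)
    for edge in edges:
        if not SNAKE_CASE.match(edge.relation):
            problems.append(f"relation {edge.relation!r} is not snake_case")
        for endpoint in (edge.source, edge.target):
            if endpoint not in ids:
                problems.append(f"edge references unknown node {endpoint!r}")
    return problems


good_nodes = [Node("John Doe", "Person", {"age": 42}), Node("Example Film", "Movie")]
good_edges = [Edge("John Doe", "Example Film", "acted_in")]
print(validate_graph(good_nodes, good_edges))

bad_nodes = [Node("7", "Person"), Node("Example Film", "Movie")]
bad_edges = [Edge("7", "Example Film", "ActedIn")]
print(validate_graph(bad_nodes, bad_edges))
```

Rules such as coreference resolution or choosing elementary labels need judgment and are not covered by this kind of check.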

read_query_prompt: reads a query prompt from a file

    from os import path
    import logging

    from cognee.root_dir import get_absolute_path


    def read_query_prompt(prompt_file_name: str):
        """Read a query prompt from a file; return None if it cannot be read."""
        try:
            file_path = path.join(get_absolute_path("./infrastructure/llm/prompts"), prompt_file_name)
            with open(file_path, "r", encoding="utf-8") as file:
                return file.read()
        except FileNotFoundError:
            # Pass values as logging arguments instead of mixing %s into an f-string
            logging.error("Error: Prompt file not found. Attempted to read: %s", file_path)
            return None
        except Exception as e:
            logging.error("An error occurred: %s", e)
            return None
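Since the function returns `None` when it cannot read the file, callers need to check the result before using it as a prompt. The self-contained sketch below reproduces the same pattern without importing cognee: `read_prompt` takes an explicit base directory, and the file name `summarize_content.txt` is a hypothetical example rather than a confirmed file in the library.

```python
import logging
import tempfile
from pathlib import Path


def read_prompt(base_dir, prompt_file_name: str):
    """Return the prompt text, or None if the file cannot be read."""
    file_path = Path(base_dir) / prompt_file_name
    try:
        return file_path.read_text(encoding="utf-8")
    except OSError as error:  # FileNotFoundError is a subclass of OSError
        logging.error("Could not read prompt file %s: %s", file_path, error)
        return None


with tempfile.TemporaryDirectory() as prompts_dir:
    # Create one prompt file so that the success path can be exercised
    (Path(prompts_dir) / "summarize_content.txt").write_text(
        "Be brief and concise", encoding="utf-8"
    )
    found = read_prompt(prompts_dir, "summarize_content.txt")
    missing = read_prompt(prompts_dir, "does_not_exist.txt")

# Callers must handle the None case explicitly
system_prompt = missing if missing is not None else found
print(system_prompt)
```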

render_prompt: renders a prompt template

    from jinja2 import Environment, FileSystemLoader, select_autoescape

    from cognee.root_dir import get_absolute_path


    def render_prompt(filename: str, context: dict) -> str:
        """Render a Jinja2 template.

        :param filename: The name of the template file to render.
        :param context: The context to render the template with.
        :return: The rendered template as a string."""

        # Set the base directory relative to the cognee root directory
        base_directory = get_absolute_path("./infrastructure/llm/prompts")

        # Initialize the Jinja2 environment to load templates from the filesystem
        env = Environment(
            loader=FileSystemLoader(base_directory),
            autoescape=select_autoescape(["html", "xml", "txt"])
        )

        # Load the template by name
        template = env.get_template(filename)

        # Render the template with the provided context
        rendered_template = template.render(context)

        return rendered_template

summarize_content: summarizes content

You are a summarization engine and you should summarize content. Be brief and concise.

© Copyright notice

This article is copyrighted by AI Sharing Circle. Please do not reproduce it without permission.
