Building a Local RAG Application with Ollama + LangChain

Practical AI Tutorials · Published 1 year ago · AI Sharing Circle

This tutorial assumes you are already familiar with the following concepts:

- Chat models

- Chaining runnables

- Embeddings

- Vector stores

- Retrieval-augmented generation

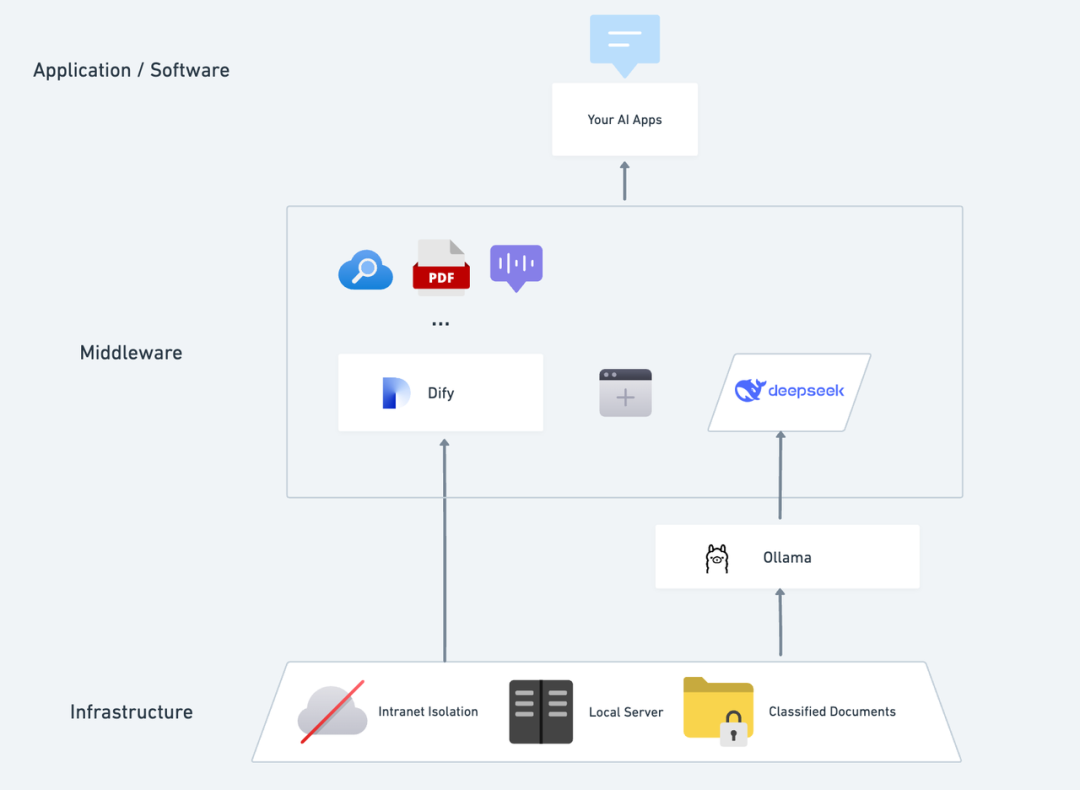

The popularity of projects such as llama.cpp, Ollama, and llamafile shows how important it has become to run large language models locally.

LangChain has integrations with a number of open-source LLM providers, and Ollama is one of them.

Environment

First, we need to set up the environment.

Ollama's GitHub repository provides detailed instructions, which are summarized below.

- Download and run the Ollama application.

- From the command line, pull models from the Ollama model list and the text embedding model list. This tutorial uses llama3.1:8b and nomic-embed-text as examples.

  - Run ollama pull llama3.1:8b on the command line to pull the general-purpose open-source large language model llama3.1:8b.

  - Run ollama pull nomic-embed-text on the command line to pull the text embedding model nomic-embed-text.

- While the application is running, all models are automatically served at localhost:11434.

- Choose models with your local hardware in mind. The reference configuration for this tutorial is GPU Memory > 8GB.
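The setup steps above can be checked from Python before going further. The following is a minimal sketch that uses only the standard library and assumes the Ollama server lists its local models at the /api/tags endpoint on localhost:11434. The helper names (model_names, missing_models, list_local_models) are hypothetical and are not part of Ollama or LangChain.

```python
import json
import urllib.error
import urllib.request

REQUIRED = ["llama3.1:8b", "nomic-embed-text"]  # models used in this tutorial


def model_names(tags_payload):
    """Extract model names from a parsed /api/tags response."""
    return [m["name"] for m in tags_payload.get("models", [])]


def missing_models(available, required=REQUIRED):
    """Return the required models that are not in `available`.

    A required name without a tag (e.g. "nomic-embed-text") is treated
    as also matching its ":latest" tag.
    """
    have = set(available)
    missing = []
    for name in required:
        candidates = {name} if ":" in name else {name, name + ":latest"}
        if not candidates & have:
            missing.append(name)
    return missing


def list_local_models(base_url="http://localhost:11434", timeout=3):
    """Return the locally available model names, or None if the server is unreachable."""
    try:
        with urllib.request.urlopen(base_url + "/api/tags", timeout=timeout) as resp:
            return model_names(json.load(resp))
    except (urllib.error.URLError, OSError, ValueError):
        return None


if __name__ == "__main__":
    models = list_local_models()
    if models is None:
        print("Ollama does not appear to be running on localhost:11434")
    else:
        print("Missing models:", missing_models(models) or "none")
```

If the script reports missing models, pull them with the ollama pull commands listed above and run it again.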

Next, install the packages needed for local embeddings, vector storage, and model inference.

# langchain_community

%pip install -qU langchain langchain_community

# Chroma

%pip install -qU langchain_chroma

# Ollama

%pip install -qU langchain_ollama

Note: you may need to restart the kernel to use updated packages.
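After restarting the kernel, it can be useful to confirm that the packages are importable. This is a small sketch using only the standard library; the function name find_missing is hypothetical, and the list simply mirrors the import names of the packages installed above.

```python
import importlib.util

# Import names of the packages installed above
PACKAGES = ["langchain", "langchain_community", "langchain_chroma", "langchain_ollama"]


def find_missing(packages):
    """Return the top-level package names that cannot be found in this environment."""
    return [name for name in packages if importlib.util.find_spec(name) is None]


if __name__ == "__main__":
    missing = find_missing(PACKAGES)
    print("Missing packages:", ", ".join(missing) if missing else "none")
```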


You can also see this page for the full list of available embedding models.

Document Loading

Now let's load and split a sample document.

We will use Lilian Weng's blog post on agents as the example.

# The splitter lives in the langchain_text_splitters package in current LangChain releases
from langchain_text_splitters import RecursiveCharacterTextSplitter

from langchain_community.document_loaders import WebBaseLoader

loader = WebBaseLoader("https://lilianweng.github.io/posts/2023-06-23-agent/")

data = loader.load()

text_splitter = RecursiveCharacterTextSplitter(chunk_size=500, chunk_overlap=0)

all_splits = text_splitter.split_documents(data)
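To make the chunk_size and chunk_overlap parameters concrete, here is a deliberately simplified sketch that cuts text into fixed-width character windows. It is not how RecursiveCharacterTextSplitter works internally (that splitter prefers natural boundaries such as paragraph and line breaks), and the function name split_fixed is hypothetical.

```python
def split_fixed(text, chunk_size=500, chunk_overlap=0):
    """Split text into windows of at most chunk_size characters.

    Consecutive windows share chunk_overlap characters.
    """
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    if not 0 <= chunk_overlap < chunk_size:
        raise ValueError("chunk_overlap must be in [0, chunk_size)")
    step = chunk_size - chunk_overlap
    chunks = []
    start = 0
    while start < len(text):
        chunks.append(text[start:start + chunk_size])
        if start + chunk_size >= len(text):
            break  # the last window already reaches the end of the text
        start += step
    return chunks


sample = "abcdefghij"
print(split_fixed(sample, chunk_size=4, chunk_overlap=0))  # ['abcd', 'efgh', 'ij']
print(split_fixed(sample, chunk_size=4, chunk_overlap=2))  # ['abcd', 'cdef', 'efgh', 'ghij']
```

With chunk_overlap=0, as in this tutorial, no text is repeated between chunks; a non-zero overlap repeats the tail of each chunk at the head of the next, which can help when a relevant sentence straddles a chunk boundary.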

Next, initialize the vector store. The text embedding model we use is nomic-embed-text.

from langchain_chroma import Chroma

from langchain_ollama import OllamaEmbeddings

local_embeddings = OllamaEmbeddings(model="nomic-embed-text")

vectorstore = Chroma.from_documents(documents=all_splits, embedding=local_embeddings)

We now have a local vector database. Let's briefly test similarity search.

question = "What are the approaches to Task Decomposition?"

docs = vectorstore.similarity_search(question)

len(docs)

4

docs[0]

Document(metadata={'description': 'Building agents with LLM (large language model) as its core controller is a cool concept. Several proof-of-concepts demos, such as AutoGPT, GPT-Engineer and BabyAGI, serve as inspiring examples. The potentiality of LLM extends beyond generating well-written copies, stories, essays and programs; it can be framed as a powerful general problem solver.\nAgent System Overview In a LLM-powered autonomous agent system, LLM functions as the agent’s brain, complemented by several key components:', 'language': 'en', 'source': 'https://lilianweng.github.io/posts/2023-06-23-agent/', 'title': "LLM Powered Autonomous Agents | Lil'Log"}, page_content='Task decomposition can be done (1) by LLM with simple prompting like "Steps for XYZ.\\n1.", "What are the subgoals for achieving XYZ?", (2) by using task-specific instructions; e.g. "Write a story outline." for writing a novel, or (3) with human inputs.')
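The search above returns four documents because similarity_search uses k=4 by default. Conceptually, the vector store embeds the question and ranks the stored chunks by how close their vectors are to it. The sketch below illustrates that idea with cosine similarity and hand-made three-dimensional vectors; the metric Chroma actually uses depends on its configuration, and the names cosine_similarity, top_k, and toy_index are hypothetical.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors; 0.0 if either vector is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def top_k(query_vec, indexed, k=4):
    """indexed is a list of (text, vector) pairs; return the k texts closest to query_vec."""
    ranked = sorted(
        indexed,
        key=lambda item: cosine_similarity(query_vec, item[1]),
        reverse=True,
    )
    return [text for text, _ in ranked[:k]]


# Hypothetical 3-dimensional "embeddings"; real embedding vectors are much longer
toy_index = [
    ("task decomposition", [0.9, 0.1, 0.0]),
    ("memory types", [0.1, 0.9, 0.0]),
    ("tool use", [0.0, 0.2, 0.9]),
]
print(top_k([1.0, 0.0, 0.0], toy_index, k=2))  # ['task decomposition', 'memory types']
```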

Next, instantiate the large language model llama3.1:8b and check that model inference works correctly:

from langchain_ollama import ChatOllama

model = ChatOllama(
    model="llama3.1:8b",
)

response_message = model.invoke(
    "Simulate a rap battle between Stephen Colbert and John Oliver"
)

print(response_message.content)

**The scene is set: a packed arena, the crowd on their feet. In the blue corner, we have Stephen Colbert, aka "The O'Reilly Factor" himself. In the red corner, the challenger, John Oliver. The judges are announced as Tina Fey, Larry Wilmore, and Patton Oswalt. The crowd roars as the two opponents face off.**

**Stephen Colbert (aka "The Truth with a Twist"):**

Yo, I'm the king of satire, the one they all fear

My show's on late, but my jokes are clear

I skewer the politicians, with precision and might

They tremble at my wit, day and night

**John Oliver:**

Hold up, Stevie boy, you may have had your time

But I'm the new kid on the block, with a different prime

Time to wake up from that 90s coma, son

My show's got bite, and my facts are never done

**Stephen Colbert:**

Oh, so you think you're the one, with the "Last Week" crown

But your jokes are stale, like the ones I wore down

I'm the master of absurdity, the lord of the spin

You're just a British import, trying to fit in

**John Oliver:**

Stevie, my friend, you may have been the first

But I've got the skill and the wit, that's never blurred

My show's not afraid, to take on the fray

I'm the one who'll make you think, come what may

**Stephen Colbert:**

Well, it's time for a showdown, like two old friends

Let's see whose satire reigns supreme, till the very end

But I've got a secret, that might just seal your fate

My humor's contagious, and it's already too late!

**John Oliver:**

Bring it on, Stevie! I'm ready for you

I'll take on your jokes, and show them what to do

My sarcasm's sharp, like a scalpel in the night

You're just a relic of the past, without a fight

**The judges deliberate, weighing the rhymes and the flow. Finally, they announce their decision:**

Tina Fey: I've got to go with John Oliver. His jokes were sharper, and his delivery was smoother.

Larry Wilmore: Agreed! But Stephen Colbert's still got that old-school charm.

Patton Oswalt: You know what? It's a tie. Both of them brought the heat!

**The crowd goes wild as both opponents take a bow. The rap battle may be over, but the satire war is just beginning...

Building Chain Expressions

We can build a summarization chain by passing in the retrieved documents and a simple prompt.

The chain formats the prompt template with the supplied input values and passes the formatted string to the specified model:

from langchain_core.output_parsers import StrOutputParser

from langchain_core.prompts import ChatPromptTemplate

prompt = ChatPromptTemplate.from_template(

"Summarize the main themes in these retrieved docs: {docs}"

)

# Convert the retrieved documents into a single string
def format_docs(docs):
    return "\n\n".join(doc.page_content for doc in docs)

chain = {"docs": format_docs} | prompt | model | StrOutputParser()

question = "What are the approaches to Task Decomposition?"

docs = vectorstore.similarity_search(question)

chain.invoke(docs)

'The main themes in these documents are:\n\n1. **Task Decomposition**: The process of breaking down complex tasks into smaller, manageable subgoals is crucial for efficient task handling.\n2. **Autonomous Agent System**: A system powered by Large Language Models (LLMs) that can perform planning, reflection, and refinement to improve the quality of final results.\n3. **Challenges in Planning and Decomposition**:\n\t* Long-term planning and task decomposition are challenging for LLMs.\n\t* Adjusting plans when faced with unexpected errors is difficult for LLMs.\n\t* Humans learn from trial and error, making them more robust than LLMs in certain situations.\n\nOverall, the documents highlight the importance of task decomposition and planning in autonomous agent systems powered by LLMs, as well as the challenges that still need to be addressed.'
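The | operator in the chain above composes steps so that the output of each one becomes the input of the next. The following toy sketch shows that idea with a tiny wrapper class. It is not LangChain's implementation; the Step class and the fake model are hypothetical stand-ins that run without Ollama.

```python
class Step:
    """Toy stand-in for a runnable: wraps a function and supports the | operator."""

    def __init__(self, func):
        self.func = func

    def invoke(self, value):
        return self.func(value)

    def __or__(self, other):
        # self | other: run self first, then feed its output to other
        return Step(lambda value: other.invoke(self.invoke(value)))


TEMPLATE = "Summarize the main themes in these retrieved docs: {docs}"

format_step = Step(lambda docs: {"docs": "\n\n".join(docs)})   # like {"docs": format_docs}
prompt_step = Step(lambda values: TEMPLATE.format(**values))   # like the prompt template
fake_model = Step(lambda prompt: f"  [model saw {len(prompt)} characters]  ")
parse_step = Step(str.strip)                                   # like an output parser

toy_chain = format_step | prompt_step | fake_model | parse_step
print(toy_chain.invoke(["first chunk", "second chunk"]))
```

In the real chain, LangChain also accepts a plain dict such as {"docs": format_docs} on the left of |, so no explicit wrapper is needed there.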

Simple Q&A

from langchain_core.runnables import RunnablePassthrough

RAG_TEMPLATE = """
You are an assistant for question-answering tasks. Use the following pieces of retrieved context to answer the question. If you don't know the answer, just say that you don't know. Use three sentences maximum and keep the answer concise.

<context>
{context}
</context>

Answer the following question:

{question}"""

rag_prompt = ChatPromptTemplate.from_template(RAG_TEMPLATE)

chain = (
    RunnablePassthrough.assign(context=lambda input: format_docs(input["context"]))
    | rag_prompt
    | model
    | StrOutputParser()
)

question = "What are the approaches to Task Decomposition?"

docs = vectorstore.similarity_search(question)

# Run

chain.invoke({"context": docs, "question": question})

'Task decomposition can be done through (1) simple prompting using LLM, (2) task-specific instructions, or (3) human inputs. This approach helps break down large tasks into smaller, manageable subgoals for efficient handling of complex tasks. It enables agents to plan ahead and improve the quality of final results through reflection and refinement.'
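In the chain above, RunnablePassthrough.assign passes the input dict through and replaces the context key with the formatted string, leaving question unchanged. The sketch below mimics that behavior with plain functions. The helpers assign and join_chunks are hypothetical, and plain strings stand in for Document objects.

```python
def assign(**computed):
    """Return a function that copies the input dict and adds or overwrites computed keys."""

    def run(inputs):
        result = dict(inputs)  # copy so the caller's dict is not modified
        for key, func in computed.items():
            result[key] = func(inputs)  # every function sees the original input
        return result

    return run


def join_chunks(chunks):
    """Join text chunks with blank lines, as format_docs does for documents."""
    return "\n\n".join(chunks)


add_context = assign(context=lambda inputs: join_chunks(inputs["context"]))

inputs = {"context": ["chunk A", "chunk B"], "question": "What is X?"}
prompt_values = add_context(inputs)
print(prompt_values["context"])
print(prompt_values["question"])
```

The resulting dict has exactly the two keys, context and question, that the prompt template expects.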

Q&A with Retrieval

Finally, we build our Q&A application with semantic retrieval (local RAG), which automatically retrieves the most semantically similar document chunks from the vector database based on the user's question:

retriever = vectorstore.as_retriever()

qa_chain = (
    {"context": retriever | format_docs, "question": RunnablePassthrough()}
    | rag_prompt
    | model
    | StrOutputParser()
)

question = "What are the approaches to Task Decomposition?"

qa_chain.invoke(question)

'Task decomposition can be done through (1) simple prompting in Large Language Models (LLM), (2) using task-specific instructions, or (3) with human inputs. This process involves breaking down large tasks into smaller, manageable subgoals for efficient handling of complex tasks.'
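The dict at the start of qa_chain sends the same question down two branches: one retrieves and formats context, the other passes the question through unchanged. The toy sketch below illustrates this fan-out step. The keyword-based toy_retriever is a hypothetical stand-in for vectorstore.as_retriever(), and fan_out is not a LangChain function.

```python
def fan_out(branches):
    """Return a function that feeds one input to every branch and collects results by key."""
    return lambda value: {key: branch(value) for key, branch in branches.items()}


# Hypothetical chunk store standing in for the vector database
CHUNKS = [
    "Task decomposition can be done by simple prompting.",
    "Memory can be short-term or long-term.",
]


def words_of(text):
    """Lowercase words with surrounding punctuation removed."""
    return {w.strip("?.,").lower() for w in text.split()}


def toy_retriever(question):
    """Return chunks that share at least one word with the question."""
    return [chunk for chunk in CHUNKS if words_of(question) & words_of(chunk)]


def join_chunks(chunks):
    return "\n\n".join(chunks)


build_inputs = fan_out({
    "context": lambda q: join_chunks(toy_retriever(q)),  # like retriever | format_docs
    "question": lambda q: q,                              # like RunnablePassthrough()
})
print(build_inputs("What is task decomposition?"))
```

This is why qa_chain.invoke takes only the question string: the retrieval step that the earlier chain required by hand is now part of the chain itself.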

Summary

Congratulations! At this point you have fully implemented a RAG application built on the LangChain framework and local models. You can use this tutorial as a starting point to swap in other local models and compare their behavior and capabilities, extend it to make the application more capable, or add more useful and interesting features.

© Copyright notice

Copyright of this article belongs to AI Sharing Circle. Please do not reproduce without permission.
