AnimeGamer: An Open Source Tool for Generating Anime Videos and Character Interactions with Language Commands

General Introduction

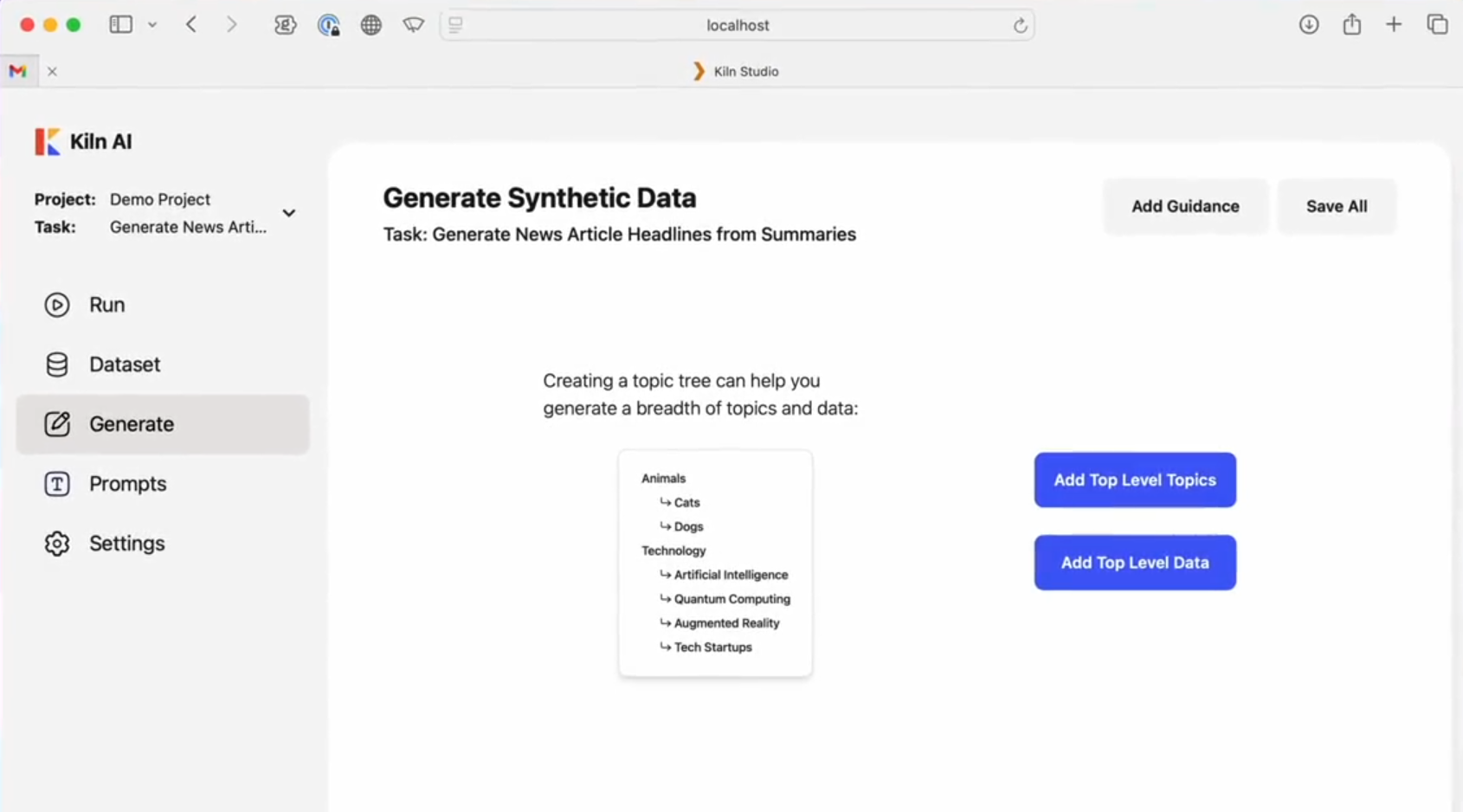

AnimeGamer is an open source tool from Tencent's ARC Lab. Users can generate anime videos with simple language commands, such as "Sousuke drives around in a purple car," and have characters from different anime interact with each other, such as Kiki from Kiki's Delivery Service and Pazu from Castle in the Sky. It is built on a Multimodal Large Language Model (MLLM), which automatically creates coherent animated segments while updating the character's status, such as stamina or social values. The project code and models are free and open on GitHub for anime fans and developers to use for creation or experimentation.

Function List

- Generate animation videos: enter language commands to automatically generate clips of character movements and scenes.

- Character interaction support: let characters from different anime meet and interact to create new stories.

- Update character status: records changes in character values such as stamina, social and entertainment levels in real time.

- Keep content coherent: keeps the video and status consistent with the instruction history.

- Open source extensions: complete code and models are provided, and developers are free to adapt them.

Using Help

AnimeGamer requires some basic programming knowledge, but the installation and usage steps are not difficult. Here are detailed instructions to help you get started quickly.

Installation process

- Preparing the environment

You'll need a computer with Python installed, preferably with a GPU (at least 24GB of video memory). Install Git and Anaconda first, then run the following in a terminal:

git clone https://github.com/TencentARC/AnimeGamer.git

cd AnimeGamer

Create a virtual environment:

conda create -n animegamer python=3.10 -y

conda activate animegamer

- Install dependencies

Run inside the virtual environment:

pip install -r requirements.txt

This will install the necessary libraries such as PyTorch.

- Download the models

Download the three model files to the ./checkpoints folder:

- AnimeGamer model: available on Hugging Face.

- Mistral-7B model: available on Hugging Face.

- CogVideoX's 3D-VAE model: go to the checkpoints folder and run:

cd checkpoints

wget https://cloud.tsinghua.edu.cn/f/fdba7608a49c463ba754/?dl=1 -O vae.zip

unzip vae.zip

Make sure the models are all in the right place.

- Test the installation

Return to the project root directory and run:

python inference_MLLM.py

No error means the installation was successful.
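Before running the test script, a quick preflight check can catch the most common setup problems. The following is a minimal sketch, not part of the AnimeGamer repository: the function names are hypothetical, and the checkpoint check only confirms that the ./checkpoints folder is non-empty, because the exact model file names are not listed here.

```python
import shutil
import sys
from pathlib import Path


def python_ok(version=sys.version_info, required=(3, 10)):
    """True when the interpreter's major.minor matches the required version."""
    return tuple(version[:2]) == tuple(required)


def missing_tools(tools=("git", "conda")):
    """Return the tools from `tools` that are not found on PATH."""
    return [tool for tool in tools if shutil.which(tool) is None]


def checkpoints_ready(folder="./checkpoints"):
    """True when the folder exists and holds at least one entry.

    This only checks that something was downloaded; it does not verify
    which model files are present (their names are an unknown here).
    """
    path = Path(folder)
    return path.is_dir() and any(path.iterdir())


if __name__ == "__main__":
    print("Python 3.10:", "ok" if python_ok() else "mismatch")
    print("Missing tools:", missing_tools() or "none")
    print("Checkpoints folder populated:", checkpoints_ready())
```

Run it from the AnimeGamer directory with the animegamer environment active, so that the relative ./checkpoints path and the Python version both refer to the setup described above.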

How to use the main features

At its core, AnimeGamer generates videos and character interactions from language commands. Here's how it works:

Generate anime videos

- Steps

- Edit an instruction file in the ./game_demo folder, such as instructions.txt.

- Enter a command, e.g. "Sousuke is driving around in a purple car in the forest".

- Run MLLM to generate a representation:

python inference_MLLM.py --instruction "Sousuke is driving around in a purple car in the forest"

- Decode to video:

python inference_Decoder.py

- The video will be saved in the ./outputs folder.

- Note

Instructions should be written with clear characters, actions and scenes so that the video is more in line with expectations.
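The two-stage workflow above can be wrapped in a small helper. This is a sketch under stated assumptions: the script names and the --instruction option are taken from the steps above, while `compose_instruction`, `build_commands` and `run_pipeline` are hypothetical helpers introduced only for illustration. Check the repository's README.md for the options the scripts actually accept.

```python
import subprocess
import sys


def compose_instruction(character, action, scene=""):
    """Join character, action and optional scene into one sentence."""
    parts = [character.strip(), action.strip()]
    if scene.strip():
        parts.append(scene.strip())
    return " ".join(parts)


def build_commands(instruction):
    """Return the two commands from the steps above, in order."""
    if not instruction.strip():
        raise ValueError("instruction must not be empty")
    return [
        # Stage 1: the MLLM turns the instruction into a representation.
        [sys.executable, "inference_MLLM.py", "--instruction", instruction],
        # Stage 2: the decoder turns that representation into a video.
        [sys.executable, "inference_Decoder.py"],
    ]


def run_pipeline(instruction, repo_dir="."):
    """Run both stages inside the AnimeGamer checkout, stopping on failure."""
    for command in build_commands(instruction):
        subprocess.run(command, cwd=repo_dir, check=True)


if __name__ == "__main__":
    text = compose_instruction(
        "Sousuke", "is driving around in a purple car", "in the forest"
    )
    # Only print the commands here; call run_pipeline(text) to execute them.
    for command in build_commands(text):
        print(" ".join(command))
```

Keeping character, action and scene as separate arguments makes it harder to leave one of them out, which is the point of the note above. Using `sys.executable` runs the scripts with the same interpreter as the active environment.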

Character Interaction

- Steps

- Enter an interaction command, such as "Kiki teaches Pazu to fly on a broom."

- Run through the steps above to generate an interaction video.

- Features

Supports mixing and interacting with different anime characters to create unique scenes.

Update Character Status

- Steps

- Add a state description to the command, e.g. "Sousuke is tired after running".

- Run inference_MLLM.py. The status will be written to ./outputs/state.json.

- Note

The status is automatically adjusted according to historical instructions to maintain consistency.
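To see how the status changes between two runs, the state file can be loaded and compared with an earlier copy. The sketch below assumes that state.json holds a flat JSON object; the key names stamina, social and entertainment are illustrative guesses based on the values mentioned in this article, not a documented schema.

```python
import json
from pathlib import Path


def load_state(path="./outputs/state.json"):
    """Read the state file and return its contents as a dict."""
    with Path(path).open(encoding="utf-8") as handle:
        return json.load(handle)


def diff_states(before, after):
    """Return {key: (old, new)} for every top-level value that changed."""
    keys = set(before) | set(after)
    return {
        key: (before.get(key), after.get(key))
        for key in sorted(keys)
        if before.get(key) != after.get(key)
    }


if __name__ == "__main__":
    # Hypothetical snapshots; the real key names and value ranges may differ.
    previous = {"stamina": 80, "social": 50, "entertainment": 60}
    current = {"stamina": 65, "social": 50, "entertainment": 70}
    for key, (old, new) in diff_states(previous, current).items():
        print(f"{key}: {old} -> {new}")
```

In practice, copy state.json aside before issuing the next command, then pass both files through `load_state` and `diff_states`.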

Customization and Technical Details

Want to change a feature? You can directly edit the files in ./game_demo. AnimeGamer's technique is a three-step process:

- An encoder processes the action representation, and a diffusion decoder generates the video.

- MLLM predicts the next state based on historical instructions.

- Optimize the decoder to improve video quality.

More details are in GitHub's README.md.
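The data flow of the first two steps can be pictured as a loop in which every turn is conditioned on the full instruction history. The toy sketch below only illustrates that flow: `GameSession`, `fake_predict` and `fake_decode` are hypothetical stand-ins, not the actual MLLM or diffusion decoder, and the stamina rule is invented for the example. The third step (optimizing the decoder) is a training matter and is not shown.

```python
from dataclasses import dataclass, field


@dataclass
class GameSession:
    """Keeps the instruction history that every new turn is conditioned on."""
    history: list = field(default_factory=list)
    state: dict = field(default_factory=lambda: {"stamina": 100})

    def step(self, instruction, predict, decode):
        """Run one turn: predict from the full history, then decode a clip."""
        self.history.append(instruction)
        action_repr, self.state = predict(self.history, self.state)
        return decode(action_repr)


def fake_predict(history, state):
    """Stand-in for the MLLM: echoes the last instruction, drains stamina."""
    new_state = {**state, "stamina": max(0, state["stamina"] - 10)}
    return f"repr({history[-1]})", new_state


def fake_decode(action_repr):
    """Stand-in for the diffusion decoder: returns a clip label."""
    return f"clip<{action_repr}>"


if __name__ == "__main__":
    session = GameSession()
    for text in ["Kiki flies over the town", "Kiki rests on a roof"]:
        print(session.step(text, fake_predict, fake_decode), session.state)
```

Passing `predict` and `decode` in as arguments keeps the loop independent of any particular model, which is why real components could be swapped in without changing `GameSession`.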

Latest Developments

- April 2, 2025: model weights for Kiki's Delivery Service and Ponyo released, along with the paper (arXiv).

- April 1, 2025: inference code released.

- Future plans: launch Gradio interactive demos and training code.

Frequently Asked Questions

- Slow generation? Verify that the GPU has enough memory (24GB), or update the drivers.

- Model download failed? Download manually from Hugging Face.

- Getting an error? Check the Python version (3.10 required) and dependencies.
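For the GPU memory question, a short script can report what PyTorch actually sees. This is a minimal sketch assuming PyTorch was installed through requirements.txt; the helper names are hypothetical. The threshold uses decimal gigabytes, because a card sold as 24GB usually reports slightly less than 24 GiB.

```python
def has_enough_memory(total_bytes, required_gb=24):
    """True when total_bytes is at least required_gb decimal gigabytes."""
    return total_bytes >= required_gb * 10**9


def report_gpu(required_gb=24):
    """Describe the first CUDA device, or explain why it cannot be checked."""
    try:
        import torch  # assumed to come from requirements.txt
    except ImportError:
        return "PyTorch is not installed in this environment."
    if not torch.cuda.is_available():
        return "No CUDA GPU is visible to PyTorch."
    props = torch.cuda.get_device_properties(0)
    verdict = "meets" if has_enough_memory(props.total_memory, required_gb) else "is below"
    size_gb = props.total_memory / 10**9
    return f"{props.name}: {size_gb:.1f} GB ({verdict} the {required_gb} GB guideline)"


if __name__ == "__main__":
    print(report_gpu())
```

If the script reports that no CUDA GPU is visible even though one is installed, the driver or the PyTorch build is the more likely culprit than the memory size.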

With these steps, you'll be able to generate anime videos and character interactions with AnimeGamer.

Application Scenarios

- Anime and manga creation

Anime fans can use it to generate videos, such as having different characters interact, and share them with friends.

- Game testing

Developers can use it to quickly prototype dynamic content and test ideas.

- Learning and practice

Students can use it to learn multimodal technology and video generation for hands-on AI experience.

QA

- Is programming knowledge required?

Yes, basic Python knowledge is needed for installation and tuning, but simple commands are enough to get results.

- Which characters are supported?

Characters from Kiki's Delivery Service and Ponyo are supported now, with more to be added in the future.

- Can it be used commercially?

Yes, but follow the Apache-2.0 license; see GitHub for details.

© Copyright notice

Article copyright belongs to AI Sharing Circle. Please do not reproduce without permission.
