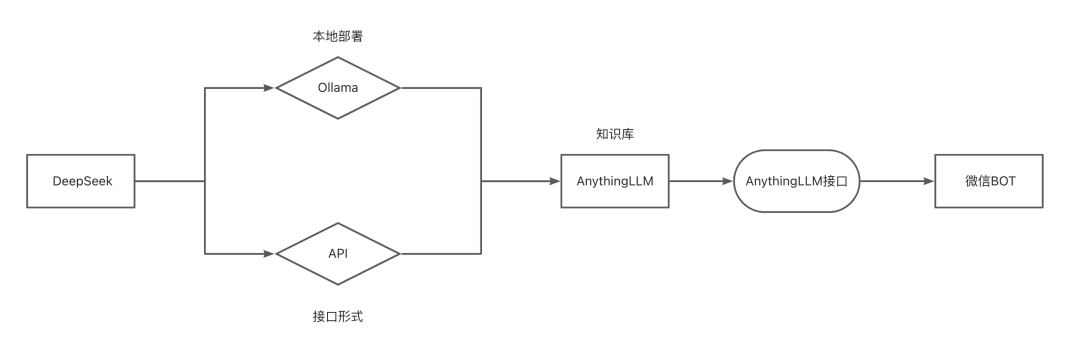

Building a local/API knowledge base with DeepSeek-R1 and connecting it to a WeChat bot

Following up on the previous article, "Local Deployment of DeepSeek-R1 and WeChat Bot Access Tutorial", today I'd like to share a more interesting trick: how to feed our AI assistant some solid material so that it turns into a professional consultant that really knows its field!

Next, I'll walk you through the entire knowledge base build step by step, including environment configuration, knowledge base deployment, uploading documents, and some tuning tips. Finally, we'll plug this knowledge-packed AI into a WeChat bot to create an intelligent assistant that truly understands you and your industry!

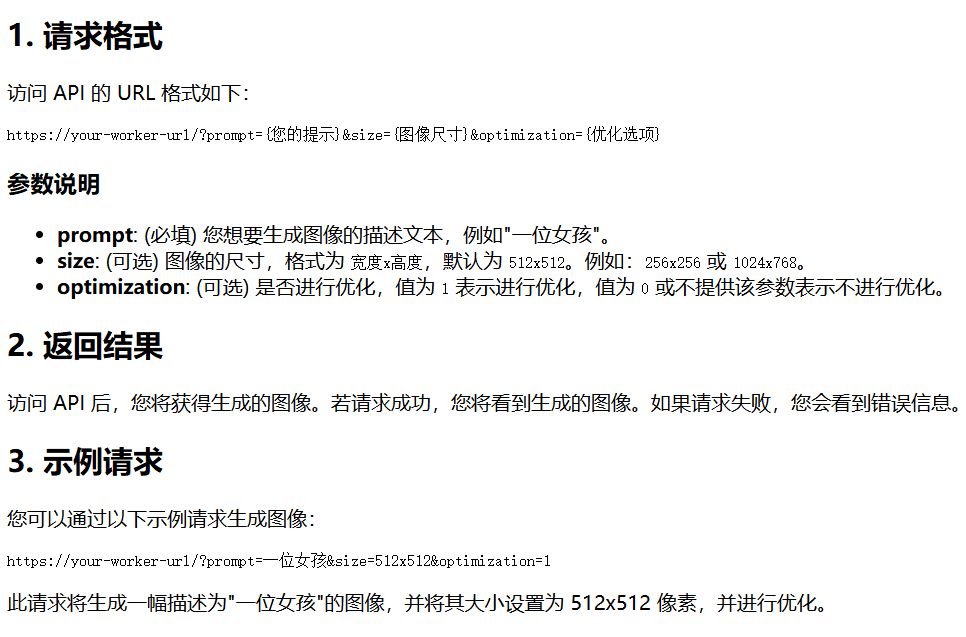

First, let's look at the result. This is a query made after I uploaded the 《人体损伤致残程度分级》 (classification of the degree of disability caused by bodily injury) document:

DeepSeek-R1 deployment

DeepSeek can be deployed in two ways: locally, or by calling a third-party API.

Pros and cons of both:

- Local deployment

✅ Advantages: fully private data, low long-term cost, fast response time

❌ Disadvantages: consumes local compute, requires a fairly powerful machine

- API call

✅ Advantages: fast to set up, maintenance-free, elastic scaling

❌ Disadvantages: data must be sent to a third party, long-term costs are hard to control

Local DeepSeek

First, make sure that Ollama is installed and running properly.
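
If you'd like to check this from a script, here is a minimal sketch. It assumes Ollama is listening on its default address, http://localhost:11434, and uses the GET /api/tags endpoint, which lists the models you have pulled. The model name in the comment is only an example.

```python
import json
import urllib.error
import urllib.request
from typing import List, Optional

OLLAMA_URL = "http://localhost:11434"  # assumed default address; change it if yours differs


def parse_model_names(payload: dict) -> List[str]:
    """Extract model names from the JSON returned by GET /api/tags."""
    return [model["name"] for model in payload.get("models", [])]


def list_local_models(base_url: str = OLLAMA_URL, timeout: float = 3.0) -> Optional[List[str]]:
    """Return the locally pulled models, or None if Ollama cannot be reached."""
    try:
        with urllib.request.urlopen(f"{base_url}/api/tags", timeout=timeout) as resp:
            return parse_model_names(json.load(resp))
    except (urllib.error.URLError, OSError, ValueError):
        return None


# Usage (requires a running Ollama):
# models = list_local_models()
# print("Ollama is not reachable" if models is None else models)  # e.g. ['deepseek-r1:7b']
```

If the function returns None, Ollama is probably not running; if it returns a list without your DeepSeek model, the model has not been pulled yet.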

DeepSeek API

I was actually going to cover only the local deployment approach in this post...

However, since the web version of DeepSeek has been "on strike" lately, I had to fall back on the local ChatBox client. To my surprise, I got to experience the full-strength R1, and it was a blast! This approach turned out to be pretty good too, so I'll cover the API route along the way.

Here are two platforms you can choose from.

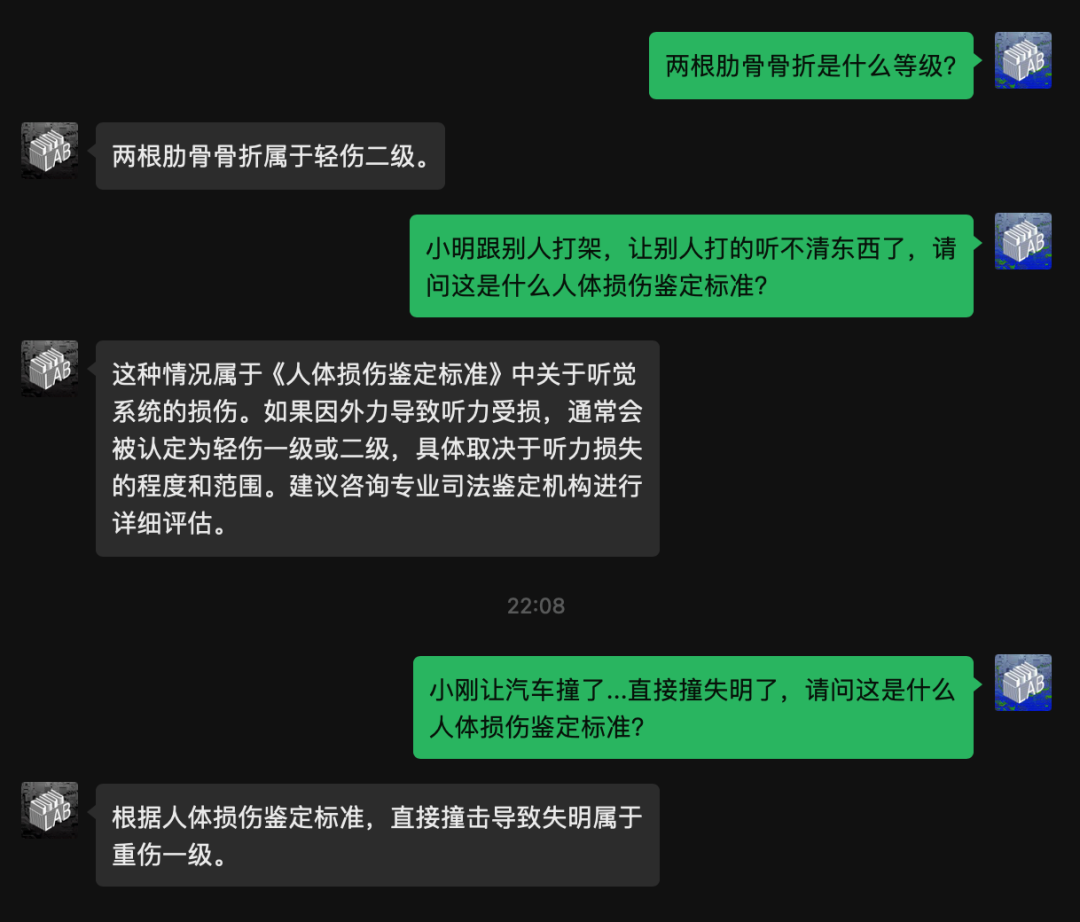

First we need to get an API key; later we will enter it in the knowledge base configuration.

Volcano Ark

Official website

https://console.volcengine.com/ark

Pricing

Input: ¥2/M Tokens

Output: ¥8/M Tokens

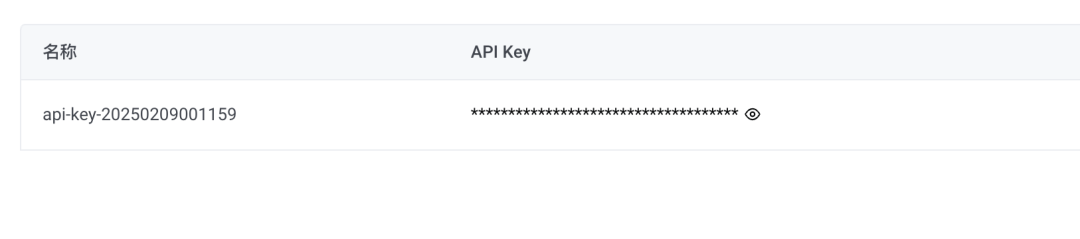

Go to API Key管理 (API Key management) and create an API key.

Create a new secret key and copy the API key.

SiliconFlow

SiliconFlow official website

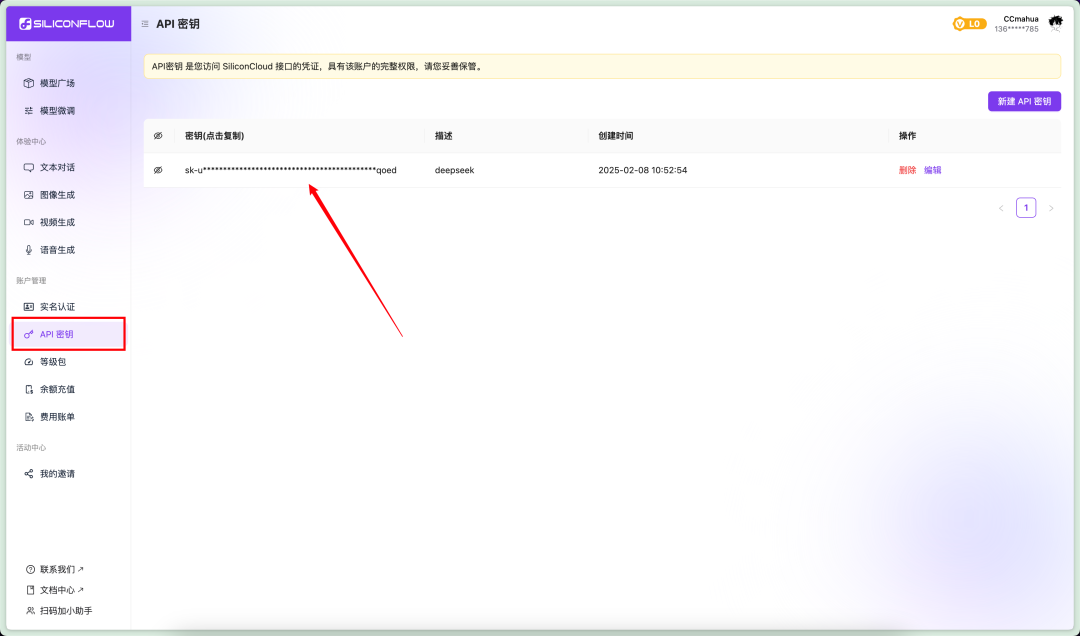

https://cloud.siliconflow.cn/account/ak

Pricing

Input: ¥4/M Tokens

Output: ¥16/M Tokens

In the left sidebar, go to API秘钥 (API keys), click 新建秘钥 (New key), then copy the secret key.
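
To get a feel for what these prices mean in practice, here is a small back-of-the-envelope calculator. The prices are the ones listed above (CNY per million tokens); the workload numbers in the usage lines are made up purely for illustration.

```python
# Prices in CNY per million tokens, copied from the listings above.
PRICES = {
    "volcano_ark": {"input": 2.0, "output": 8.0},
    "siliconflow": {"input": 4.0, "output": 16.0},
}


def estimate_cost(platform: str, input_tokens: int, output_tokens: int) -> float:
    """Estimated cost in CNY for a workload on the given platform."""
    price = PRICES[platform]
    return (input_tokens * price["input"] + output_tokens * price["output"]) / 1_000_000


# Hypothetical workload: 1,000 questions, each about 3,000 input and 500 output tokens.
volcano_cost = estimate_cost("volcano_ark", 3_000_000, 500_000)      # 6 + 4 = 10 CNY
siliconflow_cost = estimate_cost("siliconflow", 3_000_000, 500_000)  # 12 + 8 = 20 CNY
```

Knowledge base questions tend to be input-heavy, because the retrieved document chunks are sent along with every question, so the input price matters more than you might expect.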

Knowledge base

Knowledge base installation

Next we need to connect a knowledge base. Here we use AnythingLLM.

AnythingLLM is an open source Large Language Model (LLM) application framework designed to help users easily build and deploy intelligent Q&A, document analysis, and other applications based on private data.

Go to the AnythingLLM website and download the installer.

https://anythingllm.com/

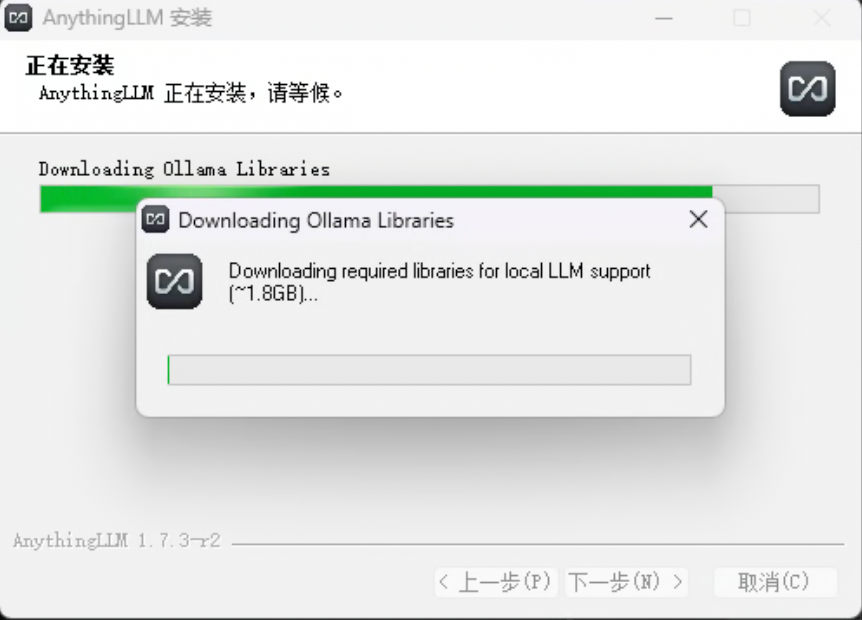

Run the installer. Partway through, it downloads additional required dependencies (GPU and NPU support).

If the download fails, or if you close the download window and skip this step, AnythingLLM will still install successfully.

Don't worry if that happens; you can install the dependencies manually.

The cloud drive link includes a lib zip archive.

Extract the zip archive and place the extracted folder into the your-install-directory\AnythingLLM\resources\ollama directory.

Knowledge base configuration

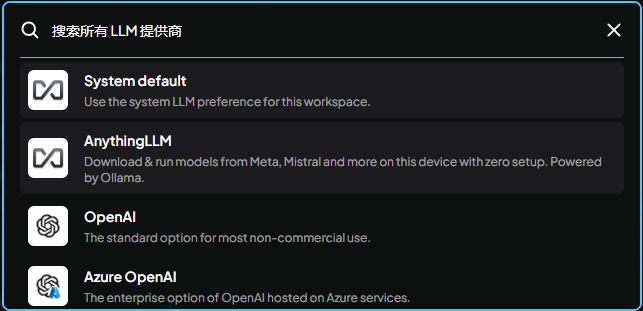

After the installation is complete, enter the AnythingLLM interface, where you need to select a default large model.

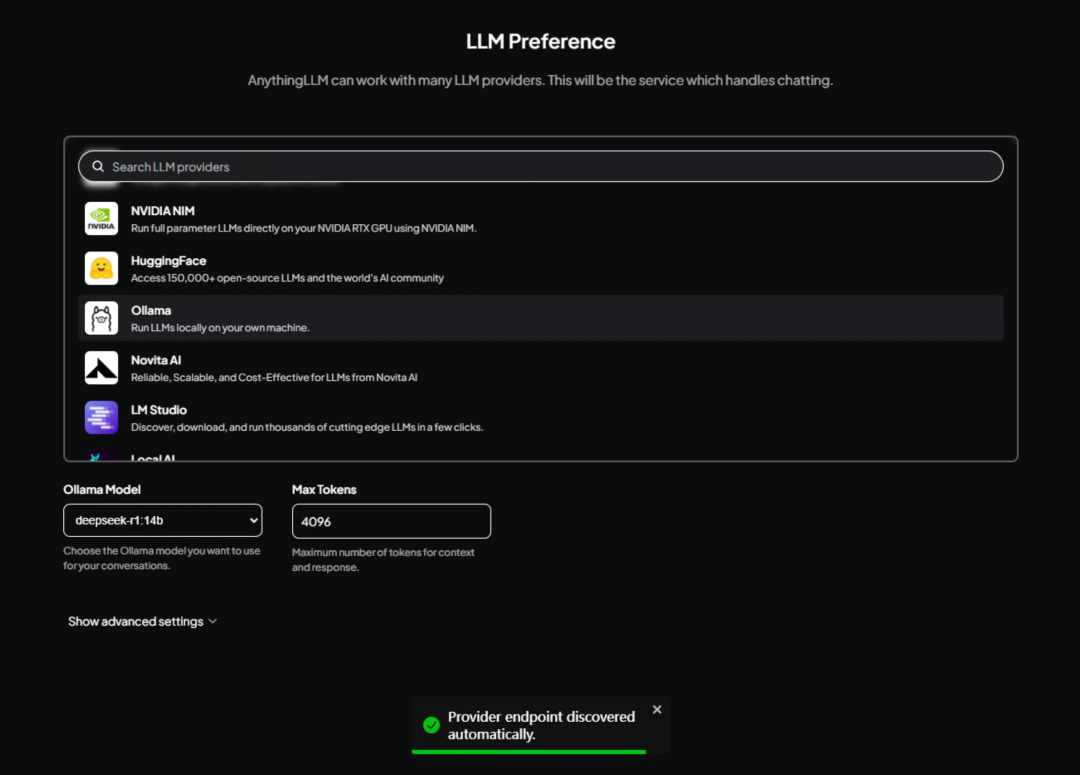

Local configuration

If you're using a local deployment of DeepSeek, scroll down, find Ollama, and select it.

API Configuration

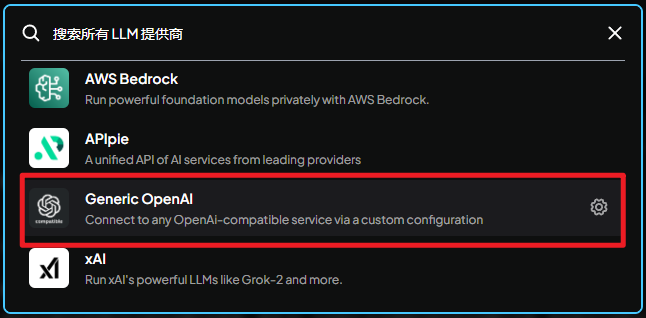

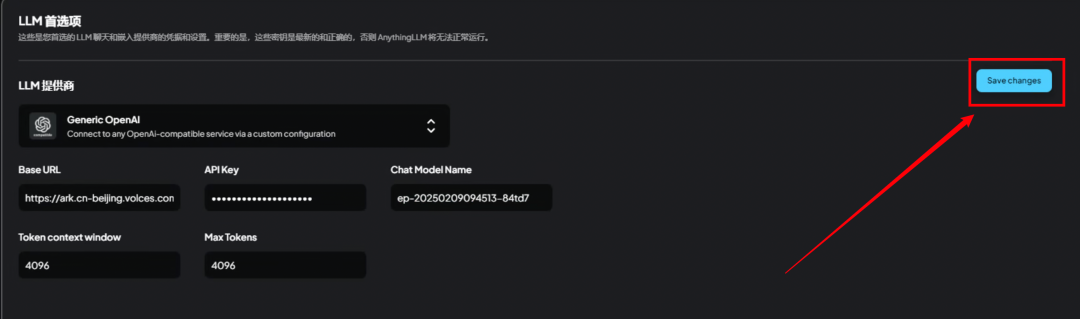

If you are using a third-party API, do not select Ollama at this step; scroll down and find Generic OpenAI instead.

Generic OpenAI:

Connect to any service compatible with the OpenAI interface format through a customized configuration.

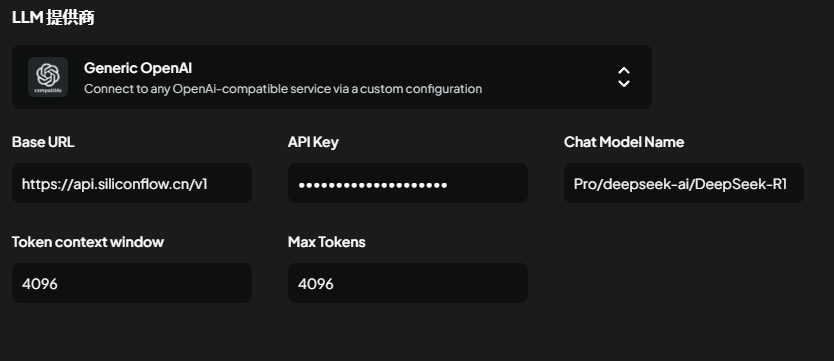

Here you need to fill in 5 parameters.

Using the SiliconFlow API:

Base URL (request address):

https://api.siliconflow.cn/v1

API Key: the API secret key you just obtained.

Chat Model Name (model name):

Pro/deepseek-ai/DeepSeek-R1

Token context window:

4096

Max Tokens (maximum number of tokens):

4096 or 8192, depending on your situation.

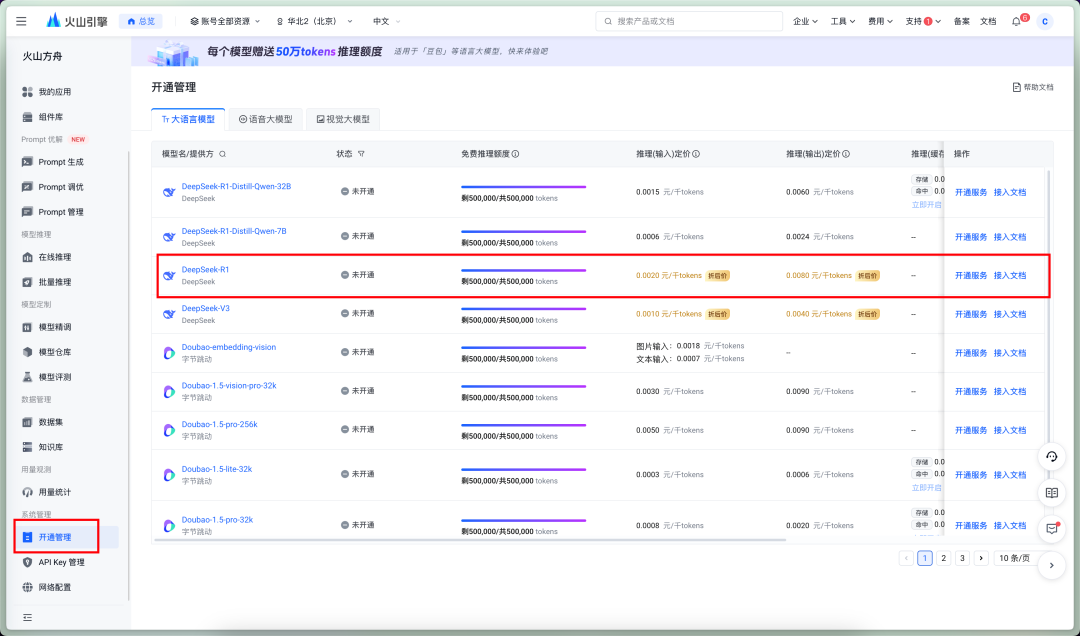

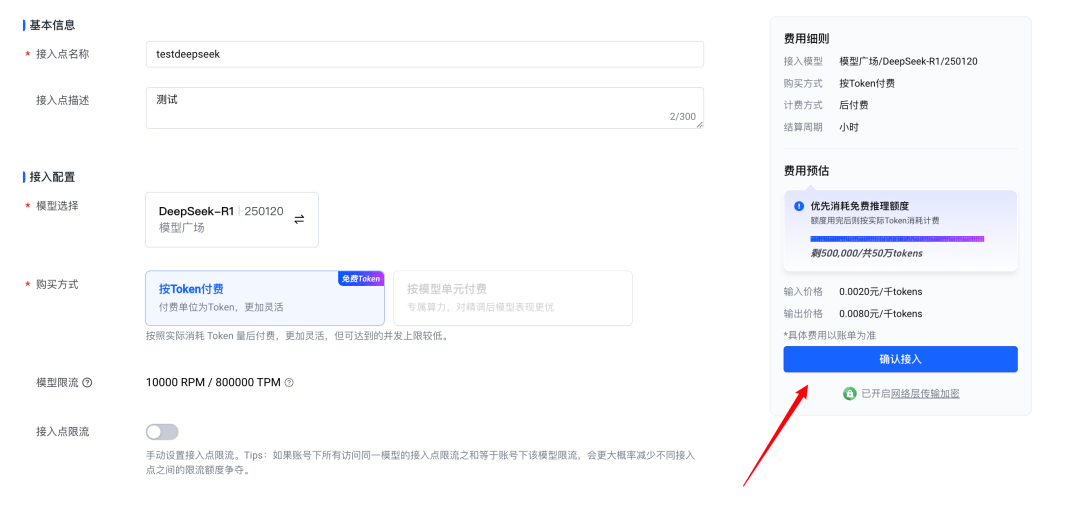

Use the Volcano Ark API:

BaseURLrequest address

https://ark.cn-beijing.volces.com/api/v3

APIkeyAPI secret key, fill in the one you just got.

ChatModelNameModel name

Here is special, Volcano Ark model name needs to be filled with access point ID. we need to go to the platform to create the access point.

Start by turning on DeepSeekR1 service first

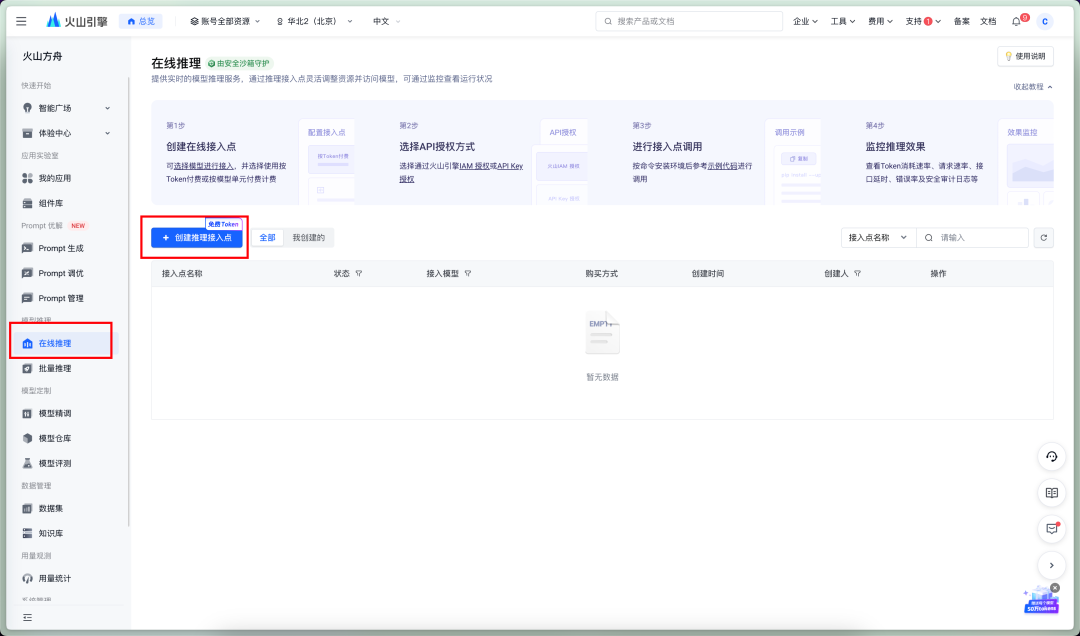

Coming to Online Reasoning - Creating Access Points

Confirmation of access

This ID is the access point ID, just fill in this value.

Token context window(context window)

4096

Max Tokens(Maximum number of tokens)

4096 or 8192 depending on your situation.

Once the above settings are complete, don't forget to click Save changes.
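
If you're curious what AnythingLLM does with these parameters, the sketch below builds the kind of OpenAI-style chat completion request that such a configuration implies. It is only an illustration under that assumption, not AnythingLLM's actual code. The key is a placeholder, and the request is built but not sent.

```python
import json
import urllib.request


def build_chat_request(base_url: str, api_key: str, model: str,
                       question: str, max_tokens: int = 4096) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completion request."""
    body = {
        "model": model,
        "messages": [{"role": "user", "content": question}],
        "max_tokens": max_tokens,
    }
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


req = build_chat_request(
    base_url="https://api.siliconflow.cn/v1",
    api_key="sk-your-key-here",  # placeholder, not a real key
    model="Pro/deepseek-ai/DeepSeek-R1",
    question="Hello",
)

# To actually send it (needs network access and a valid key):
# with urllib.request.urlopen(req, timeout=300) as resp:
#     print(json.load(resp)["choices"][0]["message"]["content"])
```

For Volcano Ark you would swap in its Base URL and pass the access point ID as the model. A quick test like this is a handy way to confirm the key works before blaming the knowledge base.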

Workspace creation

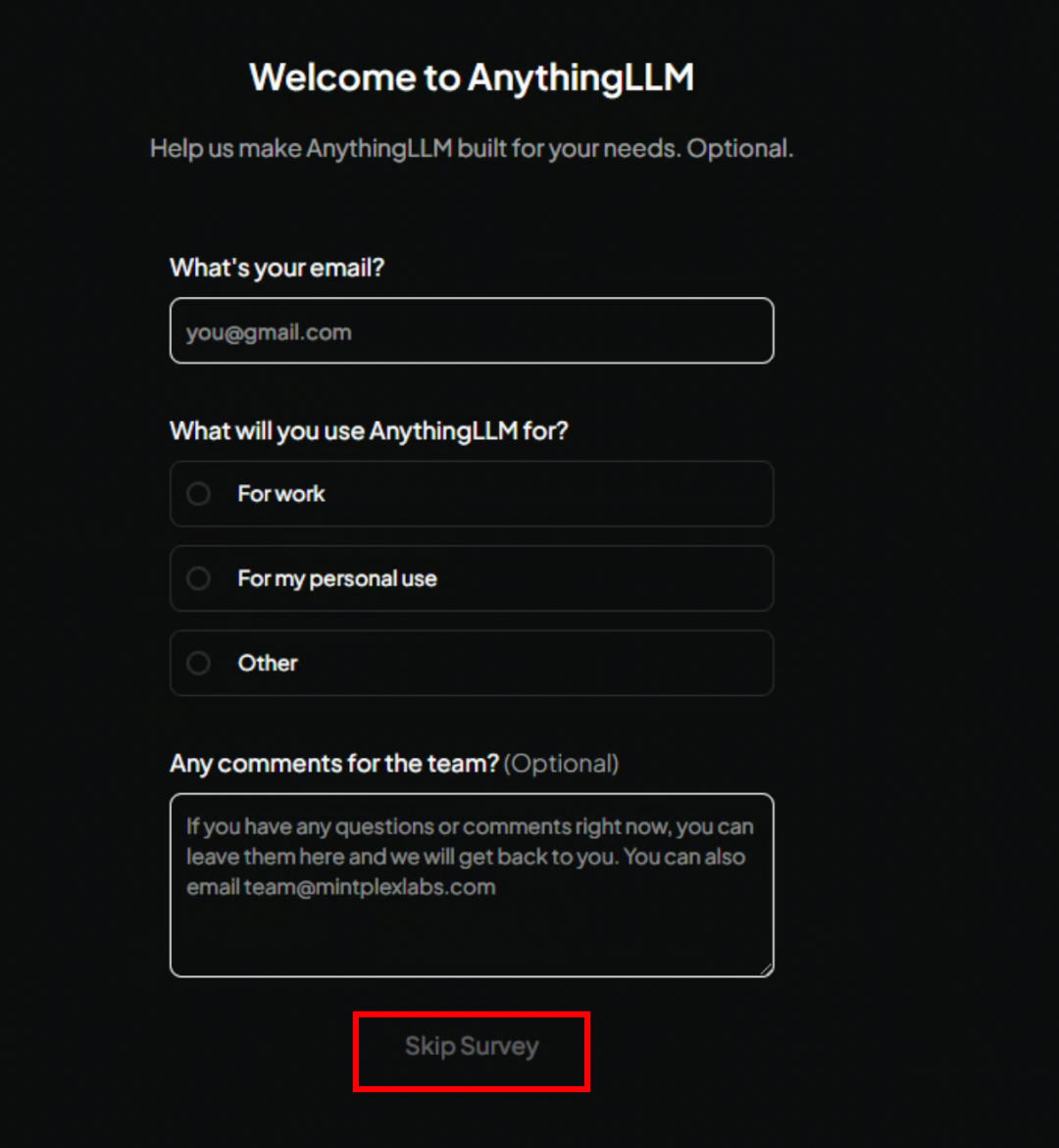

The next step is the privacy screen, which explains how your data is stored and used. It's all boilerplate, so just skip to the next step.

This step is the welcome screen, nothing special, just skip it!

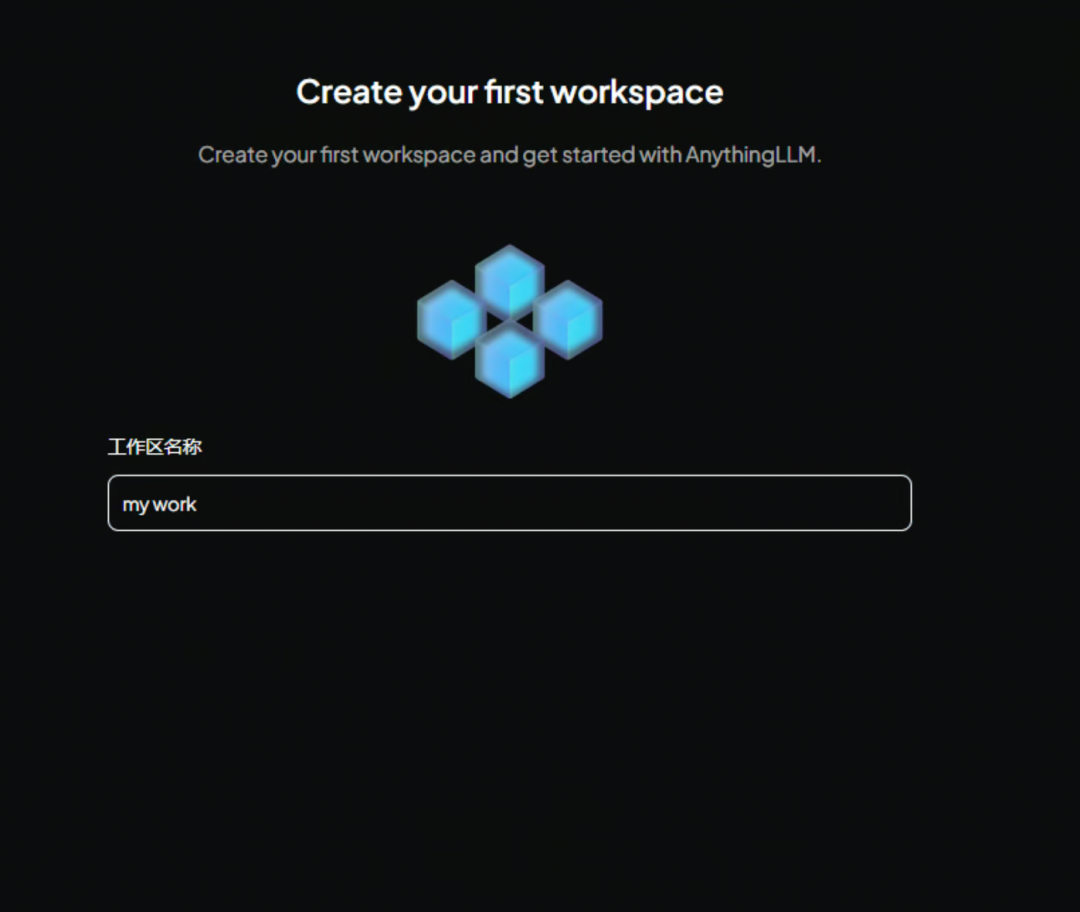

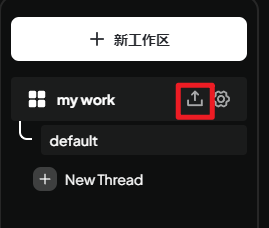

Create a name for the workspace. It's recommended that you use English, because it will come in handy if you want to use the API to call it later.

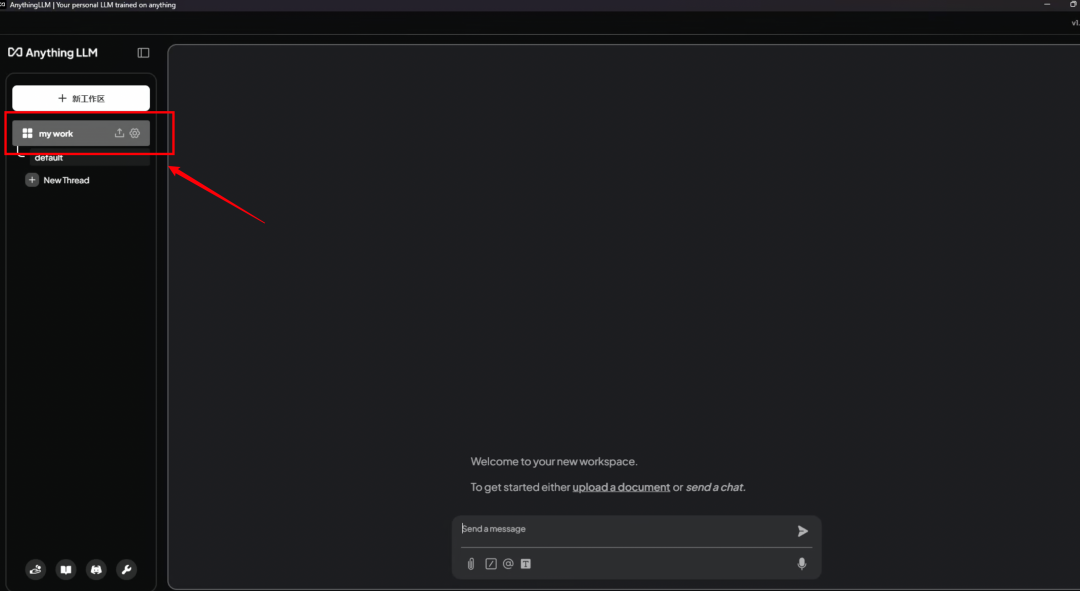

You are now in the AnythingLLM main interface. It walks you through the basics with a sample conversation.

Click on the workspace we just named on the left to enter a new dialog.

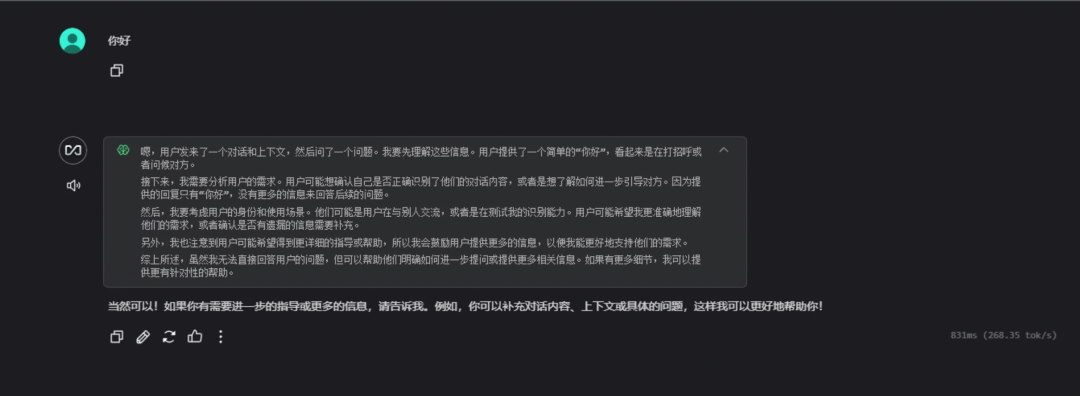

Here's a simple dialog to test if the model call was successful.

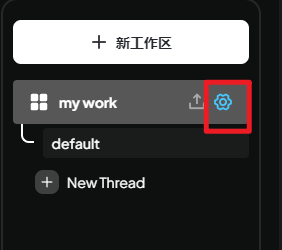

Workspace Setup

Click the Setup button on the right side of the workspace to enter the Setup interface.

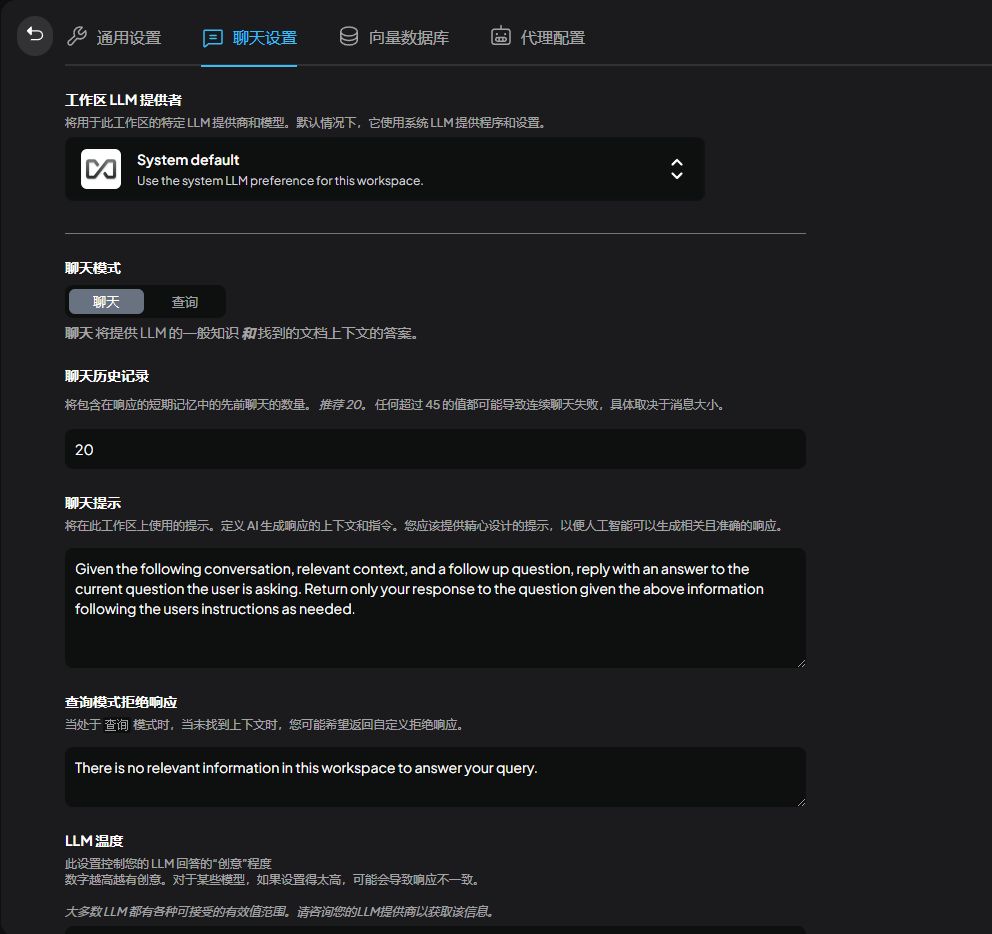

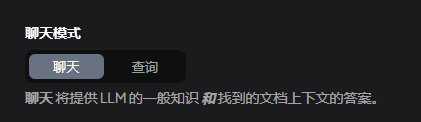

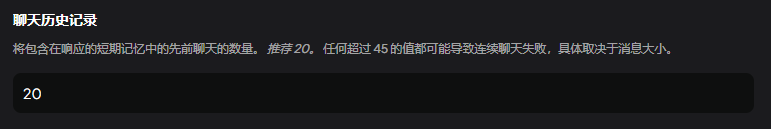

Go to the chat settings, where we can tweak the model in detail

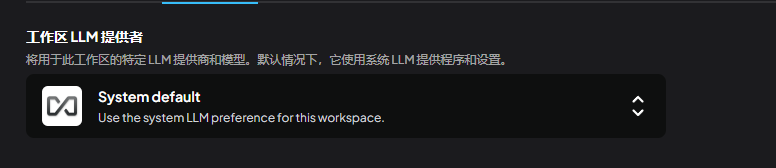

This is where you change the large model currently in use. By default it uses the system-wide setting, which is the one we configured earlier (Ollama).

You can also choose other local big models or API services.

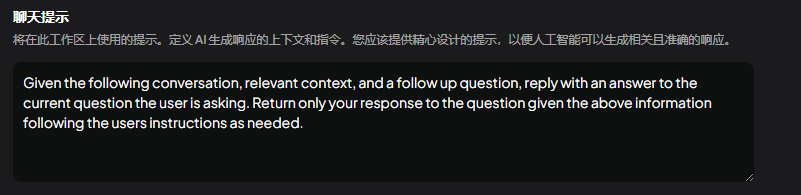

There are two modes, chat and query. Query uses only the context-query function (you need to have uploaded your own documents), while chat uses the chat function plus query.

Number of contexts

The prompt can be understood as a persona (system) prompt; you can rewrite it in Chinese or keep the default.

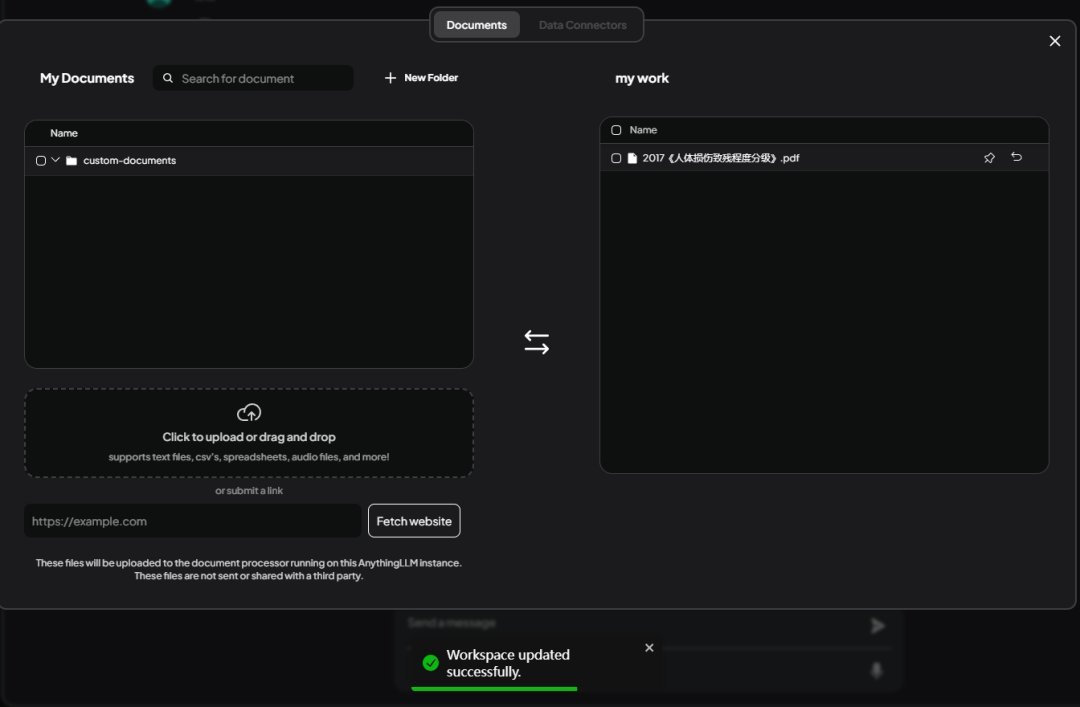

Feeding information

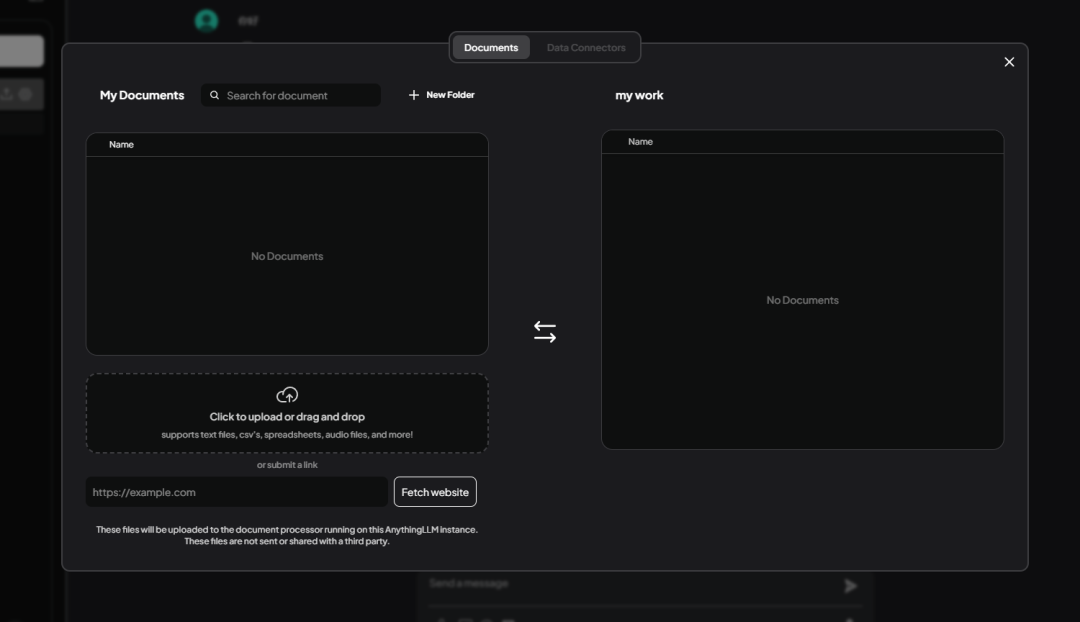

Next, you need to feed the knowledge base, click on the workspace next to the上传按钮

Access to the Knowledge Base Management interface

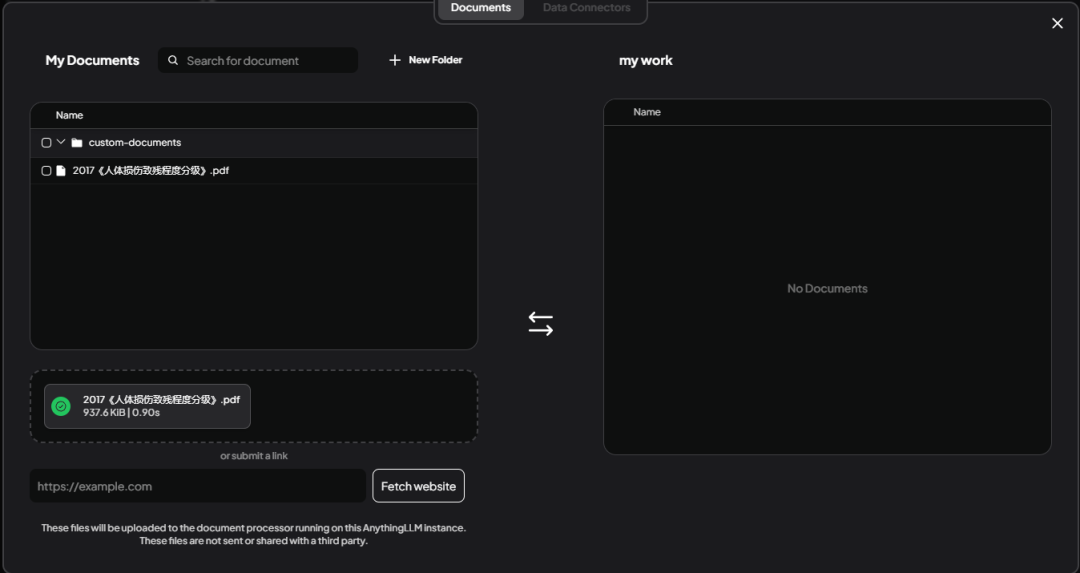

Supports batch uploading, supports Excel, world, txt and other file formats. Here I uploaded a《人体损伤致残程度分级》.pdfThe

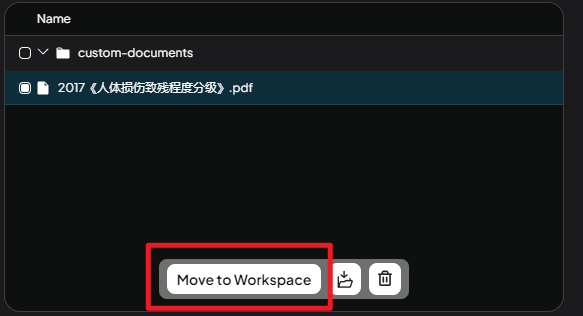

Select the file and clickMove to WorkSpaceThis step adds files from the temporary area to the workspace.

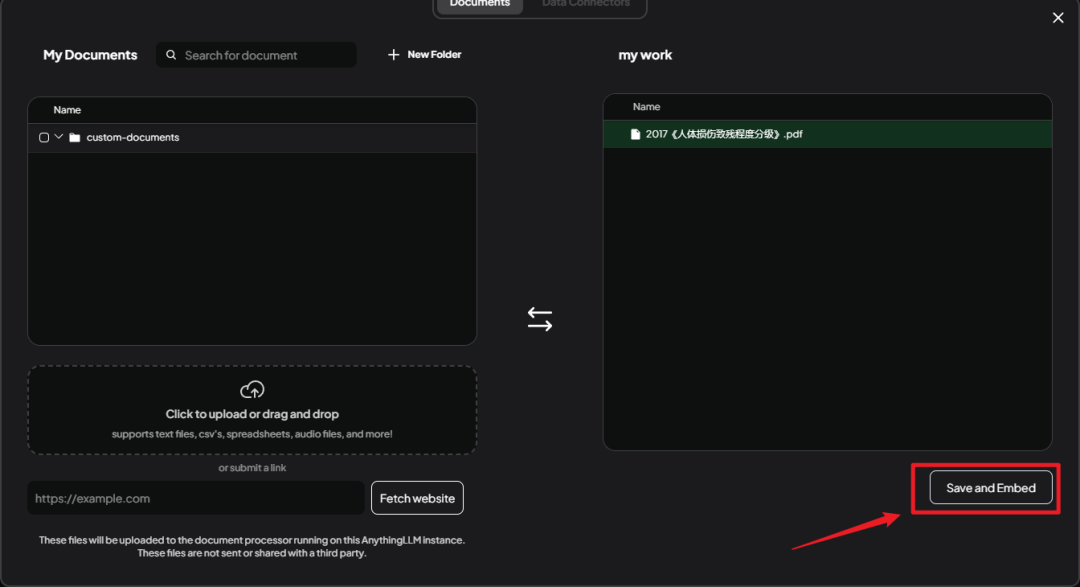

You can see the uploaded files transferred to the right, here click on theSace and EmbedMake a save.

Tips belowWorkSpace updated successfullyIndicates a successful save.

Here you need to clickPin to workspaceThis button.

Pin to workspace::

If, when using AnythingLLM, you find that you are not getting satisfactory answers by default, you can improve the quality of your answers by pinning documents. This is a quick way to improve the accuracy and relevance of your answers.

Simply put, document pinning is the process of "feeding" the content of a document directly to the language model so that it can refer to that content when answering a question and provide a better answer.
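
To make the difference concrete, here is a toy illustration of the idea. This is not AnythingLLM's implementation: the word-overlap scoring and the function names are my own simplifications. Without pinning, only the best-matching chunks reach the model; with pinning, the whole document does.

```python
from typing import List


def top_k_chunks(question: str, chunks: List[str], k: int = 1) -> List[str]:
    """Toy retrieval: rank chunks by how many words they share with the question."""
    question_words = set(question.lower().split())

    def score(chunk: str) -> int:
        return len(question_words & set(chunk.lower().split()))

    return sorted(chunks, key=score, reverse=True)[:k]


def build_context(question: str, chunks: List[str], pinned: bool, k: int = 1) -> str:
    """Context handed to the model: every chunk if pinned, else only the top-k matches."""
    selected = chunks if pinned else top_k_chunks(question, chunks, k)
    return "\n".join(selected)


chunks = [
    "ribs: two or more fractures are minor injury grade 2",
    "liver: severe damage is serious injury grade 1",
]
retrieved = build_context("two rib fractures", chunks, pinned=False)
pinned = build_context("two rib fractures", chunks, pinned=True)
```

The trade-off is that a pinned document takes up context window on every question, so pinning suits documents that are short enough to fit.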

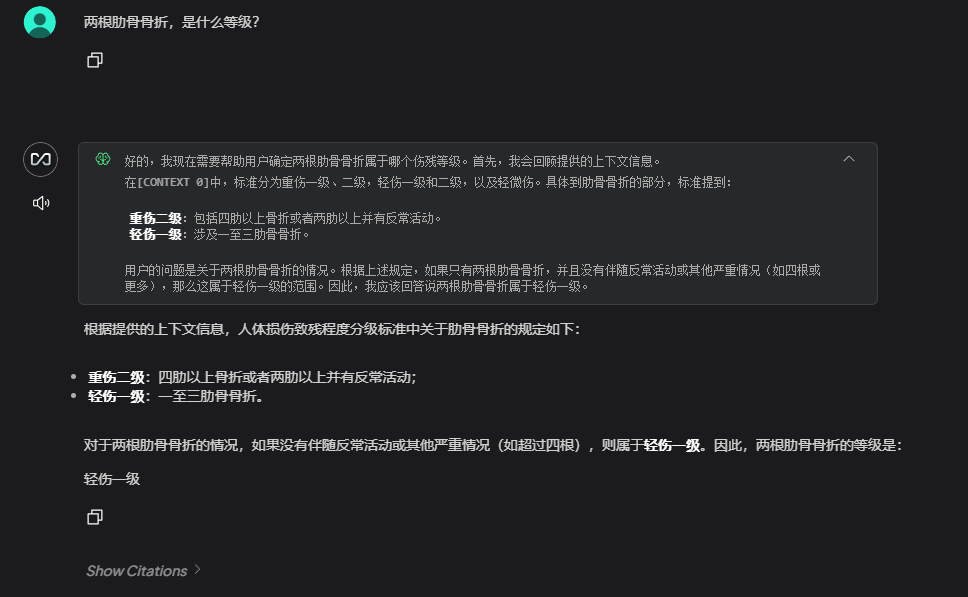

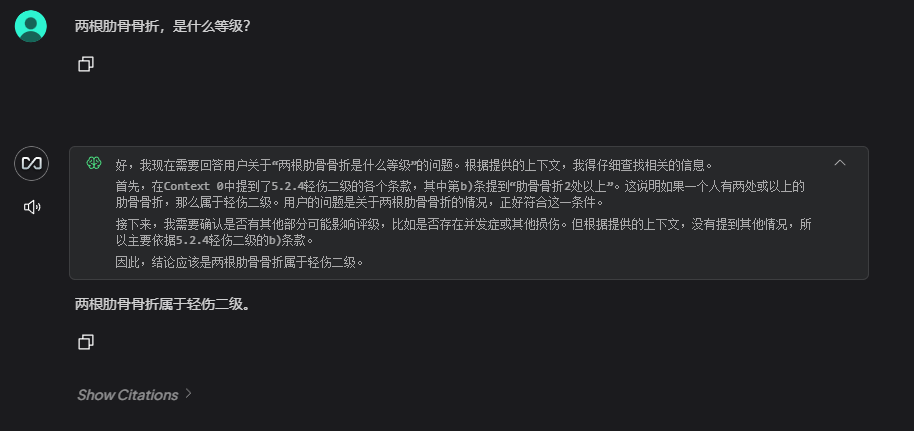

Then we go back to the Conversations page, create a new conversation, ask a question, and verify that the knowledge base was added successfully.

Error resolution

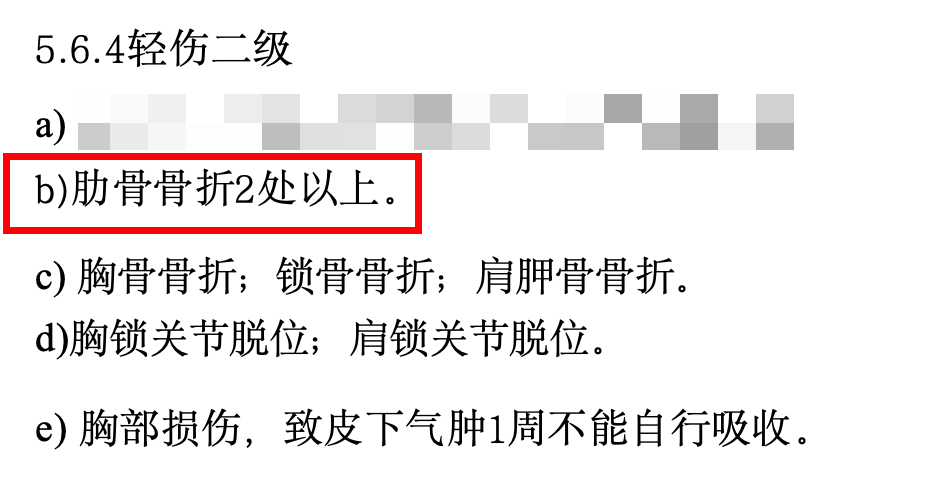

You can see that DeepSeek has answered the question in conjunction with the uploaded information, but there are errors in the answer.

For example, the first question:

Ask:

What is the grade for two broken ribs?

Answer:

Based on the contextual information provided, the Human Injury Disability Classification Standards for rib fractures are as follows:

Serious Injury Grade 2: Fracture of more than four ribs or more than two ribs with paradoxical movement;

Minor injuries of the first degree: fractures of the first to third ribs.

In the case of a two rib fracture that is not accompanied by paradoxical activity or other serious conditions (e.g., more than four), it is classified as a minor first-degree injury. Thus, two rib fractures are graded:

minor injuries (first degree)

However, according to the uploaded file, two broken ribs are classified as a minor injury of the second degree (轻伤二级). For a legal-advice application, this is a rather serious error.

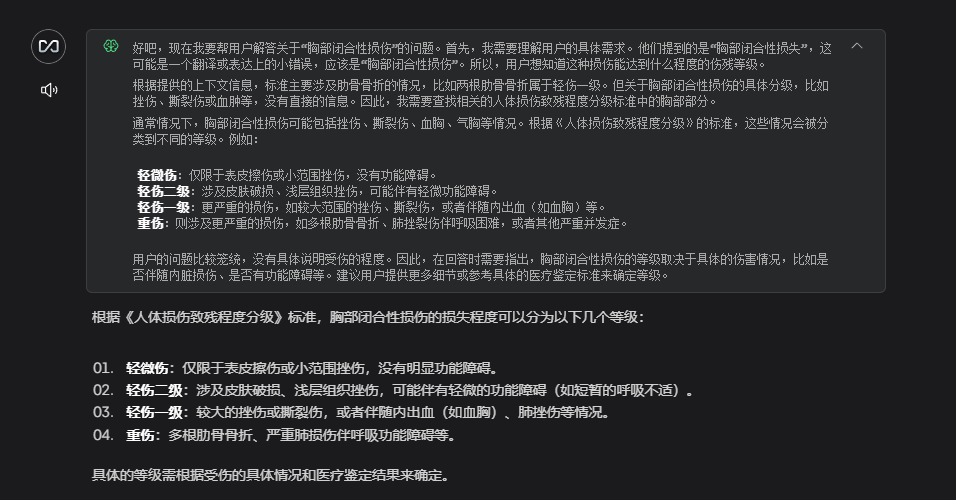

There's no need to panic when the model gets an answer wrong like this; let's troubleshoot the cause.

I took a closer look at the uploaded PDF file and realized that it contained a lot of tables, special formatting, and a whole lot of unused content. These "impurities" affect the AI's ability to understand what we really want it to learn.

All this cluttered formatting keeps the AI from getting to the point, much like a messy book does for us. So I reorganized the key content into a concise Word document, deleted the unused content, and fed it to the AI again.
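
I did this cleanup by hand, but part of it can be scripted. Below is a minimal sketch, assuming you have already extracted the document to plain text. It collapses stray whitespace, drops empty lines, and repairs section numbers that were split by a space (such as "5.7. 1"). The rules are my own guesses at common extraction noise, so check the output before uploading.

```python
import re


def clean_extracted_text(text: str) -> str:
    """Tidy plain text extracted from a PDF before feeding it to the knowledge base."""
    cleaned = []
    for line in text.splitlines():
        line = re.sub(r"\s+", " ", line).strip()      # collapse runs of whitespace
        line = re.sub(r"(?<=\d\.) (?=\d)", "", line)  # "5.7. 1" -> "5.7.1"
        if line:                                      # drop empty lines
            cleaned.append(line)
    return "\n".join(cleaned)


sample = "5.7. 1重伤一级\n\n\n  a)肝功能损害(重度)。  "
cleaned_sample = clean_extracted_text(sample)
```

Tables are the harder part: a script like this won't restructure them, so rewriting the key tables as plain sentences is still worth doing manually.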

An excerpt from the contents of the organized document:

5.6.4轻伤二级

b)肋骨骨折2处以上。

c) 胸骨骨折;锁骨骨折;肩胛骨骨折。

d)胸锁关节脱位;肩锁关节脱位。

e) 胸部损伤,致皮下气肿1周不能自行吸收。

f) 胸腔积血;胸腔积气。

g)胸壁穿透创。

h)胸部挤压出现窒息征象。

5.6.5轻微伤

a)肋骨骨折;肋软骨骨折。

5.7 腹部损伤

5.7. 1重伤一级

a)肝功能损害(重度)。

b)胃肠道损伤致消化吸收功能严重障碍,依赖肠外营养。

c) 肾功能不全(尿毒症期)。

I tried the question again, and this time the answer was much more accurate!

This is just the simplest fix. If you want the AI to answer more accurately, you can also try adjusting the document format, optimizing the retrieval method, tuning the model parameters, and so on. We'll cover those advanced techniques later.

Connecting to the WeChat BOT

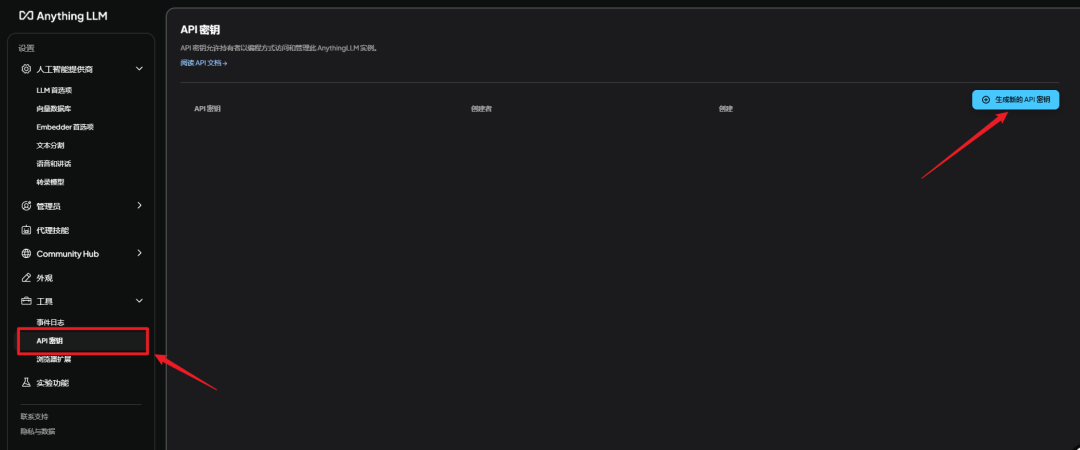

Get the AnythingLLM secret key

Open the AnythingLLM interface and go to Settings - API Secret Key - Generate New API Secret Key.

Using the Integration Pack

I've integrated the AnythingLLM interface into NGCBOT and bundled it as an integration pack.

Unzip NGCBOT_LLM.zip from the cloud drive link, then double-click 启动器.exe (the launcher) to run it.

There are three things you need to do before using it!

There are three things you need to do before using it!

There are three things you need to do before using it!

First, make sure the integration pack's directory path contains no Chinese characters; a path with Chinese characters will cause errors!

Correct example

F:\AI\AIpackage\NGCBot

Incorrect example

F:\微信BOT\AIpackage\NGCBot
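
If you're not sure whether your path is safe, a few lines of Python can check it. This is just a convenience sketch of mine, not part of the integration pack. It flags any path component that contains non-ASCII characters, which covers Chinese.

```python
from pathlib import PureWindowsPath
from typing import List


def non_ascii_parts(path: str) -> List[str]:
    """Return the components of a Windows path that contain non-ASCII characters."""
    return [part for part in PureWindowsPath(path).parts if not part.isascii()]


good = non_ascii_parts(r"F:\AI\AIpackage\NGCBot")     # nothing flagged
bad = non_ascii_parts(r"F:\微信BOT\AIpackage\NGCBot")  # flags the Chinese folder name
```

An empty list means the path is fine; otherwise rename or move the folders it reports.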

Second, install the specified version of WeChat.

The cloud drive link includes this WeChat version:

WeChatSetup-3.9.10.27.exe

Install this version. If WeChat is already installed on the computer, there's no need to uninstall it; just install over it.

Third, modify the NGCBOT configuration file.

Find the Config\Config.yaml configuration file and edit it.

There are three changes to the configuration file

1. Modify the super administrator configuration.

Fill in the WeChat ID you want to use as administrator here. Remember to change it! Otherwise everything gets sent to me ....

How do you get this ID? From the WeChat account you want to set as administrator, send a message to the bot; the sender's ID then appears in the console.

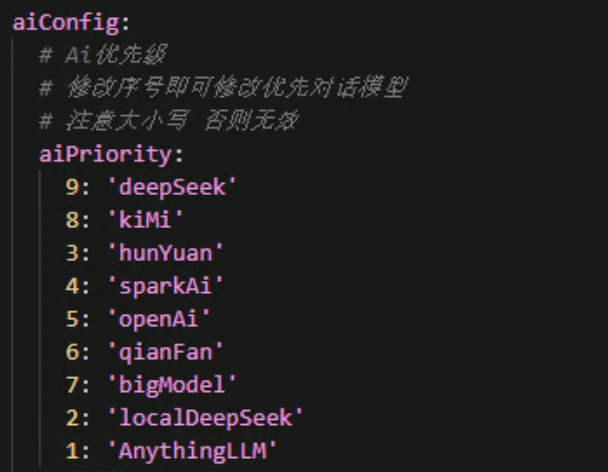

2. Modify the AI priority configuration.

This sets the order in which the AI interfaces are tried; the smaller the number, the higher the priority. By default, AnythingLLM comes first.

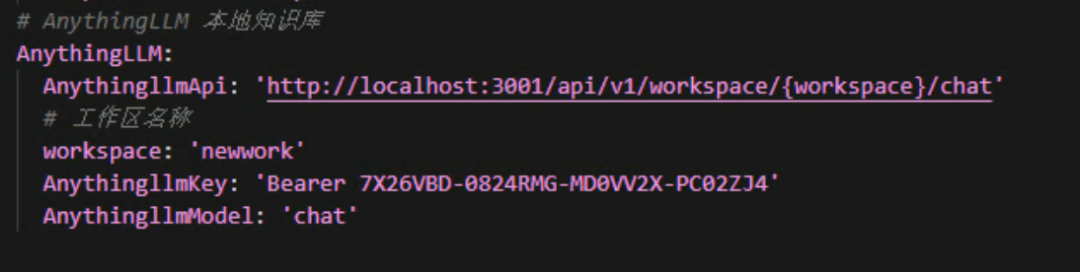

3. AnythingLLM configuration.

Here you only need to change two values: workspace and AnythingLLMkey.

workspace: the name of the workspace you created in AnythingLLM, in its lowercase form.

AnythingLLMkey: the secret key you obtained from AnythingLLM, not the one from SiliconFlow or Volcano Ark!

Remember to keep the Bearer prefix in front of it.
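
These two values are the ones people most often get wrong, so here is a small sanity check you could run on them. It is a sketch of my own, not part of NGCBOT. The set of characters allowed in the workspace name (lowercase letters, digits, hyphen, underscore) is an assumption.

```python
import re
from typing import List


def check_anythingllm_settings(workspace: str, key: str) -> List[str]:
    """Return a list of problems found in the two AnythingLLM settings."""
    problems = []
    # Assumed rule: the workspace name is lowercase ASCII without spaces.
    if not re.fullmatch(r"[a-z0-9_-]+", workspace):
        problems.append("workspace should be the lowercase workspace name, ASCII only")
    if not key.startswith("Bearer "):
        problems.append("key must keep the 'Bearer ' prefix")
    elif not key[len("Bearer "):].strip():
        problems.append("key is empty after the 'Bearer ' prefix")
    return problems


ok = check_anythingllm_settings("newwork", "Bearer YOUR-ANYTHINGLLM-KEY")  # placeholder key
broken = check_anythingllm_settings("NewWork", "YOUR-ANYTHINGLLM-KEY")
```

An empty list means both values look plausible; it cannot tell you whether the key itself is valid.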

After making the changes above, double-click 启动器.exe and you're ready to have fun!

See the end of the article for how to get the integration pack.

Congratulations! Anyone who sees this is 👍🏻👍🏻

If you just want to get up and running with a knowledge base bot quickly, you can stop here.

The rest is written for readers who want to dig deeper:

- How to Call AnythingLLM Interface

- How to Extend NGCBOT Functionality

Read on if you're interested

Development and extension

Interface call

This part is for those who want to develop and extend things further. If you are a beginner and just want to use the integration pack, skip it.

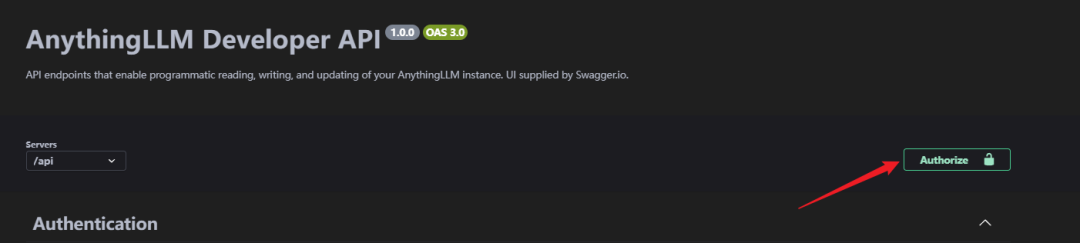

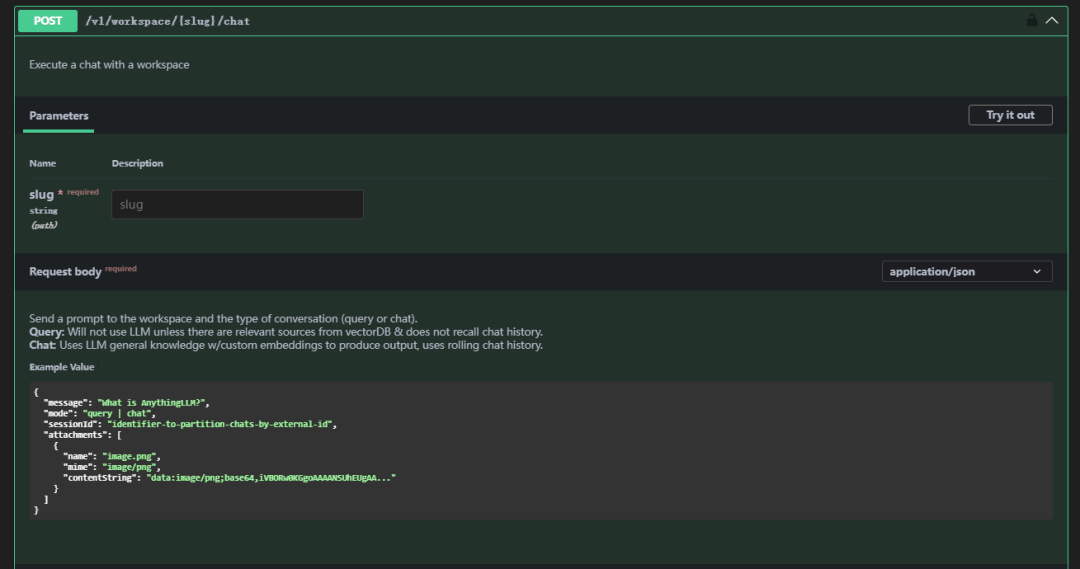

OK, let's get a little more advanced. Browser inputs[http://localhost:3001/api/docs/](http://localhost:3001/api/docs/)Go to the API page.

Here, since the original official API documentation cannot be accessed on the web, it has been changed to local access to the API documentation.

After entering the API description page, you can see that there are various interfaces here. And it supports debugging directly on this page.

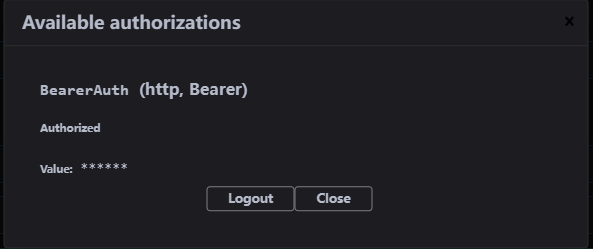

Before we can start accessing it, we need to authorize the secret key by clicking on theAuthorizeThe

Paste AnythingLLMAPI secret key into the open interface, the authorization is successful!

Then go down and find/v1/workspace/{slug}/chatThis API

Parameter description

| Parameter | Meaning | Notes |
|---|---|---|
| slug | Workspace | Lowercase only; Chinese characters, uppercase letters, and other special characters are rejected |
| message | Message | The message you want to send |
| mode | Mode | Either chat or query. query uses only the context-query function (you need to have uploaded your own documents); chat uses the chat function |

Example of a request (Request body)

    curl --location 'http://localhost:3001/api/v1/workspace/newwork/chat' \
    --header 'Authorization: Bearer 7X26VBD-0824RMG-MD0VV2X-PC02ZJ4' \
    --header 'Content-Type: application/json' \
    --data '{
        "message": "两根肋骨骨折是什么等级?",
        "mode": "chat"
    }'
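
The same request can be made from Python. Here is a minimal sketch using only the standard library. The function name is mine, the key is a placeholder, and the request is built but not sent, so you can inspect it first.

```python
import json
import urllib.request


def build_workspace_chat_request(slug: str, api_key: str, message: str,
                                 mode: str = "chat",
                                 base_url: str = "http://localhost:3001") -> urllib.request.Request:
    """Build a POST request for the /api/v1/workspace/{slug}/chat endpoint."""
    if mode not in ("chat", "query"):
        raise ValueError("mode must be 'chat' or 'query'")
    return urllib.request.Request(
        url=f"{base_url}/api/v1/workspace/{slug}/chat",
        data=json.dumps({"message": message, "mode": mode}).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


chat_req = build_workspace_chat_request(
    slug="newwork",
    api_key="YOUR-ANYTHINGLLM-KEY",  # placeholder: use the key generated in AnythingLLM
    message="两根肋骨骨折是什么等级?",
)

# To send it (AnythingLLM must be running locally):
# with urllib.request.urlopen(chat_req, timeout=300) as resp:
#     print(json.load(resp)["textResponse"])
```

The generous timeout is deliberate: R1 spends a while "thinking" before it answers, especially on a local machine.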

Responses

    {
        "id": "488ff13e-0206-4014-bf50-ea2d8e78c1c3",
        "type": "textResponse",
        "close": true,
        "error": null,
        "chatId": 17,
        "textResponse": "<think>\n好的,我现在需要解决用户的问题:“两根肋骨骨折是什么等级?” 用户之前已经询问过这个问题,并且我给出了一个回答,但现在他再次提问,可能希望得到更详细或不同的解释。\n\n首先,回顾一下上下文。在提供的Context 0中,5.6.4轻伤二级部分提到了b)肋骨骨折2处以上属于轻伤二级。因此,两根肋骨骨折符合这一标准,应定级为轻伤二级。\n\n用户可能是一名法律工作者、家属或受伤者本人,想要了解骨折的严重程度以便处理工伤赔偿或其他相关事宜。他们可能对分级标准不太熟悉,所以才会多次询问。\n\n在思考过程中,我需要确认是否还有其他相关的评定标准会影响结果。例如,是否有特殊情况如骨折的位置、是否伴随内脏损伤等,这些因素可能会改变评定等级。但根据提供的Context,没有提到这些情况,因此只能基于给出的信息进行判断。\n\n此外,用户可能希望知道如何申请工伤认定或后续的赔偿流程,但由于信息有限,我无法提供具体步骤,但可以建议他们联系劳动保障部门或医疗机构获取专业指导。\n\n总结来说,两根肋骨骨折属于轻伤二级,这是根据提供的标准得出的结论。用户可能需要进一步的法律或医疗咨询来处理相关事宜。\n</think>\n\n根据您提供的上下文信息:\n\n在 **5.6.4 轻伤二级** 中提到:\n- **b) 肋骨骨折2处以上**。\n\n因此,**两根肋骨骨折属于轻伤二级**。",
        "sources": [
            {
                "text": "5.2.4轻伤二级\n\na)面部单个创口或者瘢痕长度4.5cm 以上;多个创口或者瘢痕长度累计6.0cm 以上。\n\nb)面颊穿透创,皮肤创口或者瘢痕长度1.0cm以上。\n\nc)口唇全层裂创,皮肤创口或者瘢痕长度1.0cm 以上。\n\nd) 面部块状瘢痕,单块面积3.0cm²以上或多块面积累计5.0cm²以上。\n\ne) 面部片状细小瘢痕或者色素异常,面积累计8.0cm²以上。f)眶壁骨折(单纯眶内壁骨折除外)。\n\ng)眼睑缺损。\n\nh) 一侧眼睑轻度外翻。\n\ni) 一侧上眼睑下垂覆盖瞳孔。\n\nj) 一侧眼睑闭合不全。\n\nk)一侧泪器损伤伴溢泪。\n\n1)耳廓创口或者瘢痕长度累计6.0cm以上。\n\nm)耳廓离断、缺损或者挛缩畸形累计相当于一侧耳廓面积15%以上。n)鼻尖或者一侧鼻翼缺损。\n\no) 鼻骨粉碎性骨折;双侧鼻骨骨折;鼻骨骨折合并上颌骨额突骨折;鼻骨骨折合并鼻中隔骨折;双侧上颌骨额突骨折。\n\np) 舌缺损。\n\nq) 牙齿脱落或者牙折2枚以上。\n\nr) 腮腺、颌下腺或者舌下腺实质性损伤。\n\ns) 损伤致张口困难I 度 。\n\nt)颌骨骨折(牙槽突骨折及一侧上颌骨额突骨折除外)。u)颧骨骨折。\n\n5.2.5轻微伤\n\n\n\n\n\n\n\n\n\n\na)面部软组织创。\n\nb)面部损伤留有瘢痕或者色素改变。\n\nc) 面部皮肤擦伤,面积2.0cm²以上;面部软组织挫伤;面部划伤4.0cm以上。\n\nd)眶内壁骨折。\n\ne)眼部挫伤;眼部外伤后影响外观。\n\nf) 耳廓创。\n\ng) 鼻骨骨折;鼻出血。h)上颌骨额突骨折。\n\ni) 口腔粘膜破损;舌损伤。\n\nj) 牙齿脱落或者缺损;牙槽突骨折;牙齿松动2枚以上或者Ⅲ度松动1枚以上。\n\n5.3 听器听力损伤\n\n5.3. 1重伤一级\n\na) 双耳听力障碍(≥91dB HL)。\n\n5.3.2重伤二级\n\na) 一耳听力障碍(≥91dB HL)。\n\nb) 一耳听力障碍(≥81dB HL),另一耳听力障碍(≥41dB HL)。\n\nc) 一耳听力障碍(≥81dB HL),伴同侧前庭平衡功能障碍。\n\nd) 双耳听力障碍(≥61dB HL)。\n\ne) 双侧前庭平衡功能丧失,睁眼行走困难,不能并足站立。\n\n5.3.3轻伤一级\n\na)双耳听力障碍(≥41dB HL)。\n\nb)双耳外耳道闭锁。\n\n5.3.4轻伤二级\n\na) 外伤性鼓膜穿孔6周不能自行愈合。b...continued on in source document...",
                "id": "210f98f0-d656-4c0f-b3d5-d617f6398eca",
                "url": "file://C:\\Users\\XX\\AppData\\Roaming\\anythingllm-desktop\\storage\\hotdir\\新建 DOCX 文档.docx",
                "title": "新建 DOCX 文档.docx",
                "docAuthor": "no author found",
                "description": "No description found.",
                "docSource": "pdf file uploaded by the user.",
                "chunkSource": "localfile://C:\\Users\\XX\\Desktop\\新建 DOCX 文档.docx",
                "published": "2025/2/7 13:00:52",
                "wordCount": 126,
                "token_count_estimate": 3870
            }
        ],
        "metrics": {
            "prompt_tokens": 431,
            "completion_tokens": 326,
            "total_tokens": 757,
            "outputTps": 63.178294573643406,
            "duration": 5.16
        }
    }
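
A response like this carries more than the answer, so here is a small sketch of how you might unpack it. The field names (textResponse, sources, metrics) come from the response above; the helper names and the tiny sample dictionary are mine.

```python
from typing import Tuple


def split_reasoning(text_response: str) -> Tuple[str, str]:
    """Split an R1-style reply into (reasoning, answer) at the closing </think> tag."""
    if "</think>" not in text_response:
        return "", text_response.strip()
    reasoning, answer = text_response.split("</think>", 1)
    return reasoning.replace("<think>", "", 1).strip(), answer.strip()


def summarize_response(data: dict) -> dict:
    """Pick out the parts of the chat response that a bot usually needs."""
    reasoning, answer = split_reasoning(data.get("textResponse") or "")
    return {
        "answer": answer,
        "reasoning": reasoning,
        "sources": [source.get("title") for source in data.get("sources", [])],
        "total_tokens": data.get("metrics", {}).get("total_tokens"),
    }


# A shortened, made-up response with the same shape as the real one above.
sample_response = {
    "textResponse": "<think>\nchecking section 5.6.4\n</think>\n\nMinor injury, grade 2.",
    "sources": [{"title": "doc.docx"}],
    "metrics": {"total_tokens": 757},
}
summary = summarize_response(sample_response)
```

Keeping the source titles around is useful for a legal-style bot, since you can show users which document the answer was based on.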

With that, we have finished testing calls to the AnythingLLM interface. You can build your own business logic on top of this interface. Below, we combine it with the WeChat BOT to build a knowledge base robot.

NGC Modifications

In NGCBOT, the logic for calling AnythingLLM needs to be added to the ApiServer\AiServer\AiDialogue.py script.

Here I'll just put the code.

    import requests  # third-party HTTP client; belongs at the top of AiDialogue.py

    def getAnythingLLM(self, content, messages):
        """
        Local AnythingLLM model.
        :param content: conversation content (the user's message)
        :param messages: message list (conversation history)
        :return: (assistant reply, updated message list)
        """
        # op is NGCBOT's console output helper
        op(f'[*]: 正在调用本地AnythingLLM对话接口... ...')
        messages.append({"role": "user", "content": f'{content}'})
        data = {
            "model": self.anythingLLMConfig.get('anythingllmModel'),
            "message": content
        }
        headers = {
            "Content-Type": "application/json",
            "Authorization": self.anythingLLMConfig.get('anythingllmKey')
        }
        try:
            resp = requests.post(
                url=self.anythingLLMConfig.get('anythingllmApi').format(workspace=self.anythingLLMConfig.get('workspace')),
                headers=headers,
                json=data,
                timeout=300
            )
            resp.encoding = 'utf-8'
            json_data = resp.json()
            assistant_content = json_data['textResponse']
            # Drop the <think>...</think> reasoning and keep only the final answer
            if "</think>" in assistant_content:
                assistant_content = assistant_content.split("</think>")[1].strip()
            messages.append({"role": "assistant", "content": f"{assistant_content}"})
            # At 21 entries, drop the oldest user/assistant pair and keep the
            # system message at index 0. (Deleting index 1 and then index 2
            # would remove two user messages instead of one pair.)
            if len(messages) == 21:
                del messages[1:3]
            return assistant_content, messages
        except Exception as e:
            op(f'[-]: 本地AnythingLLM对话接口出现错误, 错误信息: {e}')
            return None, [{"role": "system", "content": f'{self.systemAiRole}'}]
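
The history trimming is the easiest part of this function to get wrong, so here it is as a standalone sketch you can test on its own. The helper name and the max_len parameter are mine. It assumes messages[0] is the system message and the rest alternate between user and assistant.

```python
from typing import Dict, List


def trim_history(messages: List[Dict[str, str]], max_len: int = 21) -> List[Dict[str, str]]:
    """Drop the oldest user/assistant pairs until the history is shorter than max_len.

    messages[0] (the system message) is always kept.
    """
    if max_len < 3:
        raise ValueError("max_len must be at least 3")
    trimmed = list(messages)  # work on a copy so the caller's list is untouched
    while len(trimmed) >= max_len:
        del trimmed[1:3]  # remove the oldest user message and its reply together
    return trimmed


# Build a history of one system message plus 10 question/answer pairs (21 entries).
history = [{"role": "system", "content": "persona"}]
for i in range(10):
    history.append({"role": "user", "content": f"q{i}"})
    history.append({"role": "assistant", "content": f"a{i}"})

shorter = trim_history(history)
```

Removing messages in pairs keeps every remaining question next to its own answer, which matters if you later replay the history to a model.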

Add the anythingLLMConfig:

    self.anythingLLMConfig = {
        'anythingllmApi': configData['apiServer']['aiConfig']['AnythingLLM']['AnythingllmApi'],
        'anythingllmKey': configData['apiServer']['aiConfig']['AnythingLLM']['AnythingllmKey'],
        'anythingllmModel': configData['apiServer']['aiConfig']['AnythingLLM']['AnythingllmModel'],
        'workspace': configData['apiServer']['aiConfig']['AnythingLLM']['workspace']
    }
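
To see how these lookups fit together, here is a sketch of the nested structure the code expects, written as a plain Python dictionary. The key names come from the code above, but every value is a placeholder of mine. In particular, the URL template with a {workspace} placeholder is inferred from the .format(workspace=...) call and the curl example, and the AnythingllmModel value is purely hypothetical.

```python
# Placeholder values only; the real ones live in Config\Config.yaml.
config_data = {
    "apiServer": {
        "aiConfig": {
            "AnythingLLM": {
                # Assumed template: {workspace} is filled in by str.format below
                "AnythingllmApi": "http://localhost:3001/api/v1/workspace/{workspace}/chat",
                "AnythingllmKey": "Bearer YOUR-ANYTHINGLLM-KEY",
                "AnythingllmModel": "your-model-value",  # hypothetical value
                "workspace": "newwork",
            }
        }
    }
}

section = config_data["apiServer"]["aiConfig"]["AnythingLLM"]
chat_url = section["AnythingllmApi"].format(workspace=section["workspace"])
```

If the resulting URL doesn't match the one you tested on the API page, the workspace name is the first thing to check.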

Integration pack acquisition

Tootsie Labs - Windows Edition

Quark:

https://pan.quark.cn/s/8d1293227cf9

Baidu:

https://pan.baidu.com/s/1wx8LmbY2XBaJAAJvmGK06g?pwd=8d5m

© Copyright notes

Article copyright belongs to AI Sharing Circle. Please do not reproduce without permission.
