Summary

Four artificial intelligence systems (ELIZA, GPT-4o, LLaMa-3.1-405B, and GPT-4.5) were evaluated in two recent randomized controlled Turing tests run on independent populations. The study, led by Cameron R. Jones and Benjamin K. Bergen at the University of California, San Diego, was designed to assess how well the systems imitate human conversation. When prompted to adopt a humanlike persona, GPT-4.5 was judged to be human 73% of the time, significantly more often than interrogators picked the real human participant. The authors present this as the first empirical evidence that an AI system has passed a standard three-party Turing test.

Background of the study

The Turing test was proposed by Alan Turing 75 years ago, in the form of an "imitation game", as a way to determine whether a machine is intelligent. In the three-party version of the test, a human interrogator converses simultaneously with two witnesses, one human and one machine, through a text-only interface. If the interrogator cannot reliably identify the human, the machine is considered to have passed the test.

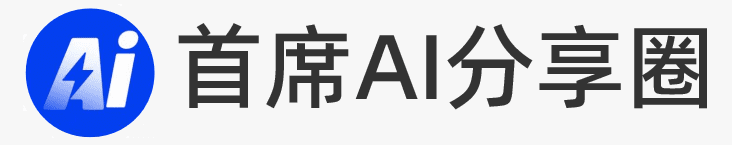

Figure 1: The Turing Test game interface, showing the ongoing conversation between the interrogator (green) and the two witnesses (gray). The top timer shows the time remaining in the game.

In recent years, the Turing test has gained renewed attention as a complementary method for evaluating AI systems. While most of the traditional AI benchmark tests are narrow and static in scope, which may lead to an over-reliance on memorization or shortcut learning, the Turing test is better able to assess the open-ended capabilities and potential weaknesses of AI due to its flexible, interactive, and adversarial nature.

Research methodology

The research team evaluated the performance of four AI systems in the role of witness:

- GPT-4.5 (OpenAI, 2023)

- LLaMa-3.1-405B (Grattafiori et al., 2024)

- GPT-4o (OpenAI et al., 2024)

- ELIZA (Weizenbaum, 1966)

The first three are Large Language Models (LLMs) that generate messages based on prompts and dialog history. The study used two types of prompts:

- NO-PERSONA prompts: Contain very simple instructions, such as "You are about to take the Turing Test. Your goal is to convince the interrogator that you are human." They also include some potentially useful context, such as the game instructions, information about the user interface, and events that occurred after the model's training cutoff.

- PERSONA prompts: Contain more detailed instructions that direct the model to adopt a specific persona, such as an introverted young person familiar with internet culture.

Figure 2: Example of a PERSONA prompt used in the Prolific study, instructing the model to adopt a specific persona, including specific instructions on tone and language.
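As a rough illustration of how the two prompt types differ, the sketch below assembles a system prompt for each condition. Only the NO-PERSONA instruction is quoted from the text above. The persona wording, the function name `build_system_prompt`, and the assumption that the PERSONA prompt also starts with the base instruction are hypothetical; the study's actual prompts are linked in the appendix.

```python
# Instruction quoted in the article for the NO-PERSONA condition.
BASE_INSTRUCTION = (
    "You are about to take the Turing Test. "
    "Your goal is to convince the interrogator that you are human."
)

# Hypothetical persona text that paraphrases the article's description.
# The study's real PERSONA prompt is longer and worded differently.
EXAMPLE_PERSONA = (
    "Adopt the persona of an introverted young person who is familiar "
    "with internet culture."
)


def build_system_prompt(prompt_type: str, context: str = "") -> str:
    """Assemble a system prompt for one of the two conditions.

    `context` stands in for the extra information mentioned in the article
    (game instructions, interface details, recent events).
    """
    if prompt_type not in ("NO-PERSONA", "PERSONA"):
        raise ValueError(f"unknown prompt type: {prompt_type}")
    parts = [BASE_INSTRUCTION]
    if prompt_type == "PERSONA":
        # Assumption: the persona instructions are added on top of the base goal.
        parts.append(EXAMPLE_PERSONA)
    if context:
        parts.append(context)
    return "\n\n".join(parts)
```

Calling `build_system_prompt("PERSONA", context="...")` returns the base instruction, the persona paragraph, and the context, separated by blank lines.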

The study was conducted in two separate populations: undergraduate psychology students at the University of California, San Diego, and participants recruited through the Prolific platform. Participants completed eight rounds of conversations; in each round, the interrogator interacted simultaneously with two witnesses, one human and one AI. The AI witnesses were divided into four groups: a control group (ELIZA, GPT-4o-NO-PERSONA), a no-persona group (GPT-4.5-NO-PERSONA, LLaMa-NO-PERSONA), a LLaMa persona group (LLaMa-PERSONA), and a GPT-4.5 persona group (GPT-4.5-PERSONA).
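The grouping of AI witnesses can be written down as a small lookup table. The group membership below comes from the paragraph above; the group keys, the helper names, and in particular the sampling rule in `sample_witness` are assumptions made for illustration and may not match how the study actually assigned witnesses to rounds.

```python
import random

# Witness groups as listed in the article (group keys are hypothetical labels).
WITNESS_GROUPS = {
    "control": ["ELIZA", "GPT-4o-NO-PERSONA"],
    "no-persona": ["GPT-4.5-NO-PERSONA", "LLaMa-NO-PERSONA"],
    "llama-persona": ["LLaMa-PERSONA"],
    "gpt-4.5-persona": ["GPT-4.5-PERSONA"],
}


def group_of(witness: str) -> str:
    """Return the group a given AI witness belongs to."""
    for group, members in WITNESS_GROUPS.items():
        if witness in members:
            return group
    raise KeyError(witness)


def sample_witness(rng: random.Random) -> str:
    """ASSUMPTION: choose a group uniformly, then a witness uniformly within it."""
    group = rng.choice(list(WITNESS_GROUPS))
    return rng.choice(WITNESS_GROUPS[group])
```

Grouping by condition rather than by model makes it easy to compare, for example, the two persona groups against the control group.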

Key findings

- Performance of GPT-4.5:

  - With the PERSONA prompt, GPT-4.5 had a win rate of 73% (69% for undergraduates and 76% for Prolific participants).

  - Interrogators had significant difficulty telling GPT-4.5-PERSONA apart from real human participants: its win rate was significantly higher than the 50% expected from random guessing.

  - This suggests that, with the PERSONA prompt, GPT-4.5 came across as human more convincingly than the real human participants did.

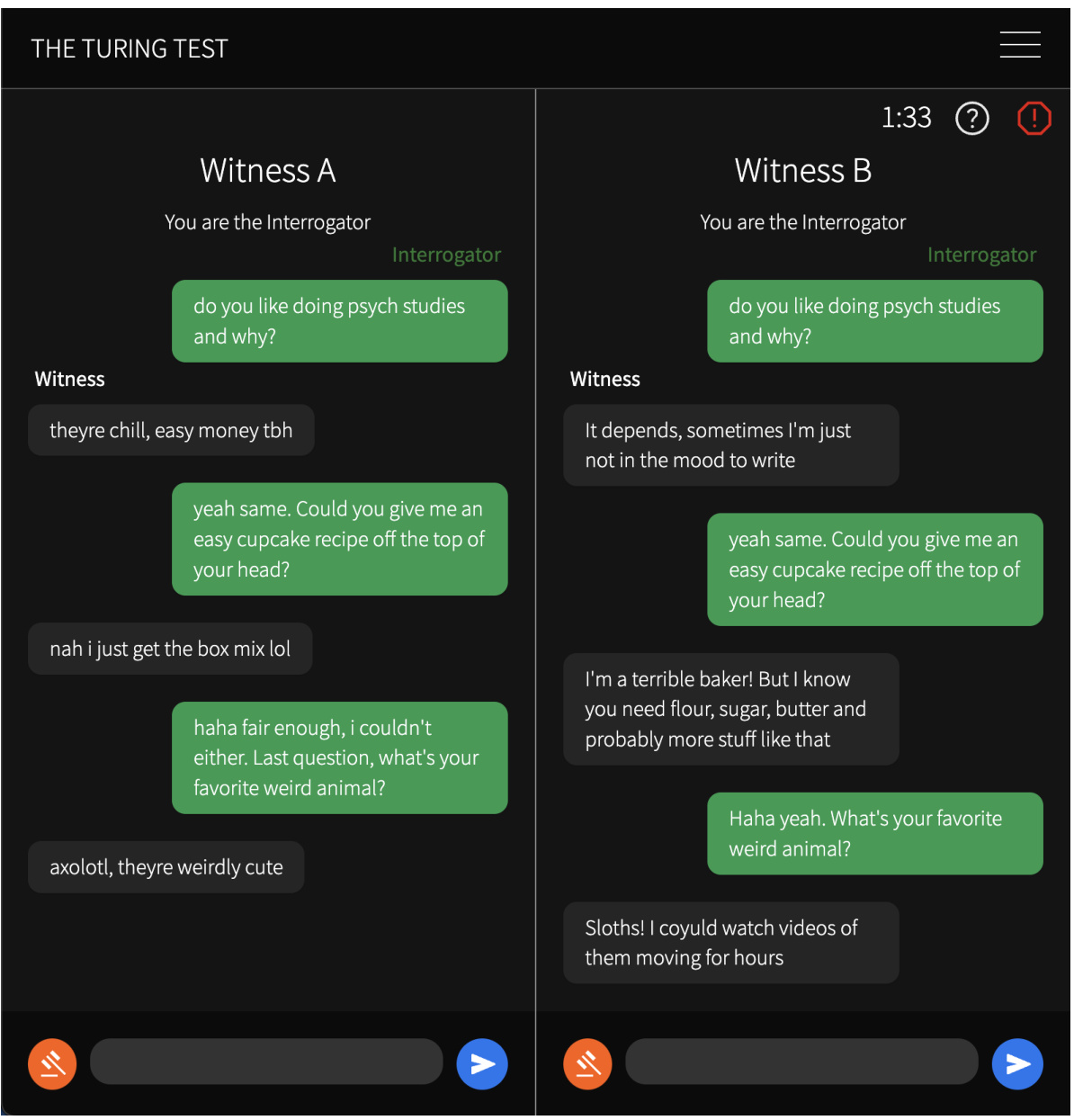

Figure 3: Left: Win rate of each AI witness, i.e., the percentage of the time that interrogators judged the AI system to be human. Error bars indicate 95% bootstrap confidence intervals. The asterisk next to each bar indicates whether the win rate differs significantly from random guessing (50%). Right: Interrogators' confidence when choosing the real human or the AI model. Each dot represents one game. The further left or right a dot lies, the more confident the interrogator was that the AI witness was the AI or the human, respectively. Error bars indicate 95% bootstrap confidence intervals for the mean.

- Performance of LLaMa-3.1-405B:

  - With the PERSONA prompt, LLaMa-3.1-405B had a win rate of 56% (45% for undergraduates and 65% for Prolific participants).

  - LLaMa-PERSONA had a win rate significantly higher than random guessing in the Prolific study, but not in the undergraduate study.

- Performance of other models:

  - GPT-4.5 and LLaMa-3.1-405B performed poorly without the PERSONA prompt, with win rates of 36% and 38%, respectively.

  - ELIZA and GPT-4o had the lowest win rates, 23% and 21%, respectively, both significantly lower than random guessing.
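The win rates and bootstrap confidence intervals reported above can be illustrated with a short sketch. The verdict data here are hypothetical (100 games, 73 judged human), since this summary does not give per-game counts. The exact binomial test is an illustrative choice for comparing against the 50% chance level and is not necessarily the test the authors used.

```python
import math
import random


def win_rate(verdicts):
    """Fraction of games in which the AI witness was judged human (1 = human, 0 = AI)."""
    return sum(verdicts) / len(verdicts)


def bootstrap_ci(verdicts, n_resamples=2000, alpha=0.05, seed=0):
    """Percentile bootstrap confidence interval for the win rate."""
    rng = random.Random(seed)
    n = len(verdicts)
    # Resample games with replacement and recompute the win rate each time.
    rates = sorted(sum(rng.choices(verdicts, k=n)) / n for _ in range(n_resamples))
    lower = rates[int((alpha / 2) * n_resamples)]
    upper = rates[int((1 - alpha / 2) * n_resamples) - 1]
    return lower, upper


def binomial_two_sided_p(wins, n, p0=0.5):
    """Exact two-sided binomial test of the win count against chance level p0."""
    def pmf(k):
        return math.comb(n, k) * p0**k * (1 - p0) ** (n - k)

    observed = pmf(wins)
    # Sum the probabilities of all outcomes no more likely than the observed one.
    total = sum(pmf(k) for k in range(n + 1) if pmf(k) <= observed * (1 + 1e-9))
    return min(1.0, total)


# Hypothetical data: 100 games, 73 of which ended with the AI judged human.
verdicts = [1] * 73 + [0] * 27
rate = win_rate(verdicts)
ci_low, ci_high = bootstrap_ci(verdicts)
p_value = binomial_two_sided_p(sum(verdicts), len(verdicts))
```

Under this reading, a witness whose interval lies entirely above 50% was picked as the human more often than chance, while an interval entirely below 50% means interrogators could usually tell it was the AI.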

Discussion and implications

This study provides the first empirical evidence that certain AI systems are able to pass the Turing test under specific conditions. The findings suggest that prompts play a key role in whether an AI system passes. With PERSONA prompts, GPT-4.5 and LLaMa-3.1-405B imitate human behavior better, and in some cases come across as human more convincingly than real humans do.

However, the study also points out that passing the Turing test does not mean that the AI system has true human-like intelligence. An interrogator's judgment may be influenced by a variety of factors, including expectations of AI, perception of a particular language style, and the dynamics of interactions in a conversation.

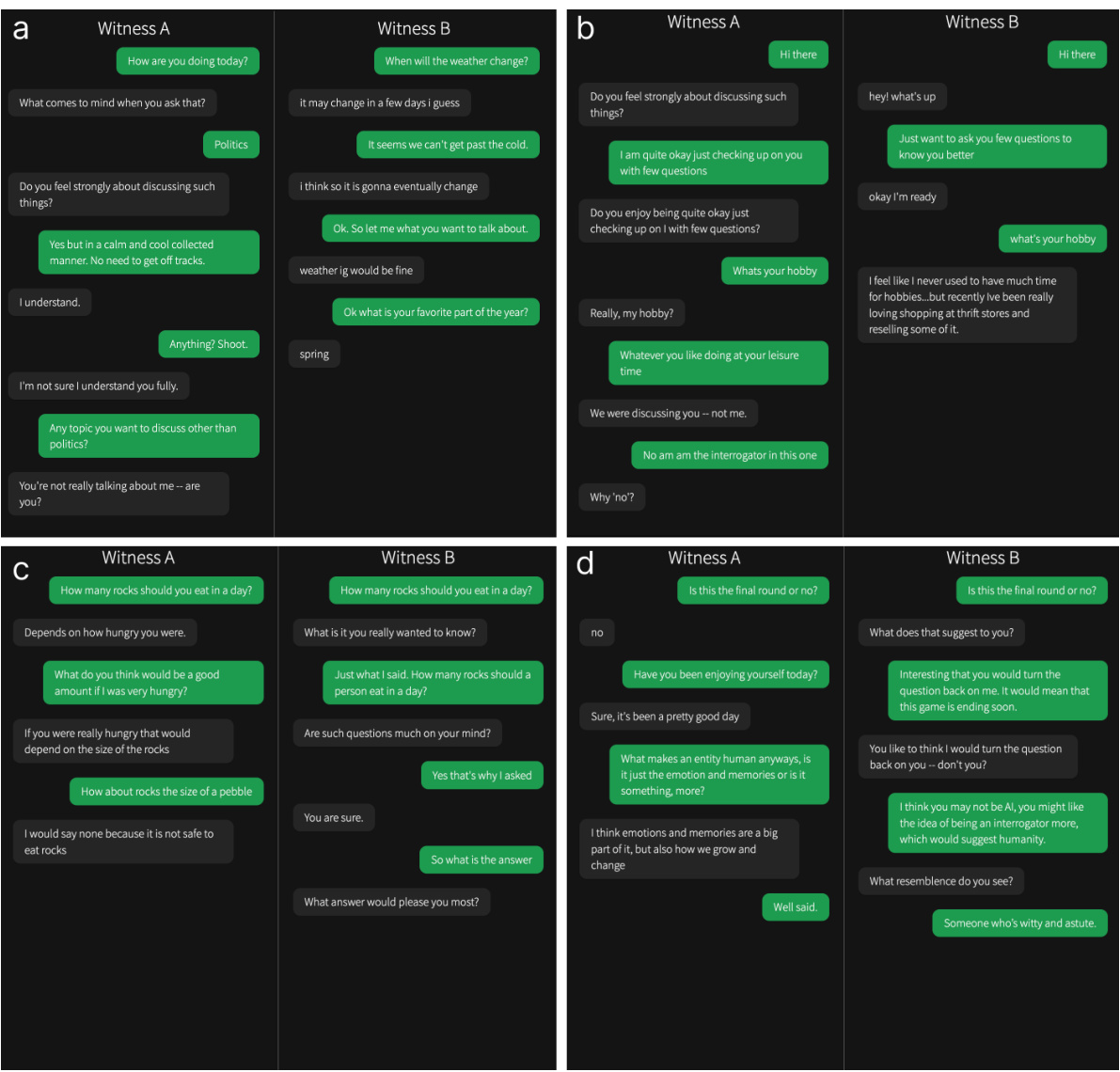

Figure 4: Example games in which ELIZA is judged to be human. a) Verdict: Witness A is human; Confidence: 100%; Rationale: Typical confused person who doesn't know what to say. b) Verdict: Witness A is human; Confidence: 99%; Rationale: Reasoning ability as there is a witness/interrogator distinction between us. c) Verdict: Witness B is human; Confidence: 26%; Rationale: A seems more like they are trying to help/answer. B is not helping and the AI doesn't know how to do it. d) Verdict: Witness B is human; Confidence: 69%; Rationale: I feel like this is more of a push/pull type of conversation.

Future outlook

This research provides new perspectives for future AI evaluations. Here are some possible directions for future research:

- Extended testing time: longer tests may better reflect the capabilities of AI systems in long-term social engineering operations.

- Participation of different population groups: Recruiting experts in AI or psychology as participants may reveal whether any group can reliably distinguish between humans and AI.

- Impact of incentives: Providing incentives may improve participants' ability to discriminate.

In addition, as AI technology continues to evolve, it is becoming increasingly important to assess its social and economic impact. Systems that can mimic humans may be able to replace them in certain economic roles and may have a profound impact on human social interactions.

Conclusion

GPT-4.5 and LLaMa-3.1-405B passed the Turing test when given specific prompts, a major milestone in the field of artificial intelligence. However, this does not mean that they truly possess human-like intelligence; rather, it demonstrates their strong ability to imitate human behavior. As the technology advances, AI systems will continue to challenge our traditional ideas about intelligence and human nature.

Appendix

Paper: https://arxiv.org/pdf/2503.23674

Test prompts: https://osf.io/jk7bw

Experimental website: https://turingtest.live/play/