General Introduction

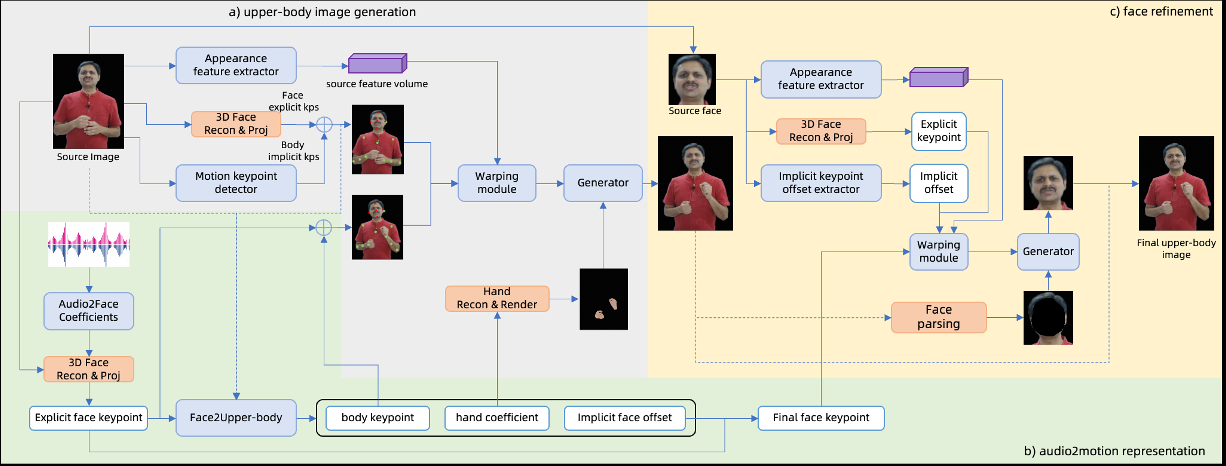

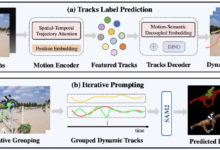

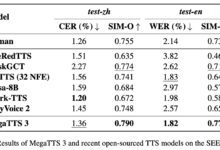

ChatAnyone is a project developed by the HumanAIGC team. It uses AI to generate a portrait video of a digital human with upper-body movement from a single photo and an audio input. Built on a hierarchical motion diffusion model, it generates head movements, gestures, and expressions, which makes it suitable for presenting avatars or animating digital humans. ChatAnyone supports video output at 512×768 resolution and 30 frames per second. The project currently presents its technical details on GitHub but is not yet fully open source, and it has drawn attention from users interested in digital human generation technology.

Function List

- Photo-to-video generation: Generate digital human videos with upper-body movements from a single photo and an audio input.

- Motion control: Generates natural head movements, gestures, and expressions.

- Audio synchronization: Lip movements are matched to the audio to enhance realism.

- High performance: Supports 512×768 resolution at 30 frames per second on a 4090 GPU.

- Technology Showcase: Share the results via a GitHub page for users to learn and explore.

Using Help

ChatAnyone is currently a technology demonstration project and is not fully open source, so it cannot be downloaded or installed directly. The following content is based on official information and describes its functionality and operating logic, so that readers can understand the project ahead of a possible public release.

Main Functions

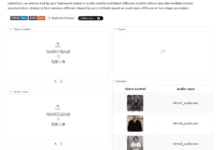

1. Generating videos from photos

- Operating logic: The user provides a portrait photo and an audio clip (e.g., a recording of speech or singing), and the system generates a video of the digital human with upper-body movements, such as head turns and gestures.

- Effect: The output video has a resolution of up to 512×768 and a frame rate of 30 frames per second. The digital human's movements follow the tempo of the audio, which makes the result suitable for presenting virtual avatars.

- Usage: The feature can currently only be seen in the official showcase videos and documentation. A beta version may be opened in the future.
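The output figures above translate into simple arithmetic. The following is a minimal sketch, not ChatAnyone code (the project has no public API). The function names are hypothetical, and only the 512×768 resolution and the 30 fps frame rate come from the project description. It estimates how many frames an audio clip of a given length needs and how much time a generator has per frame to keep pace with playback.

```python
import math

# Figures taken from the project description.
FPS = 30
WIDTH, HEIGHT = 512, 768


def frame_count(audio_seconds: float, fps: int = FPS) -> int:
    """Number of video frames needed to cover an audio clip (hypothetical helper)."""
    # Round first so floating-point noise (e.g. 0.1 * 30) does not add a frame.
    return math.ceil(round(audio_seconds * fps, 6))


def frame_budget_ms(fps: int = FPS) -> float:
    """Time available per frame, in milliseconds, to keep up with playback."""
    return 1000.0 / fps


def raw_pixels_per_second(width: int = WIDTH, height: int = HEIGHT, fps: int = FPS) -> int:
    """Pixels that must be produced every second at the given resolution and rate."""
    return width * height * fps


if __name__ == "__main__":
    print(frame_count(10.0))          # frames for a 10-second clip
    print(frame_budget_ms())          # milliseconds per frame
    print(raw_pixels_per_second())    # pixels per second
```

For a 10-second clip, `frame_count(10.0)` gives 300 frames, and each frame has a budget of about 33.3 ms.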

2. Motion control

- Operating logic: The system generates natural upper-body movements from the audio, including head and hand motion. Users can get a sense of the range of motion from the published examples.

- Effect: The generated digital human can show different movement styles, such as nodding and changing gestures, which makes it more expressive.

- Usage: This feature is in the demonstration phase. Users can see how it works on the GitHub page.

3. Audio synchronization

- Operating logic: Given clear input audio, the system generates lip movements that follow the rhythm of the sound.

- Effect: Lip movements are closely synchronized with the audio, which suits virtual anchors and animated presentations.

- Usage: This can currently only be experienced through the official sample videos. User testing may be supported in the future.
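To illustrate what matching lip movement to audio means at the frame level, the sketch below maps each video frame to the slice of audio samples that plays during it. This is a generic alignment calculation, not ChatAnyone's method. The 16 kHz sample rate and the function name are assumptions made for illustration, and only the 30 fps figure comes from the project description.

```python
from typing import Tuple

FPS = 30                 # frame rate from the project description
SAMPLE_RATE = 16_000     # assumed audio sample rate, not stated by the project


def samples_for_frame(frame_index: int,
                      sample_rate: int = SAMPLE_RATE,
                      fps: int = FPS) -> Tuple[int, int]:
    """Return the [start, end) audio sample range that plays during one video frame.

    Integer arithmetic keeps consecutive windows contiguous even though
    16000 / 30 is not a whole number of samples.
    """
    start = frame_index * sample_rate // fps
    end = (frame_index + 1) * sample_rate // fps
    return start, end


if __name__ == "__main__":
    for i in range(3):
        print(i, samples_for_frame(i))
```

Under these assumptions each frame covers 533 or 534 samples, and the 30 windows of one second add up to exactly 16,000 samples.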

How to get more information

- Visit the official page: Go to https://github.com/HumanAIGC/chat-anyone to view the project description and presentation video.

- Follow updates: The project is not yet open source, but the team may release code or tools in the future. Checking the GitHub repository regularly is recommended.

- Contact the team: For more information, leave a message on GitHub or look for official contact information.

Caveats

- ChatAnyone is currently a technology demonstration project and is not available for direct use.

- Generation requires high-performance hardware (e.g., a 4090 GPU), which makes it difficult for the average user to run locally.

- The project may be open-sourced in the future, and a more detailed guide will be available at that time.

Application Scenarios

- Virtual image presentation: Users can generate digital human videos from photos to present personalized virtual avatars.

- Animation content production: Creators can use the generated half-body digital human videos to make short films or presentation content.

- Technical studies: Researchers can use the project to learn about audio-driven digital human generation techniques.

QA

- Can ChatAnyone chat in real time?

Not currently. It focuses on generating videos from photos and audio and is not a live chat tool.

- What types of photos are supported?

The official demonstrations use portrait photographs. Specific requirements may be given in future documentation.

- Can the generated videos be used commercially?

There is currently no explicit license. Users will need to wait for the open-source release to review the license terms.